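In this article we will learn how to create and manage multiple Kubernetes clusters with Kubernetes Cluster API and ArgoCD. We will use Kind to create a local cluster and, on that cluster, configure the creation of other Kubernetes clusters. To automate the process we will use ArgoCD, which lets us drive the whole workflow from a single Git repository.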

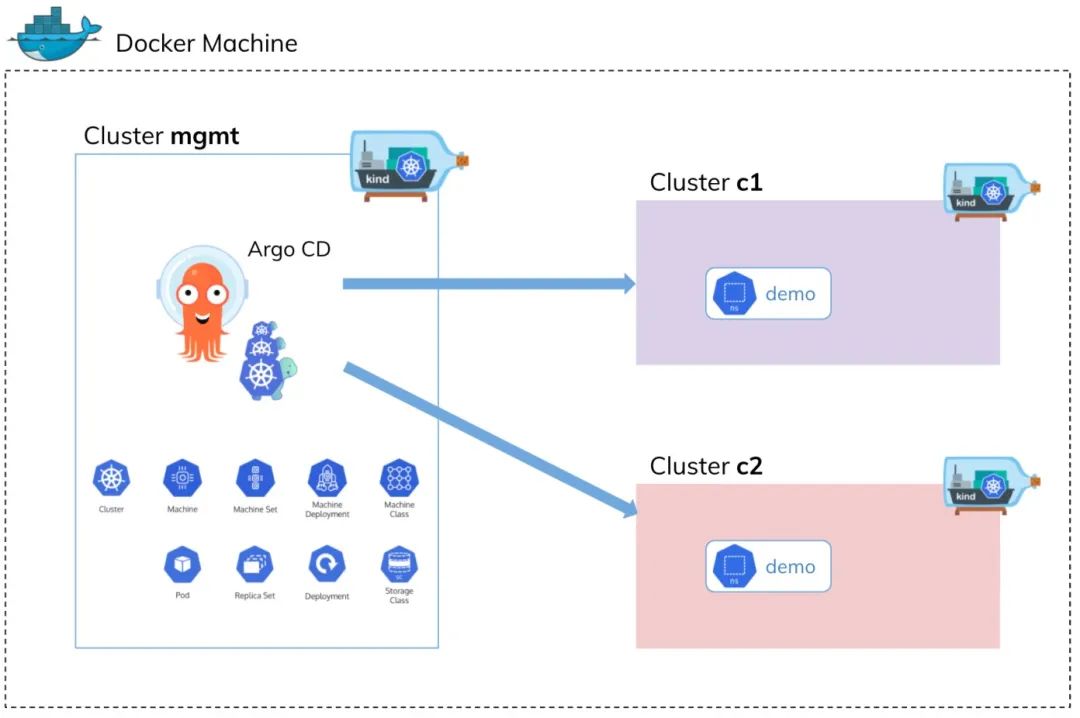

As the architecture diagram above shows, the whole infrastructure runs on Docker. We install Kubernetes Cluster API and ArgoCD on the management cluster and then use these two tools to create new clusters with Kind. Afterwards we apply some Kubernetes objects to the workload clusters (c1, c2), such as Namespace, ResourceQuota, or RoleBinding. The whole process is managed by the ArgoCD instance, with the configuration stored in a Git repository.

First, create the management cluster with Kind. The configuration below mounts the host's Docker socket into the control-plane node:

    # mgmt-cluster-config.yaml
    kind: Cluster
    apiVersion: kind.x-k8s.io/v1alpha4
    nodes:
    - role: control-plane
      extraMounts:
        - hostPath: /var/run/docker.sock
          containerPath: /var/run/docker.sock

Create the cluster from this file:

    $ kind create cluster --config mgmt-cluster-config.yaml --name mgmt
    Creating cluster "mgmt" ...
     ✓ Ensuring node image (kindest/node:v1.23.4) 🖼
     ✓ Preparing nodes 📦
     ✓ Writing configuration 📜
     ✓ Starting control-plane 🕹️
     ✓ Installing CNI 🔌
     ✓ Installing StorageClass 💾
    Set kubectl context to "kind-mgmt"
    You can now use your cluster with:
    kubectl cluster-info --context kind-mgmt
    Not sure what to do next? 😅  Check out https://kind.sigs.k8s.io/docs/user/quick-start/

Then initialize Cluster API on the management cluster with the Docker infrastructure provider:

    $ clusterctl init --infrastructure docker
    Fetching providers
    Skipping installing cert-manager as it is already installed
    Installing Provider="cluster-api" Version="v1.2.0" TargetNamespace="capi-system"
    Installing Provider="bootstrap-kubeadm" Version="v1.2.0" TargetNamespace="capi-kubeadm-bootstrap-system"
    Installing Provider="control-plane-kubeadm" Version="v1.2.0" TargetNamespace="capi-kubeadm-control-plane-system"
    Installing Provider="infrastructure-docker" Version="v1.2.0" TargetNamespace="capd-system"
    Your management cluster has been initialized successfully!
    You can now create your first workload cluster by running the following:
    clusterctl generate cluster [name] --kubernetes-version [version] | kubectl apply -f -

The providers are installed into their own namespaces:

    $ kubectl get ns
    NAME                                STATUS   AGE
    capd-system                         Active   100s
    capi-kubeadm-bootstrap-system       Active   101s
    capi-kubeadm-control-plane-system   Active   100s
    capi-system                         Active   102s
    cert-manager                        Active   35m
    default                             Active   59m
    kube-node-lease                     Active   59m
    kube-public                         Active   59m
    kube-system                         Active   59m
    local-path-storage                  Active   59m

Note that the Pods created when Cluster API is initialized pull their images from gcr.io by default. If that registry is not reachable from your network, you will need to mirror the images yourself.

    $ kubectl get pods -A | grep cap
    capd-system                         capd-controller-manager-85788c974f-nmzkt                         1/1   Running   0   107m
    capi-kubeadm-bootstrap-system       capi-kubeadm-bootstrap-controller-manager-7775897d58-6wp5l       1/1   Running   0   107m
    capi-kubeadm-control-plane-system   capi-kubeadm-control-plane-controller-manager-6958fd6555-w66xg   1/1   Running   0   107m
    capi-system                         capi-controller-manager-648d7b84b8-l5prr                         1/1   Running   0   107m

The following CRDs are now registered on the management cluster:

    $ kubectl get crd
    NAME   CREATED AT
    certificaterequests.cert-manager.io   2022-08-14T02:52:38Z
    certificates.cert-manager.io   2022-08-14T02:52:38Z
    challenges.acme.cert-manager.io   2022-08-14T02:52:38Z
    clusterclasses.cluster.x-k8s.io   2022-08-14T03:26:48Z
    clusterissuers.cert-manager.io   2022-08-14T02:52:38Z
    clusterresourcesetbindings.addons.cluster.x-k8s.io   2022-08-14T03:26:48Z
    clusterresourcesets.addons.cluster.x-k8s.io   2022-08-14T03:26:48Z
    clusters.cluster.x-k8s.io   2022-08-14T03:26:48Z
    dockerclusters.infrastructure.cluster.x-k8s.io   2022-08-14T03:26:50Z
    dockerclustertemplates.infrastructure.cluster.x-k8s.io   2022-08-14T03:26:51Z
    dockermachinepools.infrastructure.cluster.x-k8s.io   2022-08-14T03:26:51Z
    dockermachines.infrastructure.cluster.x-k8s.io   2022-08-14T03:26:51Z
    dockermachinetemplates.infrastructure.cluster.x-k8s.io   2022-08-14T03:26:51Z
    extensionconfigs.runtime.cluster.x-k8s.io   2022-08-14T03:26:48Z
    ipaddressclaims.ipam.cluster.x-k8s.io   2022-08-14T03:26:49Z
    ipaddresses.ipam.cluster.x-k8s.io   2022-08-14T03:26:49Z
    issuers.cert-manager.io   2022-08-14T02:52:38Z
    kubeadmconfigs.bootstrap.cluster.x-k8s.io   2022-08-14T03:26:49Z
    kubeadmconfigtemplates.bootstrap.cluster.x-k8s.io   2022-08-14T03:26:49Z
    kubeadmcontrolplanes.controlplane.cluster.x-k8s.io   2022-08-14T03:26:50Z
    kubeadmcontrolplanetemplates.controlplane.cluster.x-k8s.io   2022-08-14T03:26:50Z
    machinedeployments.cluster.x-k8s.io   2022-08-14T03:26:49Z
    machinehealthchecks.cluster.x-k8s.io   2022-08-14T03:26:49Z
    machinepools.cluster.x-k8s.io   2022-08-14T03:26:49Z
    machines.cluster.x-k8s.io   2022-08-14T03:26:49Z
    machinesets.cluster.x-k8s.io   2022-08-14T03:26:49Z
    orders.acme.cert-manager.io   2022-08-14T02:52:38Z
    providers.clusterctl.cluster.x-k8s.io   2022-08-14T02:50:54Z

Next, install ArgoCD on the management cluster and check that its Pods are running:

    $ kubectl apply -f https://raw.githubusercontent.com/argoproj/argo-cd/v2.4.9/manifests/install.yaml
    $ kubectl get pods
    NAME                                                READY   STATUS    RESTARTS   AGE
    argocd-application-controller-0                     1/1     Running   0          171m
    argocd-applicationset-controller-86c8556b6d-dxlhs   1/1     Running   0          171m
    argocd-dex-server-5c65569f55-mff75                  1/1     Running   0          171m
    argocd-notifications-controller-f5d57bc55-4hdqd     1/1     Running   0          171m
    argocd-redis-65596bf87-2d5kx                        1/1     Running   0          171m
    argocd-repo-server-5bfd7c4cfd-4hxn5                 1/1     Running   0          171m
    argocd-server-8544dd9f89-cdgrw                      1/1     Running   0          171m

Expose the ArgoCD server locally with a port-forward:

    $ kubectl port-forward svc/argocd-server 8080:80

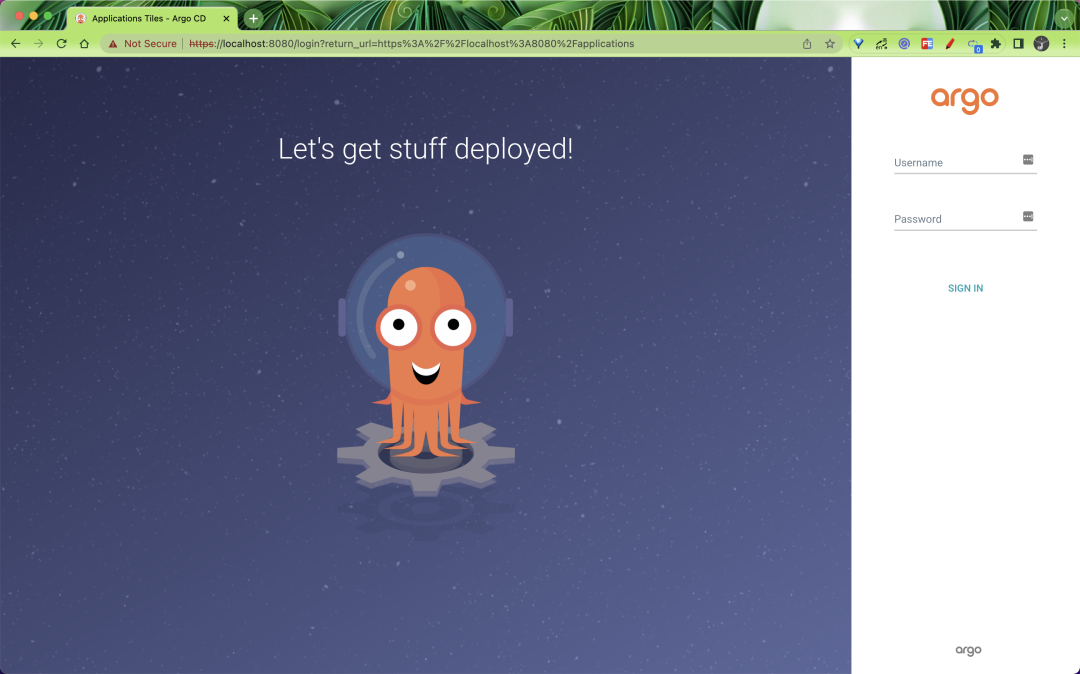

By default, the initial password of the admin account is generated automatically. It is stored unencrypted (base64-encoded, like all Secret data) in the `password` field of a Secret named `argocd-initial-admin-secret` in the namespace where ArgoCD is installed, and can be retrieved with:

    $ kubectl get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d && echo
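The `base64 -d` step in the command above is needed because Kubernetes returns every value under a Secret's `data` field base64-encoded. A minimal Python sketch of the same decoding step; the helper name `decode_secret_value` and the sample value are made up for illustration and are not an ArgoCD API:

```python
import base64


def decode_secret_value(encoded: str) -> str:
    """Decode one base64-encoded value taken from a Secret's `data` map."""
    return base64.b64decode(encoded).decode("utf-8")


# Hypothetical value standing in for the output of
# `kubectl get secret ... -o jsonpath="{.data.password}"`.
sample = base64.b64encode(b"example-password").decode("ascii")

if __name__ == "__main__":
    print(decode_secret_value(sample))
```

The decoded string is what you would type into the ArgoCD login form.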

$ clusterctl generate cluster c1 --flavor development \

--infrastructure docker \

--kubernetes-version v1.21.1 \

--control-plane-machine-count=3 \

--worker-machine-count=3 \

> c1-clusterapi.yamlapiVersion: cluster.x-k8s.io/v1beta1

kind: Cluster

metadata:

name: {{ .Values.cluster.name }}

namespace: default

spec:

clusterNetwork:

pods:

cidrBlocks:

- 192.168.0.0/16

serviceDomain: cluster.local

services:

cidrBlocks:

- 10.128.0.0/12

controlPlaneRef:

apiVersion: controlplane.cluster.x-k8s.io/v1beta1

kind: KubeadmControlPlane

name: {{ .Values.cluster.name }}-control-plane

namespace: default

infrastructureRef:

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerCluster

name: {{ .Values.cluster.name }}

namespace: default

---

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerCluster

metadata:

name: {{ .Values.cluster.name }}

namespace: default

---

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerMachineTemplate

metadata:

name: {{ .Values.cluster.name }}-control-plane

namespace: default

spec:

template:

spec:

extraMounts:

- containerPath: /var/run/docker.sock

hostPath: /var/run/docker.sock

---

apiVersion: controlplane.cluster.x-k8s.io/v1beta1

kind: KubeadmControlPlane

metadata:

name: {{ .Values.cluster.name }}-control-plane

namespace: default

spec:

kubeadmConfigSpec:

clusterConfiguration:

apiServer:

certSANs:

- localhost

- 127.0.0.1

controllerManager:

extraArgs:

enable-hostpath-provisioner: "true"

initConfiguration:

nodeRegistration:

criSocket: /var/run/containerd/containerd.sock

kubeletExtraArgs:

cgroup-driver: cgroupfs

eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%

joinConfiguration:

nodeRegistration:

criSocket: /var/run/containerd/containerd.sock

kubeletExtraArgs:

cgroup-driver: cgroupfs

eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%

machineTemplate:

infrastructureRef:

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerMachineTemplate

name: {{ .Values.cluster.name }}-control-plane

namespace: default

replicas: {{ .Values.cluster.masterNodes }}

version: {{ .Values.cluster.version }}

---

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerMachineTemplate

metadata:

name: {{ .Values.cluster.name }}-md-0

namespace: default

spec:

template:

spec: {}

---

apiVersion: bootstrap.cluster.x-k8s.io/v1beta1

kind: KubeadmConfigTemplate

metadata:

name: {{ .Values.cluster.name }}-md-0

namespace: default

spec:

template:

spec:

joinConfiguration:

nodeRegistration:

kubeletExtraArgs:

cgroup-driver: cgroupfs

eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%

---

apiVersion: cluster.x-k8s.io/v1beta1

kind: MachineDeployment

metadata:

name: {{ .Values.cluster.name }}-md-0

namespace: default

spec:

clusterName: {{ .Values.cluster.name }}

replicas: {{ .Values.cluster.workerNodes }}

selector:

matchLabels: null

template:

spec:

bootstrap:

configRef:

apiVersion: bootstrap.cluster.x-k8s.io/v1beta1

kind: KubeadmConfigTemplate

name: {{ .Values.cluster.name }}-md-0

namespace: default

clusterName: {{ .Values.cluster.name }}

infrastructureRef:

apiVersion: infrastructure.cluster.x-k8s.io/v1beta1

kind: DockerMachineTemplate

name: {{ .Values.cluster.name }}-md-0

namespace: default

version: {{ .Values.cluster.version }}cluster:

name: c1

masterNodes: 3

workerNodes: 3

version: v1.21.1cluster:

name: c2

masterNodes: 1

workerNodes: 1

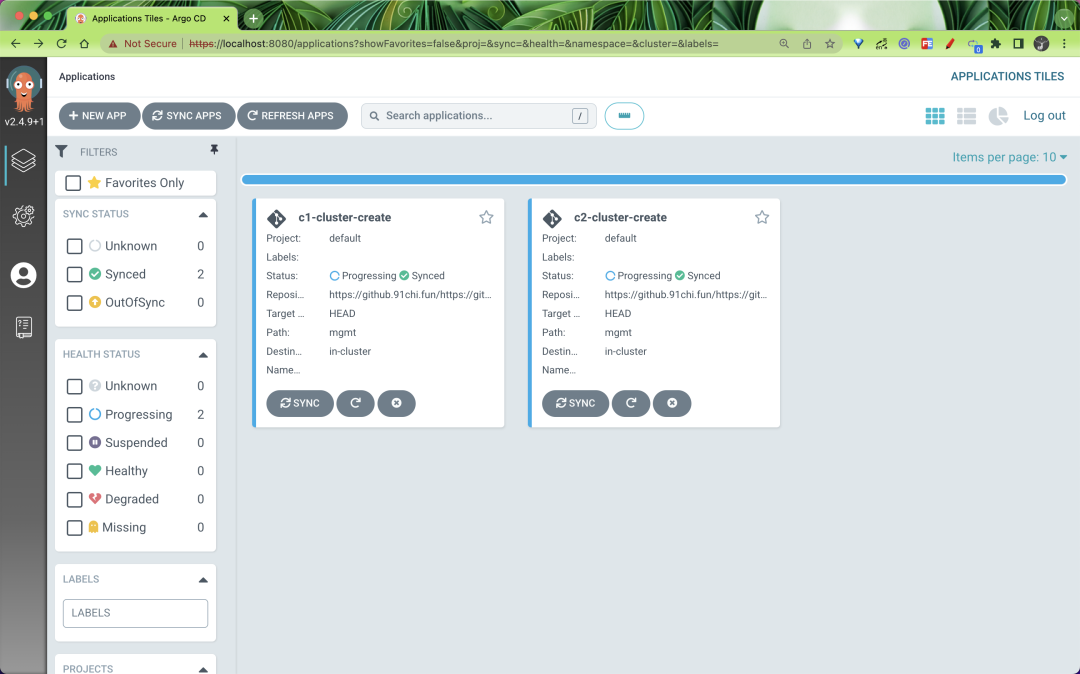

version: v1.21.1apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: c1-cluster-create

spec:

destination:

name: ""

namespace: ""

server: "https://kubernetes.default.svc"

source:

path: mgmt

repoURL: "https://github.com/cnych/sample-kubernetes-cluster-api-argocd.git"

targetRevision: HEAD

helm:

valueFiles:

- values-c1.yaml

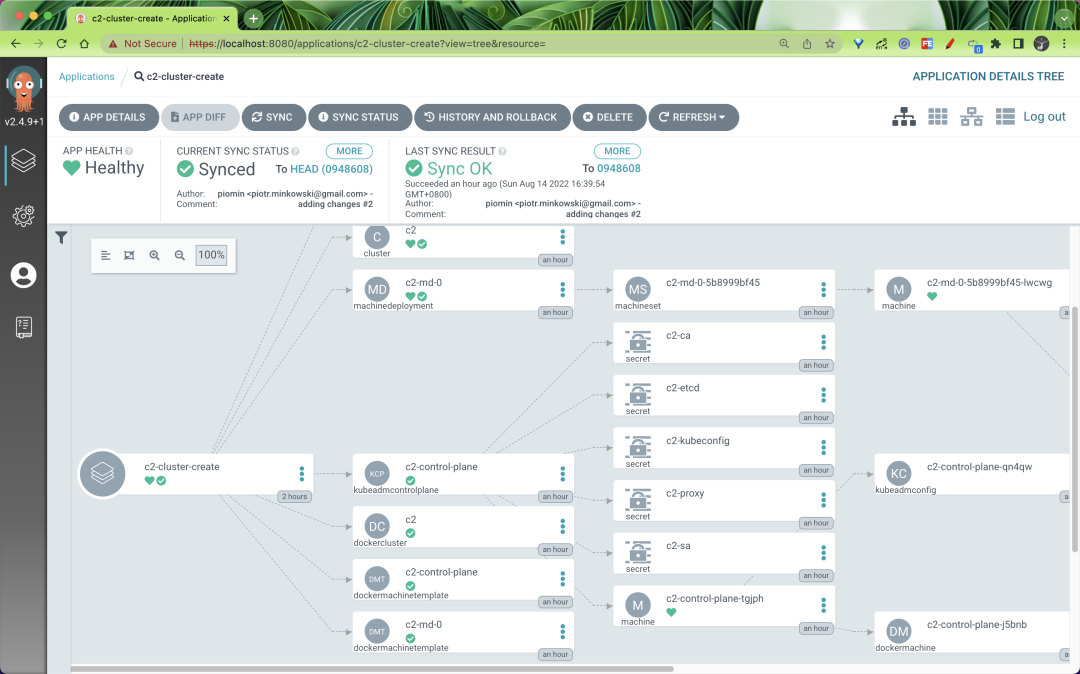

project: defaultapiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: c2-cluster-create

spec:

destination:

name: ""

namespace: ""

server: "https://kubernetes.default.svc"

source:

path: mgmt

repoURL: "https://github.com/cnych/sample-kubernetes-cluster-api-argocd.git"

targetRevision: HEAD

helm:

valueFiles:

- values-c2.yaml

project: defaultcluster-admin 角色添加到 ArgoCD 使用的 argocd-application-controller ServiceAccount。apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: cluster-admin-argocd-contoller

subjects:

- kind: ServiceAccount

name: argocd-application-controller

namespace: default

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin
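The two cluster-creation Applications shown earlier differ only in their name and values file, so adding another cluster is mostly mechanical. The following is a minimal Python sketch of that pattern, assuming the `<cluster>-cluster-create` and `values-<cluster>.yaml` naming is kept. The helper `cluster_create_app` is hypothetical and is not part of ArgoCD or the sample repository. It prints JSON, which `kubectl apply -f -` accepts as well as YAML:

```python
import json

REPO_URL = "https://github.com/cnych/sample-kubernetes-cluster-api-argocd.git"


def cluster_create_app(cluster: str) -> dict:
    """Build the ArgoCD Application that renders the `mgmt` chart for one cluster."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": f"{cluster}-cluster-create"},
        "spec": {
            "project": "default",
            # The Cluster API objects are created on the management cluster itself.
            "destination": {"server": "https://kubernetes.default.svc"},
            "source": {
                "repoURL": REPO_URL,
                "path": "mgmt",
                "targetRevision": "HEAD",
                # Assumed naming convention: one values file per cluster.
                "helm": {"valueFiles": [f"values-{cluster}.yaml"]},
            },
        },
    }


if __name__ == "__main__":
    for name in ("c1", "c2"):
        print(json.dumps(cluster_create_app(name), indent=2))
```

Keeping the per-cluster differences in the values file means the Application manifests themselves stay identical apart from two strings.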

$ kind get clusters

c1

c2

mgmt$ clusterctl describe cluster c1

NAME READY SEVERITY REASON SINCE MESSAGE

Cluster/c1 True 4m4s

├─ClusterInfrastructure - DockerCluster/c1 True 7m49s

├─ControlPlane - KubeadmControlPlane/c1-control-plane True 4m4s

│ └─3 Machines... True 6m26s See c1-control-plane-p54fn, c1-control-plane-w4v88, ...

└─Workers

└─MachineDeployment/c1-md-0 False Warning WaitingForAvailableMachines 8m54s Minimum availability requires 3 replicas, current 0 available

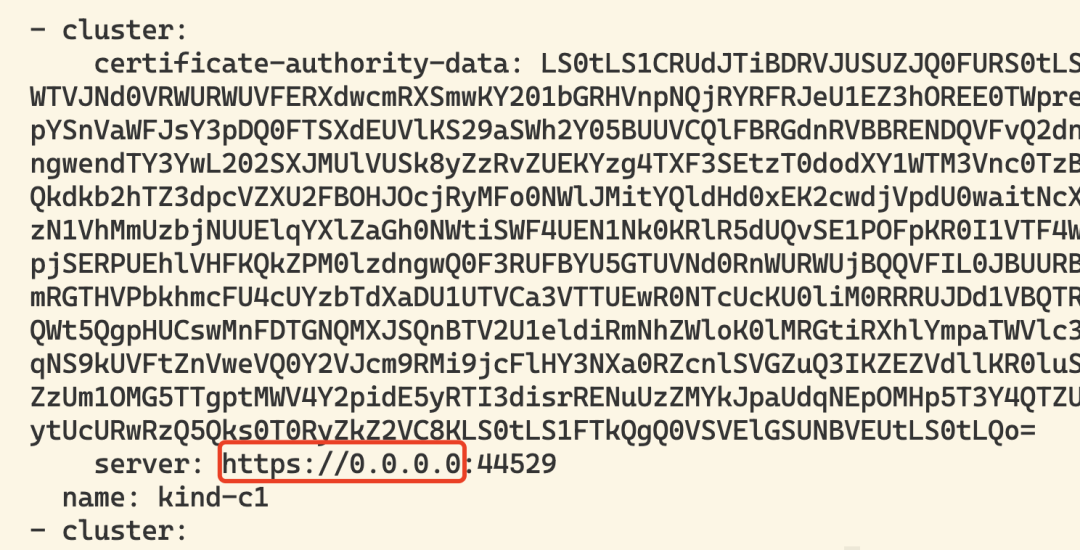

└─3 Machines... True 5m45s See c1-md-0-6f646885d6-8wr47, c1-md-0-6f646885d6-m5nwb, ...$ kind export kubeconfig --name c1

$ kind export kubeconfig --name c2

Next, install the Calico CNI on both clusters:

    $ kubectl apply -f https://docs.projectcalico.org/v3.20/manifests/calico.yaml --context kind-c1
    $ kubectl apply -f https://docs.projectcalico.org/v3.20/manifests/calico.yaml --context kind-c2

Afterwards, check the cluster status again, here for `c2`:

    $ clusterctl describe cluster c2
    NAME                                                   READY  SEVERITY  REASON  SINCE  MESSAGE
    Cluster/c2                                             True                     79m
    ├─ClusterInfrastructure - DockerCluster/c2             True                     80m
    ├─ControlPlane - KubeadmControlPlane/c2-control-plane  True                     79m
    │ └─Machine/c2-control-plane-tgjph                     True                     79m
    └─Workers
      └─MachineDeployment/c2-md-0                          True                     81s
        └─Machine/c2-md-0-5b8999bf45-lwcwg                 True                     79m

apiVersion: v1

kind: Namespace

metadata:

name: demo

---

apiVersion: v1

kind: ResourceQuota

metadata:

name: demo-quota

namespace: demo

spec:

hard:

pods: "10"

requests.cpu: "1"

requests.memory: 1Gi

limits.cpu: "2"

limits.memory: 4Gi

---

apiVersion: v1

kind: LimitRange

metadata:

name: demo-limitrange

namespace: demo

spec:

limits:

- default:

memory: 512Mi

cpu: 500m

defaultRequest:

cpu: 100m

memory: 128Mi

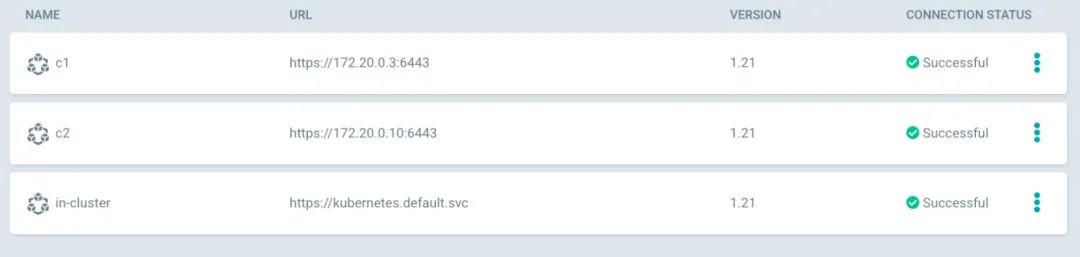

type: Container$ argocd login localhost:8080$ argocd cluster add kind-c1

$ argocd cluster add kind-c2$ kubectl get secrets | grep kubeconfig

c1-kubeconfig cluster.x-k8s.io/secret 1 85m

c2-kubeconfig cluster.x-k8s.io/secret 1 57m 在后台,ArgoCD 创建了一个与每个托管集群相关的 Secret,根据标签名称和值识别:argocd.argoproj.io/secret-type: cluster。

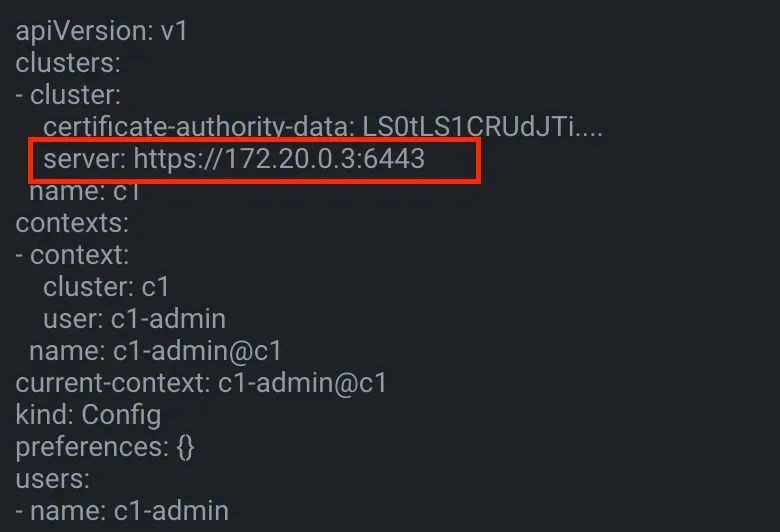

在后台,ArgoCD 创建了一个与每个托管集群相关的 Secret,根据标签名称和值识别:argocd.argoproj.io/secret-type: cluster。apiVersion: v1

kind: Secret

metadata:

name: c1-cluster-secret

labels:

argocd.argoproj.io/secret-type: cluster

type: Opaque

data:

name: c1

server: https://172.20.0.3:6443

config: |

{

"tlsClientConfig": {

"insecure": false,

"caData": "<base64 encoded certificate>",

"certData": "<base64 encoded certificate>",

"keyData": "<base64 encoded key>"

}

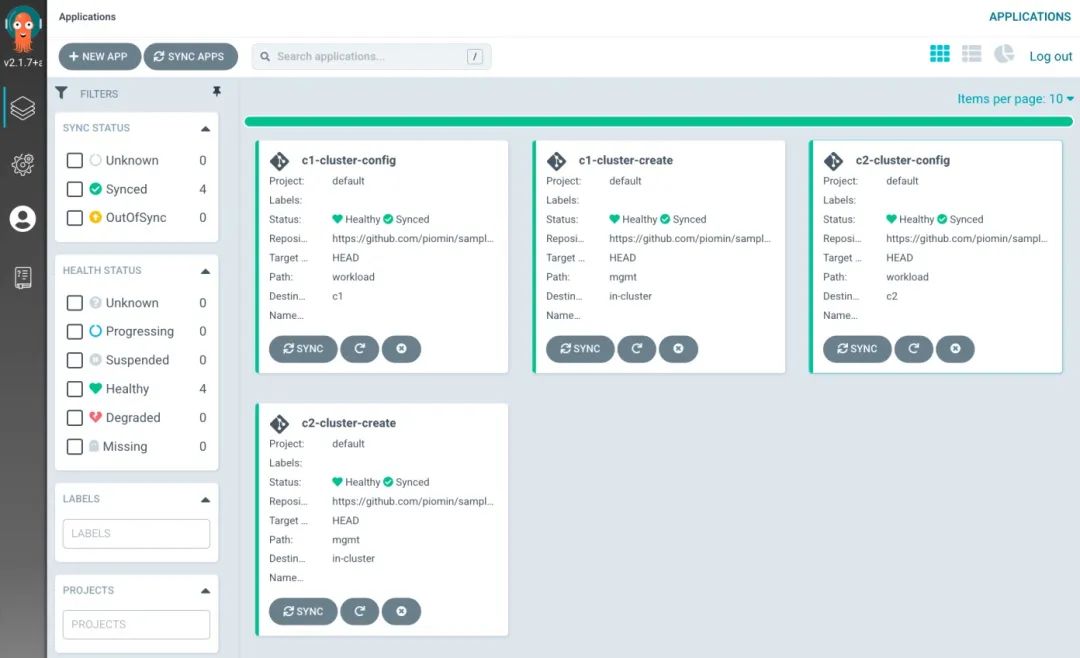

} 最后,让我们创建 ArgoCD 应用来管理两个工作负载集群上的配置。
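Kubernetes expects every value under a Secret's `data` field to be base64-encoded, while plain-text values belong under `stringData`. The following Python sketch builds the cluster Secret in its encoded `data` form, which is how it appears when read back from the API. The helper names `b64` and `cluster_secret` and the placeholder certificate strings are assumptions for illustration, not ArgoCD APIs:

```python
import base64
import json


def b64(text: str) -> str:
    """Base64-encode a string the way Secret `data` values are stored."""
    return base64.b64encode(text.encode("utf-8")).decode("ascii")


def cluster_secret(name: str, server: str, ca: str, cert: str, key: str) -> dict:
    """Build an ArgoCD cluster Secret with base64-encoded `data` values."""
    config = {
        "tlsClientConfig": {
            "insecure": False,
            "caData": ca,
            "certData": cert,
            "keyData": key,
        }
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": f"{name}-cluster-secret",
            # ArgoCD recognizes cluster Secrets by this label.
            "labels": {"argocd.argoproj.io/secret-type": "cluster"},
        },
        "type": "Opaque",
        "data": {
            "name": b64(name),
            "server": b64(server),
            "config": b64(json.dumps(config)),
        },
    }


if __name__ == "__main__":
    # Placeholder credentials; real values come from the cluster's kubeconfig.
    secret = cluster_secret("c1", "https://172.20.0.3:6443", "<ca>", "<cert>", "<key>")
    print(json.dumps(secret, indent=2))
```

In practice `argocd cluster add` creates this Secret for you; writing it by hand is mainly useful when clusters are registered declaratively from Git.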

最后,让我们创建 ArgoCD 应用来管理两个工作负载集群上的配置。apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: c1-cluster-config

spec:

project: default

source:

repoURL: "https://github.com/cnych/sample-kubernetes-cluster-api-argocd.git"

path: workload

targetRevision: HEAD

destination:

server: "https://172.20.0.3:6443"apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: c2-cluster-config

spec:

project: default

source:

repoURL: "https://github.com/cnych/sample-kubernetes-cluster-api-argocd.git"

path: workload

targetRevision: HEAD

destination:

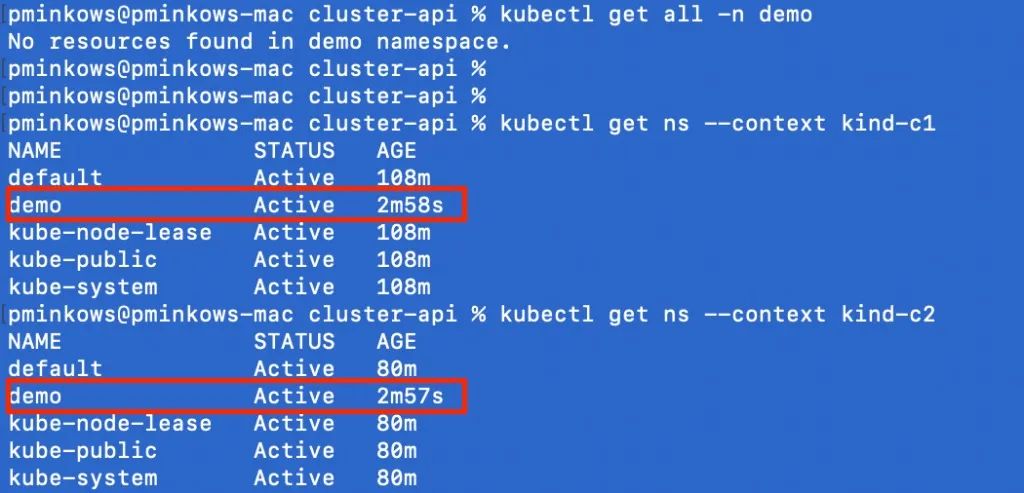

server: "https://172.20.0.10:6443" 最后,让我们验证配置是否已成功应用于目标集群。

最后,让我们验证配置是否已成功应用于目标集群。
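When checking the applied configuration, it helps to know what the ResourceQuota and LimitRange in the `demo` namespace imply together. The following Python sketch is a back-of-the-envelope calculation, assuming single-container Pods that set no resources of their own and therefore receive the LimitRange defaults. The helper names are made up for illustration, and the parsers only handle the unit forms used in this article:

```python
def to_millicores(cpu: str) -> int:
    """Convert a CPU quantity such as "500m" or "2" to millicores."""
    return int(cpu[:-1]) if cpu.endswith("m") else int(float(cpu) * 1000)


def to_mebibytes(mem: str) -> int:
    """Convert a memory quantity such as "512Mi" or "4Gi" to MiB."""
    units = {"Mi": 1, "Gi": 1024}
    return int(mem[:-2]) * units[mem[-2:]]


# Values copied from the ResourceQuota (demo-quota).
quota = {
    "pods": 10,
    "requests.cpu": "1",
    "requests.memory": "1Gi",
    "limits.cpu": "2",
    "limits.memory": "4Gi",
}

# Per-container defaults copied from the LimitRange (demo-limitrange).
defaults = {
    "requests.cpu": "100m",
    "requests.memory": "128Mi",
    "limits.cpu": "500m",
    "limits.memory": "512Mi",
}


def max_default_pods(quota: dict, defaults: dict) -> int:
    """Return how many default-sized single-container Pods fit in the quota."""
    bounds = [quota["pods"]]
    for key in ("requests.cpu", "limits.cpu"):
        bounds.append(to_millicores(quota[key]) // to_millicores(defaults[key]))
    for key in ("requests.memory", "limits.memory"):
        bounds.append(to_mebibytes(quota[key]) // to_mebibytes(defaults[key]))
    return min(bounds)


if __name__ == "__main__":
    print(max_default_pods(quota, defaults))  # prints 4
```

The binding constraint is `limits.cpu`: 2 CPUs divided by the 500m default limit allows only four such Pods, even though the Pod count quota is 10.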
