Hive Database Storage Locations & DDL

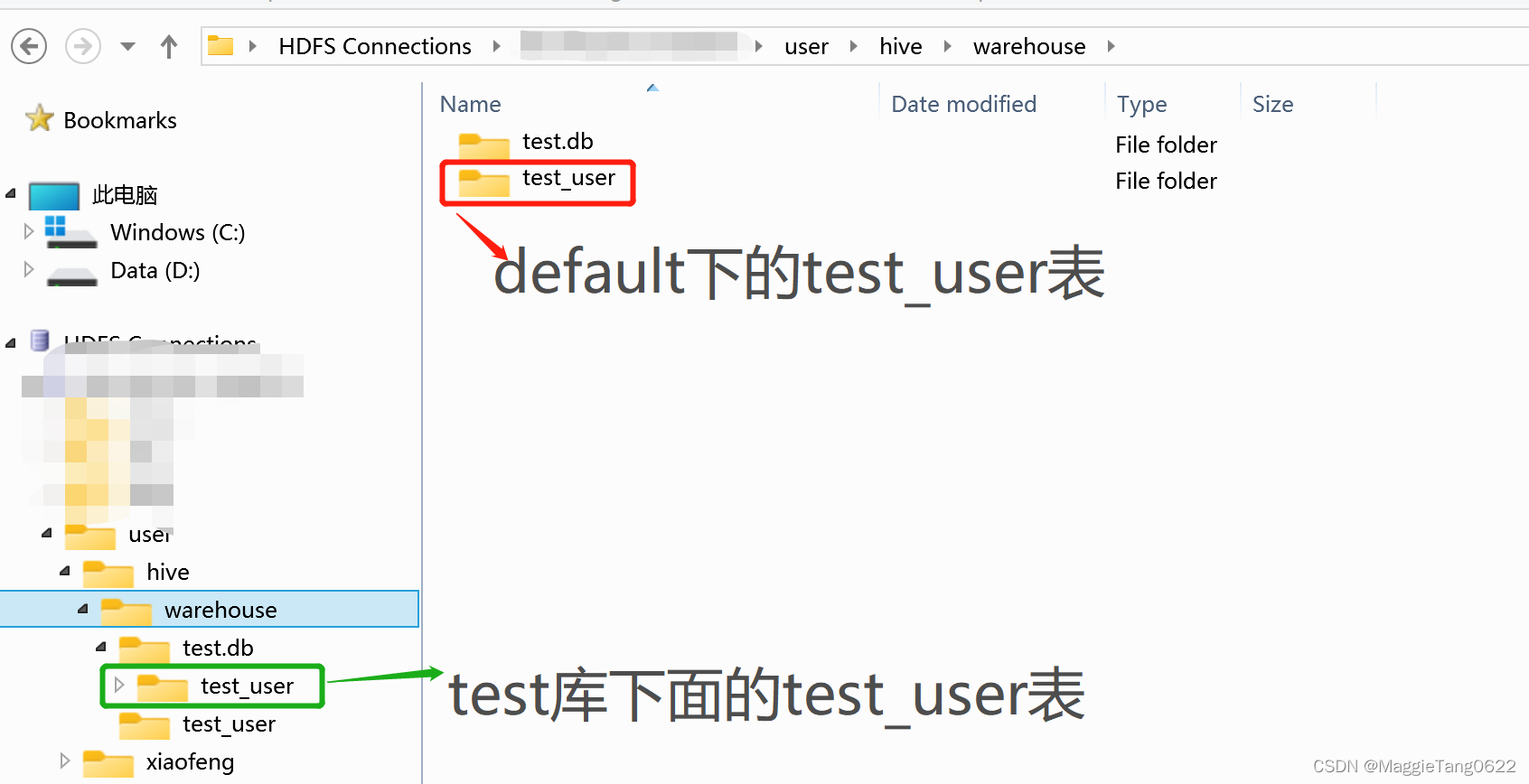

Every Hive database and table corresponds to a path on the distributed file system:

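| (-) | default database | Non-default database |
|---|---|---|
| Database location | /user/hive/warehouse | /user/hive/warehouse/database_name.db |
| Location of a newly created table | /user/hive/warehouse/table_name | /user/hive/warehouse/database_name.db/table_name |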
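The path rules in the table above can be sketched in a few lines of Python. This is only an illustration: the helper names `database_path` and `table_path` and the table name `orders` are hypothetical, and the warehouse root is assumed to be `/user/hive/warehouse` as in this walkthrough (on a real cluster it comes from `hive.metastore.warehouse.dir`). The rules apply only when no explicit `LOCATION` is given.

```python
import posixpath

# Assumed warehouse root; the real value comes from hive.metastore.warehouse.dir.
WAREHOUSE_ROOT = "/user/hive/warehouse"


def database_path(db_name: str = "default") -> str:
    """Return the default HDFS directory of a database (hypothetical helper)."""
    if db_name == "default":
        # The default database lives directly in the warehouse root.
        return WAREHOUSE_ROOT
    # Any other database gets its own "<name>.db" directory under the root.
    return posixpath.join(WAREHOUSE_ROOT, f"{db_name}.db")


def table_path(table_name: str, db_name: str = "default") -> str:
    """Return the default HDFS directory of a newly created table."""
    return posixpath.join(database_path(db_name), table_name)


print(database_path("myhive_test1"))  # /user/hive/warehouse/myhive_test1.db
print(table_path("test_user"))        # /user/hive/warehouse/test_user
print(table_path("orders", "test"))   # /user/hive/warehouse/test.db/orders
```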

mysql> use myhive; -- myhive is the MySQL database name configured in hive-site.xml

mysql> show tables;

+-------------------------------+

| Tables_in_myhive |

+-------------------------------+

| aux_table |

| bucketing_cols |

| cds |

| columns_v2 |

| compaction_queue |

| completed_compactions |

| completed_txn_components |

| ctlgs |

| database_params [stores database parameter settings] |

| db_privs |

| dbs [stores database metadata] |

| delegation_tokens |

| func_ru |

Syntax:

CREATE [REMOTE] (DATABASE|SCHEMA) [IF NOT EXISTS] database_name

[COMMENT database_comment]

[LOCATION hdfs_path]

[MANAGEDLOCATION hdfs_path]

[WITH DBPROPERTIES (property_name=property_value, …)];

[ ]: the enclosed clause is optional

(DATABASE|SCHEMA): choose exactly one of the alternatives
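To make the optional clauses concrete, here is a minimal Python sketch that assembles a `CREATE DATABASE` statement in the clause order given by the syntax above. The function `build_create_database` and its parameters are hypothetical names for illustration, the `REMOTE` and `MANAGEDLOCATION` parts are left out for brevity, and the backslash escaping of quotes is an assumption about how string literals should be written.

```python
from typing import Mapping, Optional


def _quote(value: str) -> str:
    """Wrap a value in single quotes (assumed backslash escaping)."""
    return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'"


def build_create_database(
    name: str,
    if_not_exists: bool = False,
    comment: Optional[str] = None,
    location: Optional[str] = None,
    properties: Optional[Mapping[str, str]] = None,
) -> str:
    """Assemble a CREATE DATABASE statement from its optional clauses."""
    parts = ["CREATE DATABASE"]
    if if_not_exists:
        parts.append("IF NOT EXISTS")
    parts.append(name)
    # Each optional clause is emitted only when a value was supplied.
    if comment is not None:
        parts.append(f"COMMENT {_quote(comment)}")
    if location is not None:
        parts.append(f"LOCATION {_quote(location)}")
    if properties:
        pairs = ", ".join(
            f"{_quote(key)}={_quote(value)}" for key, value in properties.items()
        )
        parts.append(f"WITH DBPROPERTIES ({pairs})")
    return " ".join(parts) + ";"


print(build_create_database("myhive_test1", if_not_exists=True))
# CREATE DATABASE IF NOT EXISTS myhive_test1;
print(build_create_database("myhive_test3", location="/user/hive/myhive_test3"))
# CREATE DATABASE myhive_test3 LOCATION '/user/hive/myhive_test3';
```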

[xiaofeng@maggie101 ~]$ beeline.sh

// Connect to HiveServer2 and create the myhive_test1 database

0: jdbc:hive2://localhost:10000/> Create database myhive_test1;

// List the contents of /user/hive/warehouse/

[xiaofeng@maggie101 ~]$ hadoop fs -ls /user/hive/warehouse/

Found 3 items

drwxr-xr-x - xiaofeng supergroup 0 2022-11-26 00:17 /user/hive/warehouse/myhive_test1.db

drwxr-xr-x - xiaofeng supergroup 0 2022-11-25 01:51 /user/hive/warehouse/test.db

drwxr-xr-x - xiaofeng supergroup 0 2022-11-25 11:04 /user/hive/warehouse/test_user

0: jdbc:hive2://localhost:10000/> Create database myhive_test1;

Error: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. Database myhive_test1 already exists (state=42000,code=1)

0: jdbc:hive2://localhost:10000/> Create database IF NOT EXISTS myhive_test1;

No rows affected (0.03 seconds)

0: jdbc:hive2://localhost:10000/> Create database myhive_test3 LOCATION '/user/hive/myhive_test3';

Create database myhive_test4 COMMENT 'maggie创建的Hive测试数据库';

Create database myhive_test5 COMMENT 'maggie创建的Hive测试数据库5' WITH DBPROPERTIES ('cretor'='maggie', 'date'='2022-11-26');

0: jdbc:hive2://localhost:10000/> show databases;

+----------------+

| database_name |

+----------------+

| default |

| myhive_test1 |

| myhive_test2 |

| myhive_test3 |

| myhive_test4 |

| myhive_test5 |

| test |

+----------------+

7 rows selected (0.088 seconds)

0: jdbc:hive2://localhost:10000/> show databases like 'myhive*';

+----------------+

| database_name |

+----------------+

| myhive_test1 |

| myhive_test2 |

| myhive_test3 |

| myhive_test4 |

| myhive_test5 |

+----------------+

5 rows selected (0.047 seconds)

0: jdbc:hive2://localhost:10000/> desc database myhive_test1;

+---------------+----------+----------------------------------------------------+-------------+-------------+-------------+

| db_name | comment | location | owner_name | owner_type | parameters |

+---------------+----------+----------------------------------------------------+-------------+-------------+-------------+

| myhive_test1 | | hdfs://maggie101:9000/user/hive/warehouse/myhive_test1.db | xiaofeng | USER | |

+---------------+----------+----------------------------------------------------+-------------+-------------+-------------+

1 row selected (0.052 seconds)

0: jdbc:hive2://localhost:10000/> desc database extended myhive_test5;

+---------------+----------------------+----------------------------------------------------+-------------+-------------+-----------------------------------+

| db_name | comment | location | owner_name | owner_type | parameters |

+---------------+----------------------+----------------------------------------------------+-------------+-------------+-----------------------------------+

| myhive_test5 | maggie???Hive?????5 | hdfs://maggie101:9000/user/hive/warehouse/myhive_test5.db | xiaofeng | USER | {date=2022-11-26, cretor=maggie} |

+---------------+----------------------+----------------------------------------------------+-------------+-------------+-----------------------------------+

1 row selected (0.036 seconds)

Note: the `?` characters in the comment column stand in for the Chinese characters of the original comment. This most likely means the metastore's MySQL tables are not stored with a UTF-8 character set.

0: jdbc:hive2://localhost:10000/>

mysql> desc dbs;

+-----------------+---------------+------+-----+---------+-------+

| Field | Type | Null | Key | Default | Extra |

+-----------------+---------------+------+-----+---------+-------+

| DB_ID | bigint(20) | NO | PRI | NULL | |

| DESC | varchar(4000) | YES | | NULL | |

| DB_LOCATION_URI | varchar(4000) | NO | | NULL | |

| NAME | varchar(128) | YES | MUL | NULL | |

| OWNER_NAME | varchar(128) | YES | | NULL | |

| OWNER_TYPE | varchar(10) | YES | | NULL | |

| CTLG_NAME | varchar(256) | NO | MUL | hive | |

+-----------------+---------------+------+-----+---------+-------+

7 rows in set (0.00 sec)

mysql> select * from dbs;

+-------+-----------------------+-----------------------------------------------------------+--------------+------------+------------+-----------+

| DB_ID | DESC | DB_LOCATION_URI | NAME | OWNER_NAME | OWNER_TYPE | CTLG_NAME |

+-------+-----------------------+-----------------------------------------------------------+--------------+------------+------------+-----------+

| 1 | Default Hive database | hdfs://maggie101:9000/user/hive/warehouse | default | public | ROLE | hive |

| 2 | NULL | hdfs://maggie101:9000/user/hive/warehouse/test.db | test | xiaofeng | USER | hive |

| 6 | NULL | hdfs://maggie101:9000/user/hive/warehouse/myhive_test1.db | myhive_test1 | xiaofeng | USER | hive |

| 7 | NULL | hdfs://maggie101:9000/user/hive/warehouse/myhive_test2.db | myhive_test2 | xiaofeng | USER | hive |

| 8 | NULL | hdfs://maggie101:9000/user/hive/myhive_test3 | myhive_test3 | xiaofeng | USER | hive |

| 9 | maggie???Hive????? | hdfs://maggie101:9000/user/hive/warehouse/myhive_test4.db | myhive_test4 | xiaofeng | USER | hive |

| 10 | maggie???Hive?????5 | hdfs://maggie101:9000/user/hive/warehouse/myhive_test5.db | myhive_test5 | xiaofeng | USER | hive |

+-------+-----------------------+-----------------------------------------------------------+--------------+------------+------------+-----------+

7 rows in set (0.00 sec)

mysql>

mysql> desc database_params ;

+-------------+---------------+------+-----+---------+-------+

| Field | Type | Null | Key | Default | Extra |

+-------------+---------------+------+-----+---------+-------+

| DB_ID | bigint(20) | NO | PRI | NULL | |

| PARAM_KEY | varchar(180) | NO | PRI | NULL | |

| PARAM_VALUE | varchar(4000) | YES | | NULL | |

+-------------+---------------+------+-----+---------+-------+

3 rows in set (0.00 sec)

mysql> select * from database_params ;

+-------+-----------+-------------+

| DB_ID | PARAM_KEY | PARAM_VALUE |

+-------+-----------+-------------+

| 10 | cretor | maggie |

| 10 | date | 2022-11-26 |

+-------+-----------+-------------+

2 rows in set (0.00 sec)
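The two metastore tables are linked by `DB_ID`: `dbs` holds one row per database, and `database_params` holds one row per DBPROPERTIES entry. The sketch below reproduces that relationship with Python's built-in `sqlite3` module instead of the real MySQL metastore. The table layout is reduced to the columns needed here, only two of the rows shown above are loaded, and `database_properties` is a hypothetical helper name.

```python
import sqlite3

# In-memory stand-in for the metastore; only the columns needed here are kept.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE dbs (DB_ID INTEGER PRIMARY KEY, NAME TEXT, DB_LOCATION_URI TEXT);
    CREATE TABLE database_params (
        DB_ID INTEGER, PARAM_KEY TEXT, PARAM_VALUE TEXT,
        PRIMARY KEY (DB_ID, PARAM_KEY)
    );
    """
)
conn.executemany(
    "INSERT INTO dbs VALUES (?, ?, ?)",
    [
        (6, "myhive_test1", "hdfs://maggie101:9000/user/hive/warehouse/myhive_test1.db"),
        (10, "myhive_test5", "hdfs://maggie101:9000/user/hive/warehouse/myhive_test5.db"),
    ],
)
conn.executemany(
    "INSERT INTO database_params VALUES (?, ?, ?)",
    [(10, "cretor", "maggie"), (10, "date", "2022-11-26")],
)


def database_properties(name: str) -> dict:
    """Look up a database's properties by joining the two tables on DB_ID."""
    rows = conn.execute(
        """
        SELECT p.PARAM_KEY, p.PARAM_VALUE
        FROM dbs AS d
        JOIN database_params AS p ON p.DB_ID = d.DB_ID
        WHERE d.NAME = ?
        ORDER BY p.PARAM_KEY
        """,
        (name,),
    ).fetchall()
    return dict(rows)


print(database_properties("myhive_test5"))  # {'cretor': 'maggie', 'date': '2022-11-26'}
print(database_properties("myhive_test1"))  # {}
```

A database created without `WITH DBPROPERTIES`, such as `myhive_test1`, simply has no rows in `database_params`, which is why its `parameters` column is empty in the `desc database` output.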

ALTER DATABASE myhive_test5 SET DBPROPERTIES ('updatetime'='30000101');

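DROP DATABASE pk_hivedfasdfafasdfdasfas; -- raises an error if the database does not exist

DROP DATABASE IF EXISTS pk_hivedfasdfafasdfdasfas; -- with IF EXISTS, no error is raised

DROP DATABASE IF EXISTS pk_hivedfasdfafasdfdasfas CASCADE; -- CASCADE also drops everything inside the database, so it is somewhat dangerous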
