Preface:

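I only got around to writing up this documentation long after the deployment, so please forgive any omissions. I hit quite a few pitfalls along the way, and for some of them I still have no clue; I would be grateful if more experienced folks could point me in the right direction.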

I. Environment Preparation

| Hostname | IP |
|---|---|
| k8s-master01 | 3.127.10.209 |
| k8s-master02 | 3.127.10.95 |
| k8s-master03 | 3.127.10.66 |
| k8s-node01 | 3.127.10.233 |
| k8s-node02 | 3.127.33.173 |
| harbor | 3.127.33.174 |

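1. Deploy NFS on every k8s node

The shared directory is `/home/k8s/elasticsearch/storage`. In this setup the NFS server is k8s-master02 (3.127.10.95, as configured in deployment.yaml below); every node that may run a pod using these volumes also needs the NFS client utilities so the kubelet can mount them.

2. Install the NFS provisioner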

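The image is `quay.io/external_storage/nfs-client-provisioner:latest`, pushed to the Harbor registry (3.127.33.174:8443) that deployment.yaml pulls from. This legacy provisioner relies on the `selfLink` field, which Kubernetes stopped populating by default in 1.20; on such clusters it fails with a "selfLink was empty" error, and the maintained replacement is nfs-subdir-external-provisioner.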

```yaml
# cat rbac.yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
```

```yaml
# cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: 3.127.33.174:8443/kubernetes/nfs-client-provisioner:latest
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs   # must match `provisioner` in es-storageclass.yaml
            - name: NFS_SERVER
              value: 3.127.10.95      # k8s-master02
            - name: NFS_PATH
              value: /home/k8s/elasticsearch/storage
      volumes:
        - name: nfs-client-root
          nfs:
            server: 3.127.10.95       # same server and path as NFS_SERVER / NFS_PATH
            path: /home/k8s/elasticsearch/storage
```

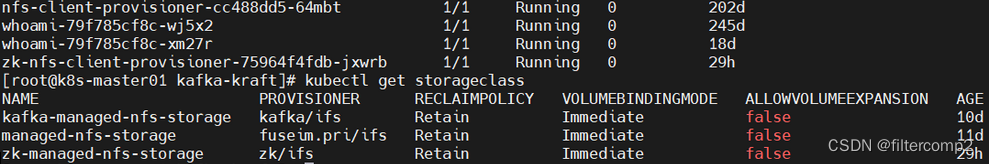

```yaml
# cat es-storageclass.yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: fuseim.pri/ifs # or choose another name; it must match PROVISIONER_NAME in the deployment's env
parameters:
  archiveOnDelete: "true"
reclaimPolicy: Retain
```
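How `archiveOnDelete` and `reclaimPolicy` interact is easy to misread. Below is a minimal Python model, based on my reading of the nfs-client-provisioner source rather than any official API, so check it against the version you actually run: each PV gets a directory named `<namespace>-<pvcName>-<pvName>` under NFS_PATH, and the provisioner only removes or archives it when the PV's reclaim policy is `Delete`. With `reclaimPolicy: Retain` as above, the directory stays in place and `archiveOnDelete` never comes into play. The function names and the PV name `pvc-1234` are hypothetical.

```python
import posixpath

# NFS_PATH from deployment.yaml (mounted inside the provisioner at /persistentvolumes).
NFS_PATH = "/home/k8s/elasticsearch/storage"
# Strings that Go's strconv.ParseBool reads as false (assumed parser for archiveOnDelete).
GO_FALSE = {"0", "f", "F", "false", "FALSE", "False"}


def volume_dir(namespace: str, pvc_name: str, pv_name: str) -> str:
    """Directory created on the NFS export for a newly provisioned PV."""
    return posixpath.join(NFS_PATH, "-".join([namespace, pvc_name, pv_name]))


def after_pvc_deleted(path: str, reclaim_policy: str, archive_on_delete: str = "true") -> str:
    """Describe what happens to a PV's directory after its PVC is deleted."""
    if reclaim_policy != "Delete":
        # Retain: the PV is only marked Released; the provisioner never touches the directory.
        return "kept: " + path
    if archive_on_delete in GO_FALSE:
        return "removed: " + path
    # Parameter absent or true: rename to archived-<dir> instead of deleting the data.
    archived = posixpath.join(posixpath.dirname(path), "archived-" + posixpath.basename(path))
    return "archived: " + archived


d = volume_dir("elk", "es-volume-es-cluster-0", "pvc-1234")  # pvc-1234 is a made-up PV name
print(d)  # /home/k8s/elasticsearch/storage/elk-es-volume-es-cluster-0-pvc-1234
print(after_pvc_deleted(d, "Retain"))  # the policy used in es-storageclass.yaml
print(after_pvc_deleted(d, "Delete"))
```

In practice this means that with `Retain`, deleting a claim leaves both the PV object (in `Released` state) and its NFS directory behind for manual cleanup.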

3. Deploy the ES cluster

```yaml
# cat es-cluster-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: es-svc
  namespace: elk
  labels:
    app: es-cluster-svc
spec:
  selector:
    app: es
  type: ClusterIP
  clusterIP: None   # headless: gives each StatefulSet pod a stable DNS name
  sessionAffinity: None
  ports:
    - name: outer-port
      port: 9200
      protocol: TCP
      targetPort: 9200
    - name: cluster-port
      port: 9300
      protocol: TCP
      targetPort: 9300
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: es-cluster
  namespace: elk
  labels:
    app: es-cluster
spec:
  podManagementPolicy: OrderedReady
  replicas: 3
  serviceName: es-svc
  selector:
    matchLabels:
      app: es
  template:
    metadata:
      labels:
        app: es
      namespace: elk
    spec:
      containers:
        - name: es-cluster
          image: 3.127.33.174:8443/elk/elasticsearch:8.1.0
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              memory: "16Gi"
              cpu: "200m"
          ports:
            - name: outer-port
              containerPort: 9200
              protocol: TCP
            - name: cluster-port
              containerPort: 9300
              protocol: TCP
          env:
            - name: cluster.name
              value: "es-cluster"
            - name: node.name
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            # legacy (pre-7.x) setting, replaced by discovery.seed_hosts:
            # - name: discovery.zen.ping.unicast.hosts
            - name: discovery.seed_hosts
              value: "es-cluster-0.es-svc,es-cluster-1.es-svc,es-cluster-2.es-svc"
            # legacy (pre-7.x) setting, ignored since 7.x:
            # - name: discovery.zen.minimum_master_nodes
            #   value: "2"
            - name: cluster.initial_master_nodes
              value: "es-cluster-0"
            - name: ES_JAVA_OPTS
              value: "-Xms1024m -Xmx1024m"
            - name: xpack.security.enabled
              value: "false"
          volumeMounts:
            - name: es-volume
              mountPath: /usr/share/elasticsearch/data
      initContainers:
        - name: fix-permissions
          image: 3.127.33.174:8443/elk/busybox:latest
          imagePullPolicy: IfNotPresent
          # set the owner to uid:gid 1000:1000 (Elasticsearch runs as uid 1000)
          command: ["sh", "-c", "chown -R 1000:1000 /usr/share/elasticsearch/data"]
          securityContext:
            privileged: true
          volumeMounts:
            - name: es-volume
              mountPath: /usr/share/elasticsearch/data
        - name: increase-vm-max-map
          image: 3.127.33.174:8443/elk/busybox:latest
          imagePullPolicy: IfNotPresent
          # ES needs vm.max_map_count >= 262144; this sysctl is node-wide, hence privileged
          command: ["sysctl", "-w", "vm.max_map_count=655360"]
          securityContext:
            privileged: true
        - name: increase-ulimit
          image: 3.127.33.174:8443/elk/busybox:latest
          imagePullPolicy: IfNotPresent
          # note: ulimit only affects this init container's own shell, not the ES container;
          # the ES container's open-file limit comes from the container runtime
          command: ["sh", "-c", "ulimit -n 65536"]
          securityContext:
            privileged: true
  volumeClaimTemplates:
    - metadata:
        name: es-volume
        namespace: elk
      spec:
        accessModes:
          - ReadWriteMany
        resources:
          requests:
            storage: "150Gi"
        storageClassName: managed-nfs-storage
```
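The StatefulSet pairs a 16Gi memory limit with a 1 GiB heap. Elastic's guidance is to set `-Xms` and `-Xmx` to the same value (the heap-size bootstrap check rejects unequal values once a node binds to a non-loopback address) and to keep the heap at no more than 50% of the available memory, leaving the rest to the OS file cache. A small Python sketch for sanity-checking those two rules; the helper names are hypothetical, and the parser only understands the suffixes used in this article (Ki/Mi/Gi for Kubernetes, k/m/g for the JVM).

```python
import re

# Binary multipliers for the Kubernetes quantity suffixes used in this article.
K8S_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}
# The JVM's -Xms/-Xmx suffixes (k, m, g; case-insensitive) are binary as well.
JVM_UNITS = {"k": 2**10, "m": 2**20, "g": 2**30}


def k8s_bytes(quantity: str) -> int:
    """Parse a Kubernetes memory quantity such as "16Gi" (Ki/Mi/Gi suffixes only)."""
    m = re.fullmatch(r"(\d+)(Ki|Mi|Gi)", quantity)
    if m is None:
        raise ValueError(f"unsupported quantity: {quantity!r}")
    return int(m.group(1)) * K8S_UNITS[m.group(2)]


def jvm_bytes(java_opts: str, flag: str) -> int:
    """Extract -Xms or -Xmx (e.g. "-Xmx1024m") from an ES_JAVA_OPTS string."""
    m = re.search(rf"-{flag}(\d+)([kKmMgG])\b", java_opts)
    if m is None:
        raise ValueError(f"-{flag} with a k/m/g suffix not found in {java_opts!r}")
    return int(m.group(1)) * JVM_UNITS[m.group(2).lower()]


def check_heap(java_opts: str, memory_limit: str) -> list:
    """Return the rules the settings break; an empty list means both rules hold."""
    xms, xmx = jvm_bytes(java_opts, "Xms"), jvm_bytes(java_opts, "Xmx")
    problems = []
    if xms != xmx:
        problems.append("Xms and Xmx differ")
    if xmx > k8s_bytes(memory_limit) // 2:
        problems.append("heap is more than 50% of the memory limit")
    return problems


print(check_heap("-Xms1024m -Xmx1024m", "16Gi"))  # [] for the values in the StatefulSet above
print(check_heap("-Xms1g -Xmx12g", "16Gi"))  # ['Xms and Xmx differ', 'heap is more than 50% of the memory limit']
```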

```shell
kubectl get pods -n elk -o wide
```

At this point the ES cluster is deployed and running normally.
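The `discovery.seed_hosts` value and the claim names both follow from StatefulSet naming: pods are `<statefulset>-<ordinal>`, the headless Service `es-svc` gives each pod the DNS name `<pod>.es-svc` (fully qualified as `<pod>.es-svc.elk.svc.cluster.local`, assuming the default cluster domain), and each claim from `volumeClaimTemplates` is named `<template>-<pod>`. The Python sketch below derives these names; `statefulset_names` is a hypothetical helper. The same pod list is also what Elastic's docs suggest for `cluster.initial_master_nodes` (all initial master-eligible nodes), whereas the manifest above lists only `es-cluster-0`.

```python
def statefulset_names(sts: str, svc: str, template: str, replicas: int,
                      namespace: str = "elk", domain: str = "cluster.local") -> dict:
    """Derive the names Kubernetes gives to a StatefulSet's pods, DNS records and PVCs."""
    pods = [f"{sts}-{i}" for i in range(replicas)]  # <statefulset>-<ordinal>
    return {
        "pods": pods,
        # <pod>.<headless service>; resolves from pods in the same namespace via the DNS search path
        "seed_hosts": ",".join(f"{pod}.{svc}" for pod in pods),
        "fqdns": [f"{pod}.{svc}.{namespace}.svc.{domain}" for pod in pods],
        # one PVC per pod: <volumeClaimTemplate name>-<pod name>
        "pvcs": [f"{template}-{pod}" for pod in pods],
    }


names = statefulset_names("es-cluster", "es-svc", "es-volume", replicas=3)
print(names["seed_hosts"])  # es-cluster-0.es-svc,es-cluster-1.es-svc,es-cluster-2.es-svc
print(names["pvcs"])        # ['es-volume-es-cluster-0', 'es-volume-es-cluster-1', 'es-volume-es-cluster-2']
print(names["fqdns"][0])    # es-cluster-0.es-svc.elk.svc.cluster.local
```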

4. Deploy Kibana

```yaml
# cat kibana.yaml
apiVersion: v1
kind: Service
metadata:
  name: kibana-svc
  namespace: elk
  labels:
    app: kibana-svc
spec:
  selector:
    app: kibana-8.1.0
  ports:
    - name: kibana-port
      port: 5601
      protocol: TCP
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana-deployment
  namespace: elk
  labels:
    app: kibana-dep
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kibana-8.1.0
  template:
    metadata:
      name: kibana
      labels:
        app: kibana-8.1.0
    spec:
      containers:
        - name: kibana
          image: 3.127.33.174:8443/elk/kibana:8.1.0
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              cpu: "1000m"
            requests:
              cpu: "200m"
          ports:
            - name: kibana-web
              containerPort: 5601
              protocol: TCP
          env:
            - name: ELASTICSEARCH_HOSTS
              value: http://es-svc:9200
          readinessProbe:
            initialDelaySeconds: 10
            periodSeconds: 10
            httpGet:
              port: 5601
            timeoutSeconds: 100
---
# Deploy an Ingress so that Kibana can be reached by domain name
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: kibana-ingress
  namespace: elk
  labels:
    app: kibana-ingress
spec:
  ingressClassName: nginx
  defaultBackend:
    service:
      name: kibana-svc
      port:
        name: kibana-port
  rules:
    - host: jszw.kibana.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: kibana-svc
                port:
                  name: kibana-port
```
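In this Ingress, any path under jszw.kibana.com matches the `/` Prefix rule, and per the Ingress API semantics, requests that match no rule (for example a different Host header) go to `defaultBackend`, which here is also kibana-svc. A simplified Python model of that routing, with exact host matching only (no wildcard hosts) and hypothetical names; the real ingress-nginx controller adds its own behavior on top of these semantics.

```python
# Rules copied from kibana-ingress; each backend is "<service>:<port name>".
RULES = [("jszw.kibana.com", "/", "kibana-svc:kibana-port")]
DEFAULT_BACKEND = "kibana-svc:kibana-port"


def prefix_match(rule_path: str, request_path: str) -> bool:
    """pathType Prefix compares whole path elements: /app matches /app/home but not /apps."""
    rule = [part for part in rule_path.split("/") if part]
    request = [part for part in request_path.split("/") if part]
    return request[:len(rule)] == rule


def route(host: str, path: str) -> tuple:
    """Return (what matched, backend) for a request under simplified Ingress semantics."""
    for rule_host, rule_path, backend in RULES:
        if host == rule_host and prefix_match(rule_path, path):
            return ("rule", backend)
    # Requests that match no rule fall through to spec.defaultBackend.
    return ("defaultBackend", DEFAULT_BACKEND)


print(route("jszw.kibana.com", "/app/home"))  # ('rule', 'kibana-svc:kibana-port')
print(route("other.example.com", "/"))         # ('defaultBackend', 'kibana-svc:kibana-port')
```

Because both paths end at kibana-svc, the `defaultBackend` mainly matters if you later add rules for other hosts and do not want unmatched traffic to reach Kibana.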

```shell
kubectl get pods -n elk -o wide
```
