
Main steps:

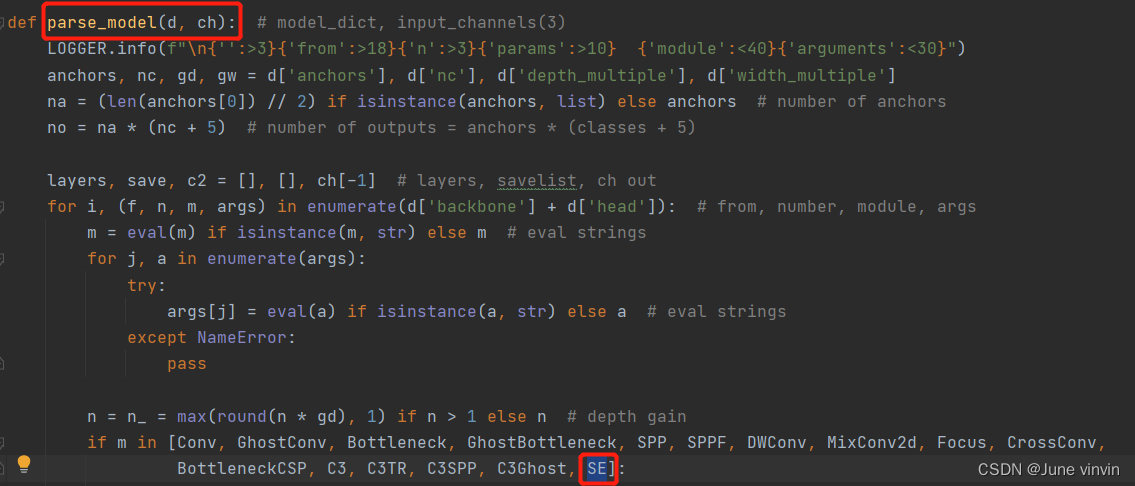

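(1) Register the attention module in models/common.py

(2) Add the attention module to the parse_model function in models/yolo.py

(3) Modify the configuration file yolov5s.yaml

(4) Run yolo.py to verify

All of the attention modules are added in a similar way; for each of them, follow the changes shown for SE.

Complete code for the attention modules added in this article: https://github.com/double-vin/yolov5_attention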

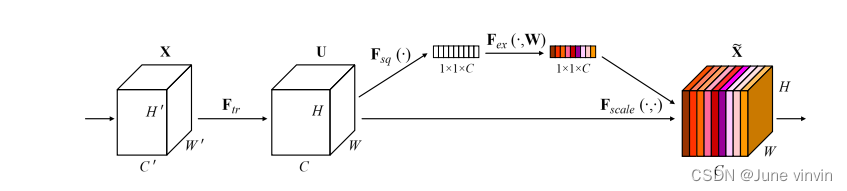

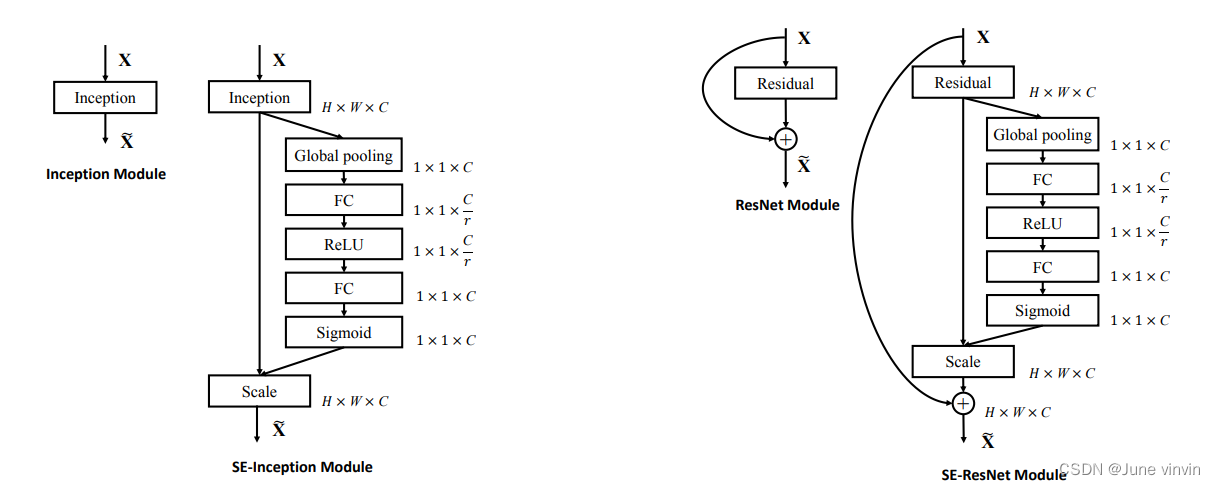

Squeeze-and-Excitation Networks

https://github.com/hujie-frank/SENet

Register the SE module in models/common.py:

    class SE(nn.Module):
        # Squeeze-and-Excitation channel attention; c2 is accepted but not used
        def __init__(self, c1, c2, ratio=16):
            super(SE, self).__init__()
            # squeeze: global average pooling to c*1*1
            self.avgpool = nn.AdaptiveAvgPool2d(1)
            # excitation: two bias-free linear layers with a c1 // ratio bottleneck
            self.l1 = nn.Linear(c1, c1 // ratio, bias=False)
            self.relu = nn.ReLU(inplace=True)
            self.l2 = nn.Linear(c1 // ratio, c1, bias=False)
            self.sig = nn.Sigmoid()

        def forward(self, x):
            b, c, _, _ = x.size()
            y = self.avgpool(x).view(b, c)  # b*c
            y = self.l1(y)
            y = self.relu(y)
            y = self.l2(y)
            y = self.sig(y)
            y = y.view(b, c, 1, 1)  # per-channel weights in (0, 1)
            return x * y.expand_as(x)
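The `ratio` argument controls how narrow the bottleneck between the two linear layers is. As a rough illustration, the following sketch computes the hidden width and the number of weights for a given channel count; the helper name `se_fc_sizes` is made up for this example and is not part of YOLOv5 or of the linked repository.

```python
from typing import Tuple


def se_fc_sizes(c1: int, ratio: int = 16) -> Tuple[int, int]:
    """Return (hidden width, total weights) of the two bias-free Linear layers in SE."""
    hidden = c1 // ratio  # same integer division as nn.Linear(c1, c1 // ratio)
    params = c1 * hidden + hidden * c1  # l1 weights + l2 weights, no biases
    return hidden, params


if __name__ == "__main__":
    for channels in (64, 256, 512):
        print(channels, se_fc_sizes(channels))
```

Because the width is computed with integer division, a channel count smaller than `ratio` gives a hidden width of 0, so `ratio` probably needs to be lowered for very thin layers.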

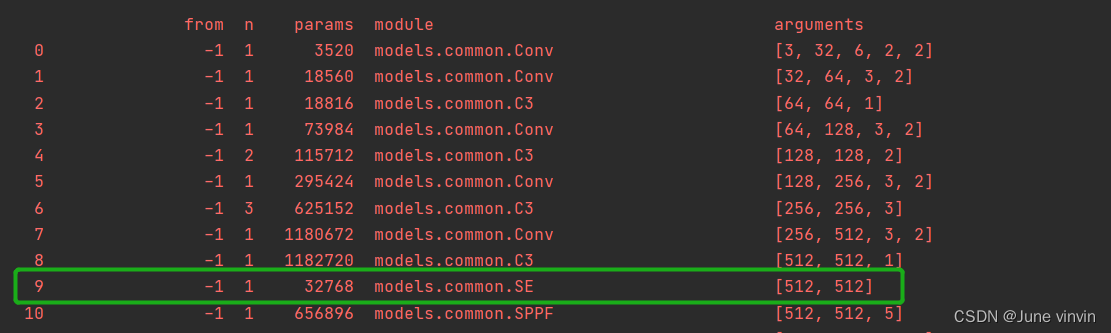

Add the SE module in the parse_model function of models/yolo.py

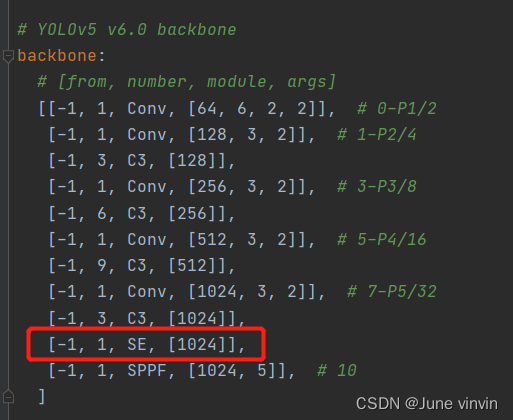

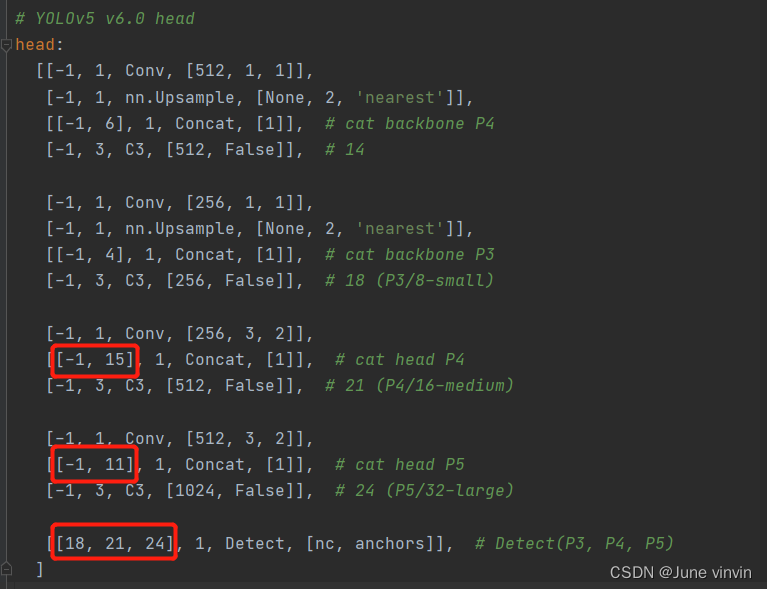

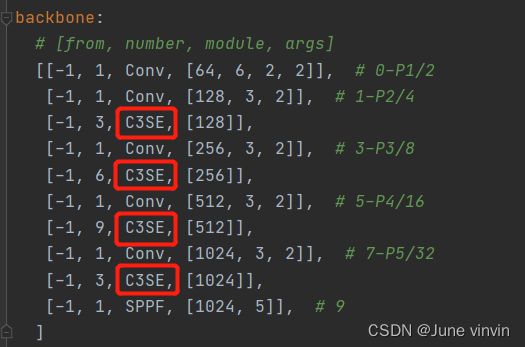

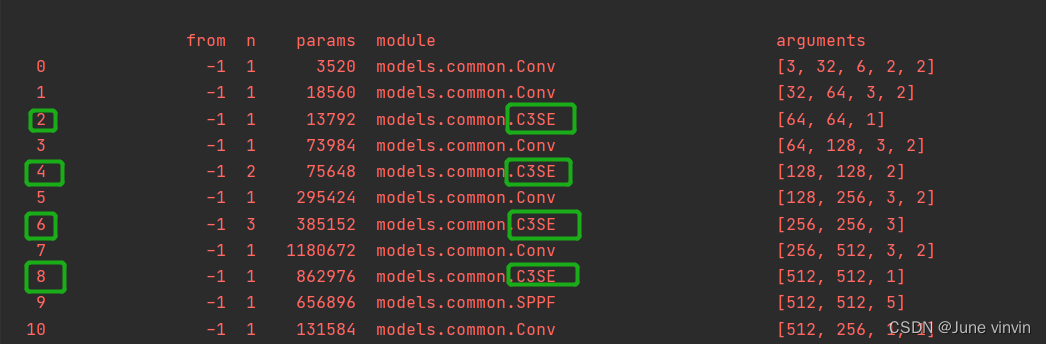

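Modify yolov5s.yaml.

Inserting the SE layer shifts the index of every later layer by one, so the from values of the two Concat layers change from [-1, 14] and [-1, 10] to [-1, 15] and [-1, 11], and the from value of Detect changes from [17, 20, 23] to [18, 21, 24].

Run yolo.py to verify.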
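The index change described above for the Concat and Detect layers can be checked with a small helper. This is only a sketch: the function name `shift_from` is invented here, and the insertion position `INSERT_AT = 9` is an assumption for illustration, since the actual position is shown only in the screenshots.

```python
from typing import List

INSERT_AT = 9  # hypothetical index at which the new attention layer is inserted


def shift_from(from_idx: List[int], insert_at: int) -> List[int]:
    """Shift absolute layer indices at or after insert_at by one.

    Negative values such as -1 are relative ("previous layer") and stay unchanged.
    """
    return [i + 1 if i >= insert_at else i for i in from_idx]


if __name__ == "__main__":
    print(shift_from([-1, 14], INSERT_AT))
    print(shift_from([-1, 10], INSERT_AT))
    print(shift_from([17, 20, 23], INSERT_AT))
```

Any insertion position at or before layer 10 gives the same shifted values, which is consistent with the numbers listed above.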

C3SE

Register the C3SE module in models/common.py:

    class SEBottleneck(nn.Module):
        # Standard bottleneck with an SE block applied to the cv2 output
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5, ratio=16):  # ch_in, ch_out, shortcut, groups, expansion, SE ratio
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c_, c2, 3, 1, g=g)
            self.add = shortcut and c1 == c2
            # inlined SE block; it acts on the cv2 output, so it is sized by c2
            self.avgpool = nn.AdaptiveAvgPool2d(1)
            self.l1 = nn.Linear(c2, c2 // ratio, bias=False)
            self.relu = nn.ReLU(inplace=True)
            self.l2 = nn.Linear(c2 // ratio, c2, bias=False)
            self.sig = nn.Sigmoid()

        def forward(self, x):
            x1 = self.cv2(self.cv1(x))
            b, c, _, _ = x1.size()
            y = self.avgpool(x1).view(b, c)
            y = self.l1(y)
            y = self.relu(y)
            y = self.l2(y)
            y = self.sig(y)
            y = y.view(b, c, 1, 1)
            out = x1 * y.expand_as(x1)
            return x + out if self.add else out

    class C3SE(C3):
        # C3 module with SEBottleneck()
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
            super().__init__(c1, c2, n, shortcut, g, e)
            c_ = int(c2 * e)  # hidden channels
            self.m = nn.Sequential(*(SEBottleneck(c_, c_, shortcut) for _ in range(n)))

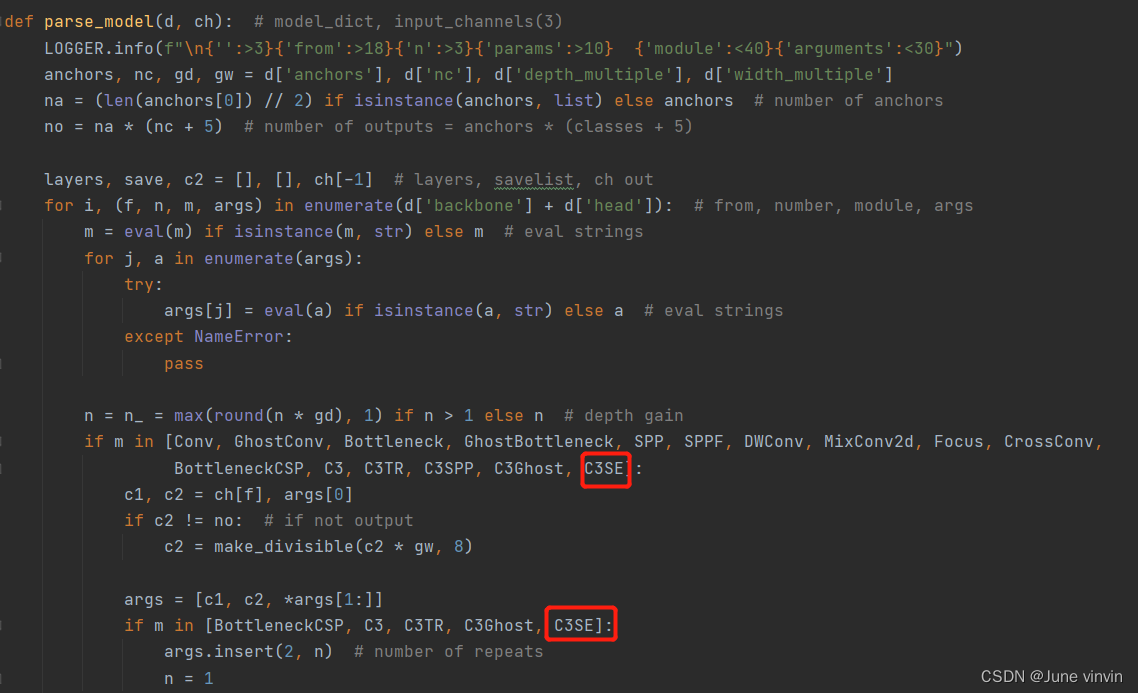

Add the C3SE module in the parse_model function of models/yolo.py

Modify yolov5s.yaml.

Run yolo.py to verify.

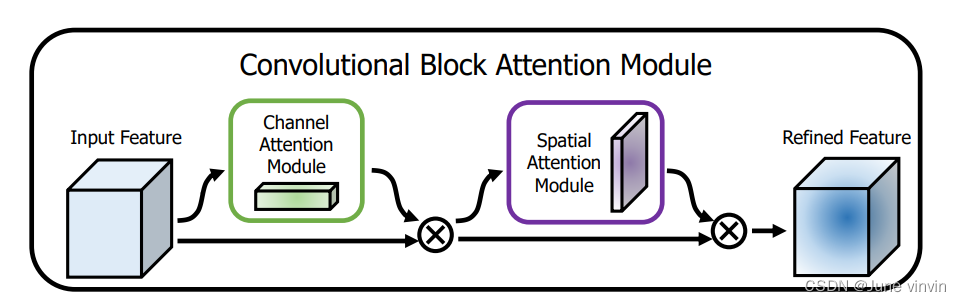

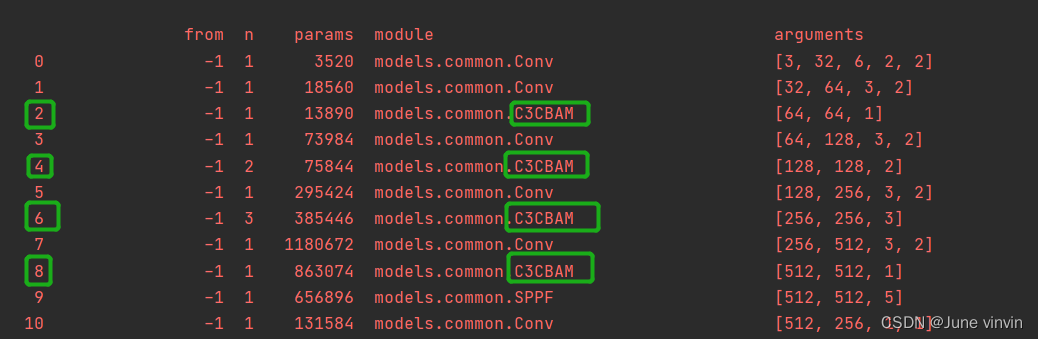

《CBAM: Convolutional Block Attention Module》

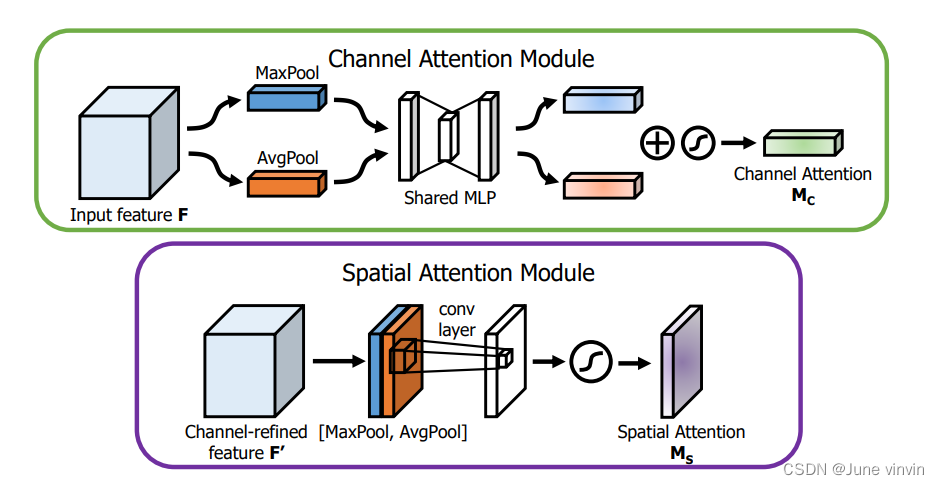

As with SE, register the following classes in models/common.py:

    class ChannelAttention(nn.Module):
        def __init__(self, in_planes, ratio=16):
            super(ChannelAttention, self).__init__()
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.max_pool = nn.AdaptiveMaxPool2d(1)
            # shared MLP implemented as two 1x1 convolutions
            self.f1 = nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False)
            self.relu = nn.ReLU()
            self.f2 = nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            avg_out = self.f2(self.relu(self.f1(self.avg_pool(x))))
            max_out = self.f2(self.relu(self.f1(self.max_pool(x))))
            out = self.sigmoid(avg_out + max_out)  # c*1*1
            return out

    class SpatialAttention(nn.Module):
        def __init__(self, kernel_size=7):
            super(SpatialAttention, self).__init__()
            assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
            padding = 3 if kernel_size == 7 else 1
            # output size = (feature map size - kernel size + 2 * padding) / stride + 1
            self.conv = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            avg_out = torch.mean(x, dim=1, keepdim=True)  # 1*h*w
            max_out, _ = torch.max(x, dim=1, keepdim=True)  # 1*h*w
            x = torch.cat([avg_out, max_out], dim=1)  # 2*h*w
            x = self.conv(x)  # 1*h*w
            return self.sigmoid(x)
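The padding values in SpatialAttention follow from the output-size formula quoted in the code comment. A minimal worked example, assuming stride 1 and a hypothetical 80x80 feature map (the helper name `conv_out_size` is illustrative only):

```python
def conv_out_size(size: int, kernel_size: int, padding: int, stride: int = 1) -> int:
    """Output length of a convolution along one spatial dimension."""
    return (size - kernel_size + 2 * padding) // stride + 1


if __name__ == "__main__":
    # kernel 7 with padding 3 and kernel 3 with padding 1 both keep the size unchanged
    print(conv_out_size(80, kernel_size=7, padding=3))
    print(conv_out_size(80, kernel_size=3, padding=1))
    # a mismatched padding would shrink the attention map
    print(conv_out_size(80, kernel_size=7, padding=1))
```

Keeping the spatial size unchanged matters here because the 1*h*w attention map is multiplied element-wise with the c*h*w feature map.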

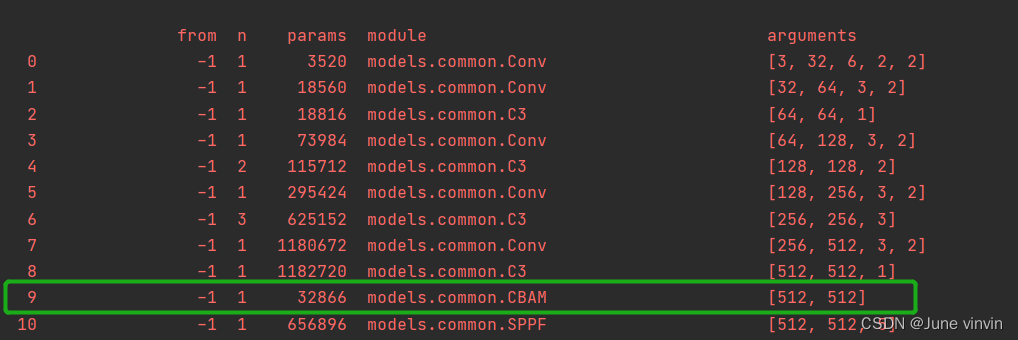

    class CBAM(nn.Module):
        # Channel attention followed by spatial attention; c2 is accepted but not used
        def __init__(self, c1, c2, ratio=16, kernel_size=7):  # ch_in, ch_out, reduction ratio, spatial kernel size
            super(CBAM, self).__init__()
            self.channel_attention = ChannelAttention(c1, ratio)
            self.spatial_attention = SpatialAttention(kernel_size)

        def forward(self, x):
            # c*1*1 weights broadcast over c*h*w
            out = self.channel_attention(x) * x
            # 1*h*w weights broadcast over c*h*w
            out = self.spatial_attention(out) * out
            return out

    class CBAMBottleneck(nn.Module):
        # Standard bottleneck with CBAM applied to the cv2 output
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5, ratio=16, kernel_size=7):  # ch_in, ch_out, shortcut, groups, expansion, reduction ratio, spatial kernel size
            super(CBAMBottleneck, self).__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c_, c2, 3, 1, g=g)
            self.add = shortcut and c1 == c2
            # inlined CBAM, sized by c2 because it acts on the cv2 output
            self.channel_attention = ChannelAttention(c2, ratio)
            self.spatial_attention = SpatialAttention(kernel_size)

        def forward(self, x):
            x1 = self.cv2(self.cv1(x))
            out = self.channel_attention(x1) * x1
            out = self.spatial_attention(out) * out
            return x + out if self.add else out

    class C3CBAM(C3):
        # C3 module with CBAMBottleneck()
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
            super().__init__(c1, c2, n, shortcut, g, e)
            c_ = int(c2 * e)  # hidden channels
            self.m = nn.Sequential(*(CBAMBottleneck(c_, c_, shortcut) for _ in range(n)))

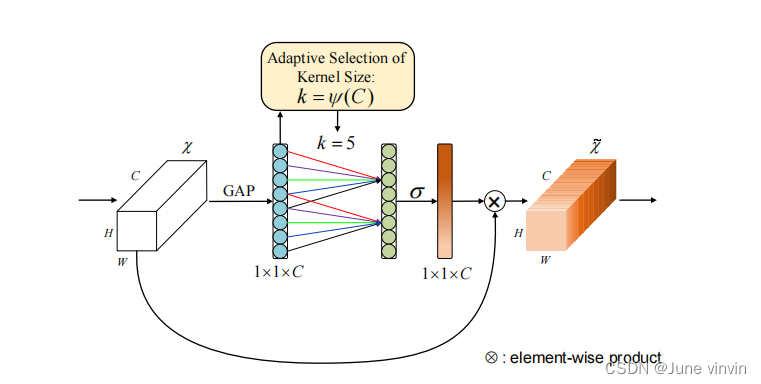

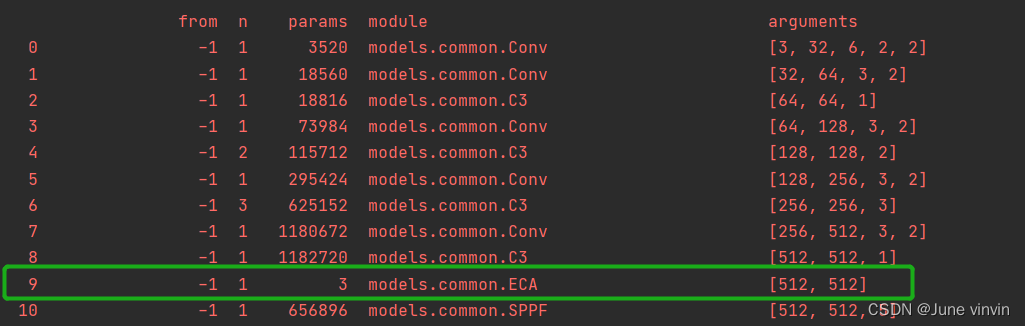

《ECA-Net: Efficient Channel Attention for Deep Convolutional Neural Networks》

https://github.com/BangguWu/ECANet

As with SE, register the following classes in models/common.py:

    class ECA(nn.Module):
        """Constructs an ECA module.

        Args:
            c1: Number of channels of the input feature map
            c2: Number of output channels (accepted but not used)
            k_size: Kernel size of the 1D convolution across channels
        """

        def __init__(self, c1, c2, k_size=3):
            super(ECA, self).__init__()
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.conv = nn.Conv1d(1, 1, kernel_size=k_size, padding=(k_size - 1) // 2, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            # feature descriptor on the global spatial information: b*c*1*1
            y = self.avg_pool(x)
            # b*c*1*1 -> b*c*1 -> b*1*c, 1D conv across channels, then back to b*c*1*1
            y = self.conv(y.squeeze(-1).transpose(-1, -2)).transpose(-1, -2).unsqueeze(-1)
            y = self.sigmoid(y)
            return x * y.expand_as(x)

    class ECABottleneck(nn.Module):
        # Standard bottleneck with ECA applied to the cv2 output; ratio is accepted but not used
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5, ratio=16, k_size=3):  # ch_in, ch_out, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c_, c2, 3, 1, g=g)
            self.add = shortcut and c1 == c2
            # inlined ECA block
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.conv = nn.Conv1d(1, 1, kernel_size=k_size, padding=(k_size - 1) // 2, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            x1 = self.cv2(self.cv1(x))
            y = self.avg_pool(x1)
            y = self.conv(y.squeeze(-1).transpose(-1, -2)).transpose(-1, -2).unsqueeze(-1)
            y = self.sigmoid(y)
            out = x1 * y.expand_as(x1)
            return x + out if self.add else out
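The ECA classes above use a fixed `k_size=3`, whereas the ECA-Net paper chooses the kernel size from the channel count. A minimal sketch of that rule, assuming the paper's defaults `gamma=2` and `b=1`; the function name `eca_kernel_size` is illustrative and does not appear in the code above.

```python
import math


def eca_kernel_size(channels: int, gamma: int = 2, b: int = 1) -> int:
    """Nearest odd kernel size derived from log2 of the channel count."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 else t + 1  # force an odd size so the padding stays symmetric


if __name__ == "__main__":
    for c in (64, 128, 256, 512):
        print(c, eca_kernel_size(c))
```

If this rule were adopted, the result could be passed as `k_size` when constructing the module; whether it improves results over the fixed value is not covered by the source.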

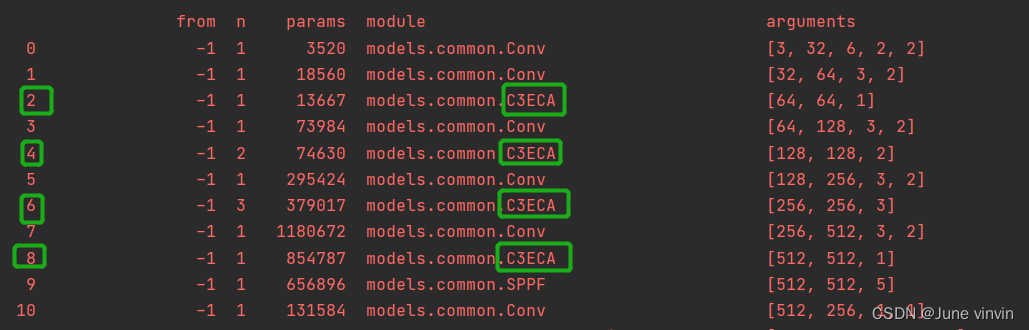

    class C3ECA(C3):
        # C3 module with ECABottleneck()
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
            super().__init__(c1, c2, n, shortcut, g, e)
            c_ = int(c2 * e)  # hidden channels
            self.m = nn.Sequential(*(ECABottleneck(c_, c_, shortcut) for _ in range(n)))

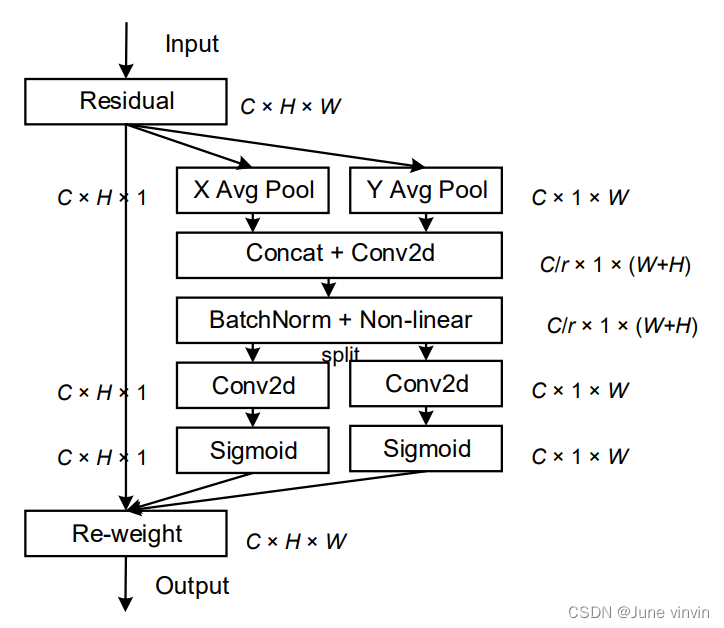

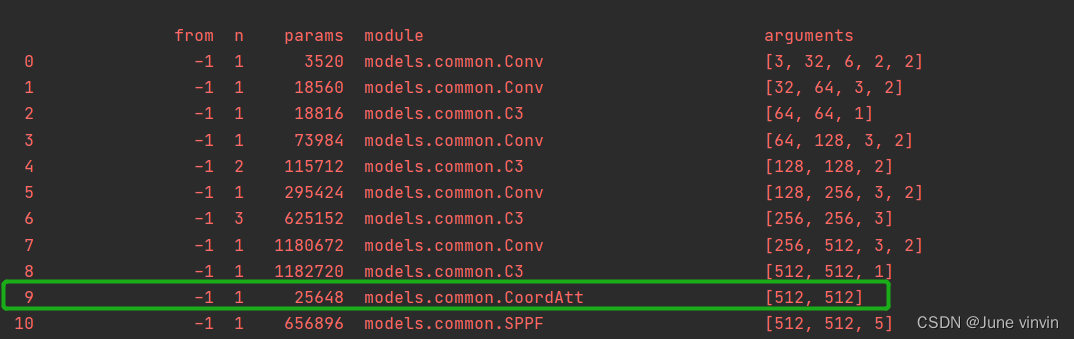

Coordinate Attention for Efficient Mobile Network Design

https://github.com/Andrew-Qibin/CoordAttention

As with SE, register the following classes in models/common.py:

    class h_sigmoid(nn.Module):
        def __init__(self, inplace=True):
            super(h_sigmoid, self).__init__()
            self.relu = nn.ReLU6(inplace=inplace)

        def forward(self, x):
            return self.relu(x + 3) / 6

    class h_swish(nn.Module):
        def __init__(self, inplace=True):
            super(h_swish, self).__init__()
            self.sigmoid = h_sigmoid(inplace=inplace)

        def forward(self, x):
            return x * self.sigmoid(x)

    class CoordAtt(nn.Module):
        def __init__(self, inp, oup, reduction=32):
            super(CoordAtt, self).__init__()
            self.pool_h = nn.AdaptiveAvgPool2d((None, 1))  # average over width
            self.pool_w = nn.AdaptiveAvgPool2d((1, None))  # average over height
            mip = max(8, inp // reduction)
            self.conv1 = nn.Conv2d(inp, mip, kernel_size=1, stride=1, padding=0)
            self.bn1 = nn.BatchNorm2d(mip)
            self.act = h_swish()
            self.conv_h = nn.Conv2d(mip, oup, kernel_size=1, stride=1, padding=0)
            self.conv_w = nn.Conv2d(mip, oup, kernel_size=1, stride=1, padding=0)

        def forward(self, x):
            identity = x
            n, c, h, w = x.size()
            x_h = self.pool_h(x)  # c*h*1
            x_w = self.pool_w(x).permute(0, 1, 3, 2)  # c*1*w -> c*w*1
            y = torch.cat([x_h, x_w], dim=2)  # c*(h+w)*1
            y = self.conv1(y)
            y = self.bn1(y)
            y = self.act(y)
            x_h, x_w = torch.split(y, [h, w], dim=2)
            x_w = x_w.permute(0, 1, 3, 2)  # back to mip*1*w
            a_h = self.conv_h(x_h).sigmoid()  # oup*h*1
            a_w = self.conv_w(x_w).sigmoid()  # oup*1*w
            out = identity * a_w * a_h
            return out

    class CABottleneck(nn.Module):
        # Standard bottleneck with coordinate attention applied to the cv2 output
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5, ratio=32):  # ch_in, ch_out, shortcut, groups, expansion, reduction
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c_, c2, 3, 1, g=g)
            self.add = shortcut and c1 == c2
            # inlined CoordAtt; it acts on the cv2 output, so it is sized by c2
            self.pool_h = nn.AdaptiveAvgPool2d((None, 1))
            self.pool_w = nn.AdaptiveAvgPool2d((1, None))
            mip = max(8, c2 // ratio)
            self.conv1 = nn.Conv2d(c2, mip, kernel_size=1, stride=1, padding=0)
            self.bn1 = nn.BatchNorm2d(mip)
            self.act = h_swish()
            self.conv_h = nn.Conv2d(mip, c2, kernel_size=1, stride=1, padding=0)
            self.conv_w = nn.Conv2d(mip, c2, kernel_size=1, stride=1, padding=0)

        def forward(self, x):
            x1 = self.cv2(self.cv1(x))
            n, c, h, w = x1.size()
            x_h = self.pool_h(x1)  # c*h*1
            x_w = self.pool_w(x1).permute(0, 1, 3, 2)  # c*1*w -> c*w*1
            y = torch.cat([x_h, x_w], dim=2)  # c*(h+w)*1
            y = self.conv1(y)
            y = self.bn1(y)
            y = self.act(y)
            x_h, x_w = torch.split(y, [h, w], dim=2)
            x_w = x_w.permute(0, 1, 3, 2)
            a_h = self.conv_h(x_h).sigmoid()
            a_w = self.conv_w(x_w).sigmoid()
            out = x1 * a_w * a_h
            return x + out if self.add else out

    class C3CA(C3):
        # C3 module with CABottleneck()
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
            super().__init__(c1, c2, n, shortcut, g, e)
            c_ = int(c2 * e)  # hidden channels
            self.m = nn.Sequential(*(CABottleneck(c_, c_, shortcut) for _ in range(n)))
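The tensor shapes inside coordinate attention are easy to lose track of because of the permutes. The following sketch traces them without PyTorch, assuming the input and output channel counts are equal (as they are in C3CA above); the function name `coordatt_shapes` and the dictionary keys are made up for this illustration.

```python
from typing import Dict, Tuple

Shape = Tuple[int, int, int, int]


def coordatt_shapes(n: int, c: int, h: int, w: int, reduction: int = 32) -> Dict[str, Shape]:
    """Trace (batch, channels, height, width) through the CoordAtt forward pass."""
    mip = max(8, c // reduction)  # reduced channel count, never below 8
    return {
        "x_h": (n, c, h, 1),      # pooled over width
        "x_w": (n, c, w, 1),      # pooled over height, then permuted
        "y": (n, mip, h + w, 1),  # concatenated along dim 2, after conv1
        "a_h": (n, c, h, 1),      # attention along height
        "a_w": (n, c, 1, w),      # attention along width
        "out": (n, c, h, w),      # input * a_w * a_h via broadcasting
    }


if __name__ == "__main__":
    for name, shape in coordatt_shapes(1, 64, 20, 20).items():
        print(name, shape)
```

For 64 channels the reduced width is clamped to 8 rather than 64 // 32 = 2, which is the purpose of the `max(8, ...)` in the module.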
