This post simulates writing student grade records into Elasticsearch. Each record holds a name, sex, subject, and grade.

Example 1 (writes 10,000 × 1,000 = 10 million documents in one run; took about 5,100 seconds in my own test):

from elasticsearch import Elasticsearch
from elasticsearch import helpers
import random
import time

es = Elasticsearch(hosts='http://127.0.0.1:9200')

names = ['刘一', '陈二', '张三', '李四', '王五', '赵六', '孙七', '周八', '吴九', '郑十']
sexs = ['男', '女']
subjects = ['语文', '数学', '英语', '生物', '地理']
grades = [85, 77, 96, 74, 85, 69, 84, 59, 67, 69, 86, 96, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86]

start = time.time()

# Bulk-write to Elasticsearch: 1,000 batches of 10,000 documents each
for j in range(1000):
    print(j)  # progress: index of the current batch
    action = [
        {
            "_index": "grade",
            "_type": "doc",  # mapping types are deprecated in Elasticsearch 7 and removed in 8
            "_id": i,
            "_source": {
                "id": i,
                "name": random.choice(names),
                "sex": random.choice(sexs),
                "subject": random.choice(subjects),
                "grade": random.choice(grades)
            }
        } for i in range(10000 * j, 10000 * j + 10000)
    ]
    helpers.bulk(es, action)

end = time.time()
print('Elapsed time:', end - start)

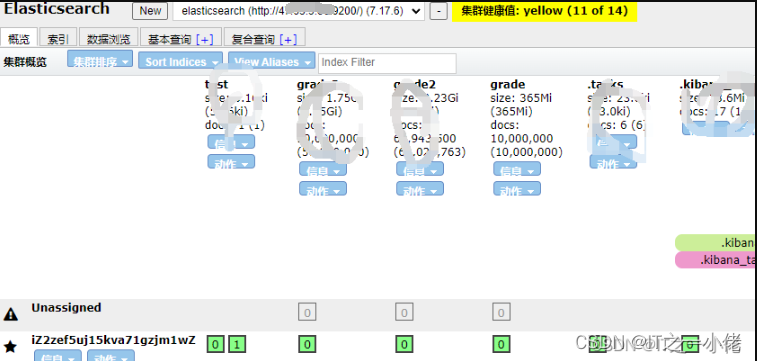

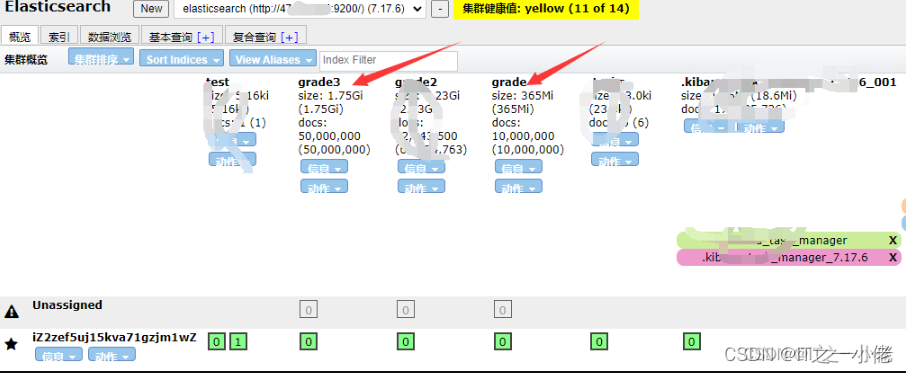

As shown in elasticsearch-head:

Example 2 (writes 10,000 × 5,000 = 50 million documents in one run; took about 23,000 seconds in my own test):

from elasticsearch import Elasticsearch
from elasticsearch import helpers
import random
import time

es = Elasticsearch(hosts='http://127.0.0.1:9200')

names = ['刘一', '陈二', '张三', '李四', '王五', '赵六', '孙七', '周八', '吴九', '郑十']
sexs = ['男', '女']
subjects = ['语文', '数学', '英语', '生物', '地理']
grades = [85, 77, 96, 74, 85, 69, 84, 59, 67, 69, 86, 96, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86]

start = time.time()

# Bulk-write to Elasticsearch: 5,000 batches of 10,000 documents each
for j in range(5000):
    print(j)  # progress: index of the current batch
    action = [
        {
            "_index": "grade3",
            "_type": "doc",  # mapping types are deprecated in Elasticsearch 7 and removed in 8
            "_id": i,
            "_source": {
                "id": i,
                "name": random.choice(names),
                "sex": random.choice(sexs),
                "subject": random.choice(subjects),
                "grade": random.choice(grades)
            }
        } for i in range(10000 * j, 10000 * j + 10000)
    ]
    helpers.bulk(es, action)

end = time.time()
print('Elapsed time:', end - start)

Example 3 (writes 10,000 × 9,205 = 92.05 million documents in one run; the run took too long to report a time):

from elasticsearch import Elasticsearch
from elasticsearch import helpers
import random
import time

es = Elasticsearch(hosts='http://127.0.0.1:9200')

names = ['刘一', '陈二', '张三', '李四', '王五', '赵六', '孙七', '周八', '吴九', '郑十']
sexs = ['男', '女']
subjects = ['语文', '数学', '英语', '生物', '地理']
grades = [85, 77, 96, 74, 85, 69, 84, 59, 67, 69, 86, 96, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86]

start = time.time()

# Bulk-write to Elasticsearch: 9,205 batches of 10,000 documents each
for j in range(9205):
    print(j)  # progress: index of the current batch
    action = [
        {
            "_index": "grade2",
            "_type": "doc",  # mapping types are deprecated in Elasticsearch 7 and removed in 8
            "_id": i,
            "_source": {
                "id": i,
                "name": random.choice(names),
                "sex": random.choice(sexs),
                "subject": random.choice(subjects),
                "grade": random.choice(grades)
            }
        } for i in range(10000 * j, 10000 * j + 10000)
    ]
    helpers.bulk(es, action)

end = time.time()
print('Elapsed time:', end - start)
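Each write example above builds a 10,000-element list for every batch. As a rough sketch of an alternative, `helpers.bulk` also accepts a generator and splits it into requests through its `chunk_size` parameter, so the batching loop can be left to the helper. The names `generate_actions` and `write_all` and the index name `grade_demo` are my own placeholders, not part of the original scripts. `_type` is left out on the assumption of a fresh index on Elasticsearch 7 or later. I have not timed this variant, so treat it as an illustration rather than a measured improvement.

```python
import random

NAMES = ['刘一', '陈二', '张三', '李四', '王五', '赵六', '孙七', '周八', '吴九', '郑十']
SEXES = ['男', '女']
SUBJECTS = ['语文', '数学', '英语', '生物', '地理']
GRADES = [85, 77, 96, 74, 85, 69, 84, 59, 67, 69, 86,
          96, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86]


def generate_actions(index, total, rng=random):
    """Yield one bulk action per document instead of building a list."""
    for i in range(total):
        yield {
            "_index": index,
            "_id": i,
            "_source": {
                "id": i,
                "name": rng.choice(NAMES),
                "sex": rng.choice(SEXES),
                "subject": rng.choice(SUBJECTS),
                "grade": rng.choice(GRADES),
            },
        }


def write_all(es, index, total, chunk_size=10000):
    """Stream the generated actions to Elasticsearch in chunks (hypothetical helper)."""
    # Imported lazily so generate_actions can be tried without the client installed.
    from elasticsearch import helpers
    # helpers.bulk consumes any iterable and sends chunk_size actions per request.
    return helpers.bulk(es, generate_actions(index, total), chunk_size=chunk_size)
```

Calling `write_all(es, 'grade_demo', 10000 * 1000)` would stream the same number of documents as Example 1. With its default arguments `helpers.bulk` returns a tuple of the success count and a list of errors.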

Next, query the data and compute grade totals in several ways.

Example 4 (fetches all the documents in one request, then times each calculation in the program):

from elasticsearch import Elasticsearch
import time


def search_data(es, size=10):
    # Fetch up to `size` documents from the grade index
    query = {
        "query": {
            "match_all": {}
        }
    }
    res = es.search(index='grade', body=query, size=size)
    return res


if __name__ == '__main__':
    start = time.time()
    es = Elasticsearch(hosts='http://192.168.1.1:9200')
    size = 10000
    res = search_data(es, size)
    # total = res['hits']['total']['value']

    # Iterate over the hits actually returned; indexing with range(size)
    # raises IndexError when fewer than `size` documents come back
    all_source = [hit['_source'] for hit in res['hits']['hits']]

    # Sum of all grades across all students and all subjects
    start1 = time.time()
    all_grade = 0
    for data in all_source:
        all_grade += int(data['grade'])
    print('Sum of all grades:', all_grade)
    end1 = time.time()
    print("Elapsed:", end1 - start1)

    # Total grade per student across all subjects
    start2 = time.time()
    all_name_grade = {}
    for data in all_source:
        if data['name'] in all_name_grade:
            all_name_grade[data['name']] += data['grade']
        else:
            all_name_grade[data['name']] = data['grade']
    print(all_name_grade)
    end2 = time.time()
    print("Elapsed:", end2 - start2)

    # Total grade per student per subject
    start3 = time.time()
    all_name_all_subject_grade = {}
    for data in all_source:
        subject_totals = all_name_all_subject_grade.setdefault(data['name'], {})
        subject_totals[data['subject']] = subject_totals.get(data['subject'], 0) + data['grade']
    print(all_name_all_subject_grade)
    end3 = time.time()
    print("Elapsed:", end3 - start3)

    end = time.time()
    print('Total elapsed:', end - start)

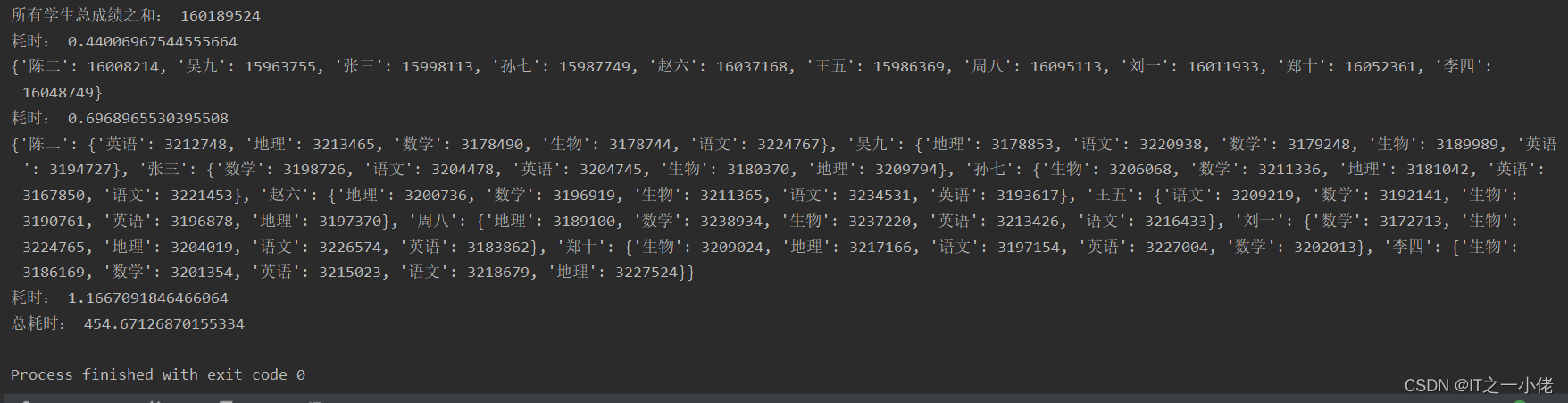

Output:

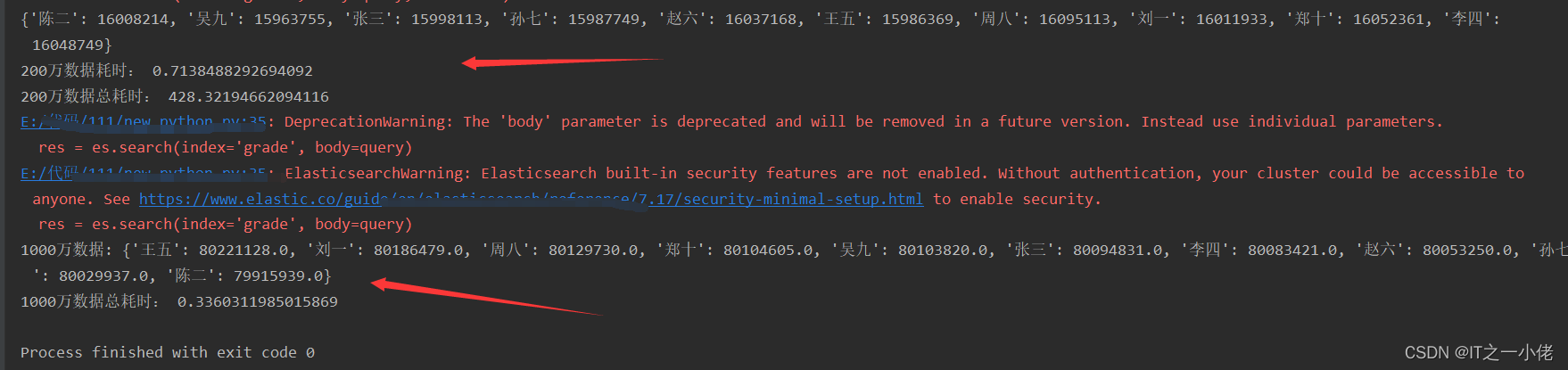

When size in Example 4 is changed from 10000 to 2000000, the run looks like this:

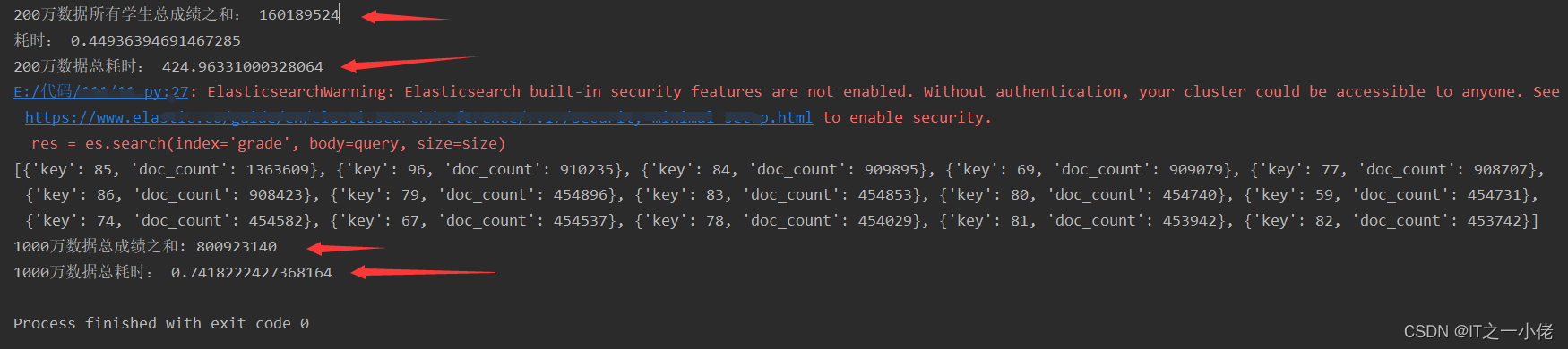
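A single search request can return at most `index.max_result_window` hits, which defaults to 10,000, so a size of 2,000,000 only works once that index setting has been raised. A possible alternative, sketched here under my own assumptions, is to page through the index with `helpers.scan`, which wraps the scroll API, and to fold the three totals from Example 4 into one pass. The function names `summarize` and `iter_sources` and the page size of 5,000 are illustrative choices, not something from the original post.

```python
from collections import defaultdict


def summarize(sources):
    """Accumulate the three totals from Example 4 in a single pass."""
    total = 0
    per_name = defaultdict(int)
    per_name_subject = defaultdict(lambda: defaultdict(int))
    for doc in sources:
        grade = int(doc['grade'])
        total += grade
        per_name[doc['name']] += grade
        per_name_subject[doc['name']][doc['subject']] += grade
    # Convert to plain dicts so the result prints and compares cleanly.
    nested = {name: dict(subs) for name, subs in per_name_subject.items()}
    return total, dict(per_name), nested


def iter_sources(es, index, page_size=5000):
    """Yield every _source in the index through the scroll API (hypothetical helper)."""
    # Imported lazily; only needed when talking to a live cluster.
    from elasticsearch import helpers
    query = {"query": {"match_all": {}}}
    for hit in helpers.scan(es, query=query, index=index, size=page_size):
        yield hit['_source']
```

With a running cluster, `summarize(iter_sources(es, 'grade'))` would walk the whole index without holding every hit in memory at once. It still transfers every document to the client, so it does not remove the cost of computing on the client side.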

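Real projects generally avoid the tallying approach of Example 4, because pulling every document into the program is slow on large data sets. Use Elasticsearch aggregation queries instead. First, compute the sum of all grades in the data.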
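One way to ask Elasticsearch for that total directly is a `sum` metric aggregation, which returns a single number instead of documents. The sketch below only builds the request body and reads the value out of a response; the function names and the aggregation name `total_grade` are my own labels for illustration.

```python
def build_sum_query(field='grade'):
    """Request body for a sum metric aggregation; size 0 skips returning hits."""
    return {
        "size": 0,
        "aggs": {
            "total_grade": {
                "sum": {"field": field}
            }
        }
    }


def extract_sum(response):
    """Read the aggregated value out of a search response."""
    return response['aggregations']['total_grade']['value']


def total_grade(es, index='grade'):
    """Run the aggregation against a live cluster (needs an Elasticsearch client)."""
    return extract_sum(es.search(index=index, body=build_sum_query()))
```

Elasticsearch reports the value of a `sum` aggregation as a floating-point number, so convert it with `int()` if an integer total is wanted.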

Example 5 (compares the plain calculation with the aggregation approach):

from elasticsearch import Elasticsearch
import time


def search_data(es, size=10):
    # Fetch up to `size` documents from the grade index
    query = {
        "query": {
            "match_all": {}
        }
    }
    res = es.search(index='grade', body=query, size=size)
    return res


def search_data2(es, size=10):
    # Bucket the documents by grade value; each bucket carries its document count
    query = {
        "aggs": {
            "all_grade": {
                "terms": {
                    "field": "grade",
                    "size": 1000
                }
            }
        }
    }
    res = es.search(index='grade', body=query, size=size)
    return res


if __name__ == '__main__':
    # Plain approach: fetch the documents and add them up in Python
    start = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    # Requires index.max_result_window on the index to be at least this
    # large (the default is 10,000)
    size = 2000000
    res = search_data(es, size)
    all_source = [hit['_source'] for hit in res['hits']['hits']]

    # Sum of all grades across all students and all subjects
    start1 = time.time()
    all_grade = 0
    for data in all_source:
        all_grade += int(data['grade'])
    print('Sum of all grades over 2 million documents:', all_grade)
    end1 = time.time()
    print("Elapsed:", end1 - start1)
    end = time.time()
    print('Total elapsed for 2 million documents:', end - start)

    # Aggregation approach: runs over the whole index (10 million documents)
    start_aggs = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    size = 0  # return no hits, only the aggregation result
    res = search_data2(es, size)
    aggs = res['aggregations']['all_grade']['buckets']
    print(aggs)
    total = 0  # renamed from `sum` to avoid shadowing the built-in
    for agg in aggs:
        total += agg['key'] * agg['doc_count']
    print('Sum of all grades over 10 million documents:', total)
    end_aggs = time.time()
    print('Total elapsed for 10 million documents:', end_aggs - start_aggs)

Output:

Next, compute each student's total grade across all subjects.

Example 6 (sub-aggregation: group first, then compute):

from elasticsearch import Elasticsearch
import time


def search_data(es, size=10):
    # Fetch up to `size` documents from the grade index
    query = {
        "query": {
            "match_all": {}
        }
    }
    res = es.search(index='grade', body=query, size=size)
    return res


def search_data2(es):
    # Group by student name, then sum the grades inside each group
    query = {
        "size": 0,
        "aggs": {
            "all_names": {
                "terms": {
                    "field": "name.keyword",
                    "size": 10
                },
                "aggs": {
                    "total_grade": {
                        "sum": {
                            "field": "grade"
                        }
                    }
                }
            }
        }
    }
    res = es.search(index='grade', body=query)
    return res


if __name__ == '__main__':
    # Plain approach: fetch the documents and add them up in Python
    start = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    # Requires index.max_result_window to be at least this large
    size = 2000000
    res = search_data(es, size)
    all_source = [hit['_source'] for hit in res['hits']['hits']]

    # Total grade per student across all subjects
    start2 = time.time()
    all_name_grade = {}
    for data in all_source:
        if data['name'] in all_name_grade:
            all_name_grade[data['name']] += data['grade']
        else:
            all_name_grade[data['name']] = data['grade']
    print(all_name_grade)
    end2 = time.time()
    print("Elapsed for 2 million documents:", end2 - start2)
    end = time.time()
    print('Total elapsed for 2 million documents:', end - start)

    # Aggregation approach: runs over the whole index (10 million documents)
    start_aggs = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    res = search_data2(es)
    aggs = res['aggregations']['all_names']['buckets']
    dic = {}
    for agg in aggs:
        dic[agg['key']] = agg['total_grade']['value']
    print('10 million documents:', dic)
    end_aggs = time.time()
    print('Total elapsed for 10 million documents:', end_aggs - start_aggs)

Output:

Next, compute each student's total grade for each subject.

Example 7:

from elasticsearch import Elasticsearch
import time


def search_data(es, size=10):
    # Fetch up to `size` documents from the grade index
    query = {
        "query": {
            "match_all": {}
        }
    }
    res = es.search(index='grade', body=query, size=size)
    return res


def search_data2(es):
    # Group by student name, then by subject, then sum the grades in each group
    query = {
        "size": 0,
        "aggs": {
            "all_names": {
                "terms": {
                    "field": "name.keyword",
                    "size": 10
                },
                "aggs": {
                    "all_subjects": {
                        "terms": {
                            "field": "subject.keyword",
                            "size": 5
                        },
                        "aggs": {
                            "total_grade": {
                                "sum": {
                                    "field": "grade"
                                }
                            }
                        }
                    }
                }
            }
        }
    }
    res = es.search(index='grade', body=query)
    return res


if __name__ == '__main__':
    # Plain approach: fetch the documents and add them up in Python
    start = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    # Requires index.max_result_window to be at least this large
    size = 2000000
    res = search_data(es, size)
    all_source = [hit['_source'] for hit in res['hits']['hits']]

    # Total grade per student per subject
    start3 = time.time()
    all_name_all_subject_grade = {}
    for data in all_source:
        subject_totals = all_name_all_subject_grade.setdefault(data['name'], {})
        subject_totals[data['subject']] = subject_totals.get(data['subject'], 0) + data['grade']
    print('2 million documents:', all_name_all_subject_grade)
    end3 = time.time()
    print("Elapsed:", end3 - start3)
    end = time.time()
    print('Total elapsed for 2 million documents:', end - start)

    # Aggregation approach: runs over the whole index (10 million documents)
    start_aggs = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    res = search_data2(es)
    aggs = res['aggregations']['all_names']['buckets']
    dic = {}
    for agg in aggs:
        dic[agg['key']] = {}
        for sub in agg['all_subjects']['buckets']:
            dic[agg['key']][sub['key']] = sub['total_grade']['value']
    print('10 million documents:', dic)
    end_aggs = time.time()
    print('Total elapsed for 10 million documents:', end_aggs - start_aggs)

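Output:

In the query examples above, which run against the grade index with its 10 million documents, the plain approach is slow even though it fetches only 2 million of them, while the aggregation query covers the whole index and saves a great deal of time. Against the grade2 index with 92.05 million documents, the plain approach takes far too long to be usable in a production environment, so the aggregation approach is the only practical choice.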

Example 8:

from elasticsearch import Elasticsearch
import time


def search_data(es):
    # Group by student name, then by subject, then sum the grades in each group
    query = {
        "size": 0,
        "aggs": {
            "all_names": {
                "terms": {
                    "field": "name.keyword",
                    "size": 10
                },
                "aggs": {
                    "all_subjects": {
                        "terms": {
                            "field": "subject.keyword",
                            "size": 5
                        },
                        "aggs": {
                            "total_grade": {
                                "sum": {
                                    "field": "grade"
                                }
                            }
                        }
                    }
                }
            }
        }
    }
    res = es.search(index='grade2', body=query)
    return res


if __name__ == '__main__':
    # Aggregation over the grade2 index (92.05 million documents)
    start_aggs = time.time()
    es = Elasticsearch(hosts='http://127.0.0.1:9200')
    res = search_data(es)
    aggs = res['aggregations']['all_names']['buckets']
    dic = {}
    for agg in aggs:
        dic[agg['key']] = {}
        for sub in agg['all_subjects']['buckets']:
            dic[agg['key']][sub['key']] = sub['total_grade']['value']
    print('92.05 million documents:', dic)
    end_aggs = time.time()
    print('Total elapsed for 92.05 million documents:', end_aggs - start_aggs)

Output:

Note: it is easier to write queries in Kibana first and then copy them into the code, because Kibana offers autocompletion for the query syntax.
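Going the other way can be handy too. As a small sketch, a query dict that already lives in Python can be printed in the request format that the Kibana Dev Tools console accepts, so it can be pasted there and refined with autocompletion. The function name `to_kibana_console` is made up for this illustration.

```python
import json


def to_kibana_console(index, query):
    """Render a query dict as a search request for the Kibana Dev Tools console."""
    body = json.dumps(query, indent=2, ensure_ascii=False)
    return f"GET {index}/_search\n{body}"


if __name__ == '__main__':
    query = {
        "size": 0,
        "aggs": {
            "all_names": {
                "terms": {"field": "name.keyword", "size": 10},
                "aggs": {"total_grade": {"sum": {"field": "grade"}}},
            }
        },
    }
    print(to_kibana_console("grade", query))
```

The first printed line is the request line and the rest is the JSON body, which is the same dict that the examples pass to `es.search` through `body`.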
