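This article presents three different fixes, which correspond to three different situations and lines of reasoning.

My problem appeared some time after integrating xxl-job into a Spring Boot project. If your application integrates xxl-job, or has other places where a port must be configured, this article may give you some ideas or solve your problem.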


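An exception is thrown after the project starts, but strangely the executor registers successfully in the job scheduling center and jobs still run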

. ____ _ __ _ _

/\\ / ___'_ __ _ _(_)_ __ __ _ \ \ \ \

( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \

\\/ ___)| |_)| | | | | || (_| | ) ) ) )

' |____| .__|_| |_|_| |_\__, | / / / /

=========|_|==============|___/=/_/_/_/

:: Spring Boot :: (v2.2.2.RELEASE)

2023-02-14 11:22:15.516 INFO 4436 --- [ main] com.jxj.SafetyWebserverApplication : Starting SafetyWebserverApplication on abc with PID 4436 (C:\project\safetyproduction_collectdata\target\classes started by whx in C:\project\safetyproduction_collectdata)

2023-02-14 11:22:15.521 INFO 4436 --- [ main] com.jxj.SafetyWebserverApplication : No active profile set, falling back to default profiles: default

2023-02-14 11:22:16.722 INFO 4436 --- [ main] .s.d.r.c.RepositoryConfigurationDelegate : Multiple Spring Data modules found, entering strict repository configuration mode!

....

2023-02-14 11:22:19.191 INFO 4436 --- [ main] o.s.s.c.ThreadPoolTaskScheduler : Initializing ExecutorService 'taskScheduler'

2023-02-14 11:22:19.394 INFO 4436 --- [ main] com.jxj.config.XxlJobConfig : >>>>>>>>>>> xxl-job config init.

2023-02-14 11:22:19.666 INFO 4436 --- [ main] o.s.s.concurrent.ThreadPoolTaskExecutor : Initializing ExecutorService 'applicationTaskExecutor'

2023-02-14 11:22:20.271 INFO 4436 --- [ main] c.xxl.job.core.executor.XxlJobExecutor : >>>>>>>>>>> xxl-job register jobhandler success, name:demoJobHandler, jobHandler:com.xxl.job.core.handler.impl.MethodJobHandler@c6bf8d9[class com.jxj.task.WarningTask#demoJobHandler]

2023-02-14 11:22:20.585 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : Adding {logging-channel-adapter:_org.springframework.integration.errorLogger} as a subscriber to the 'errorChannel' channel

2023-02-14 11:22:20.586 INFO 4436 --- [ main] o.s.i.channel.PublishSubscribeChannel : Channel 'application.errorChannel' has 1 subscriber(s).

2023-02-14 11:22:20.586 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : started bean '_org.springframework.integration.errorLogger'

2023-02-14 11:22:20.586 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : Adding {message-handler:mqttPublisherConfig.mqttOutbound.serviceActivator} as a subscriber to the 'mqttOutboundChannel' channel

2023-02-14 11:22:20.586 INFO 4436 --- [ main] o.s.integration.channel.DirectChannel : Channel 'application.mqttOutboundChannel' has 1 subscriber(s).

2023-02-14 11:22:20.600 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : started bean 'mqttPublisherConfig.mqttOutbound.serviceActivator'

2023-02-14 11:22:20.600 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : Adding {message-handler:mqttSenderConfig.mqttOutbound.serviceActivator} as a subscriber to the 'mqttOutboundChannel1' channel

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.integration.channel.DirectChannel : Channel 'application.mqttOutboundChannel1' has 1 subscriber(s).

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : started bean 'mqttSenderConfig.mqttOutbound.serviceActivator'

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : Adding {message-handler:mqttSubscriberConfig.handler.serviceActivator} as a subscriber to the 'mqttInboundChannel' channel

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.integration.channel.DirectChannel : Channel 'application.mqttInboundChannel' has 1 subscriber(s).

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.i.endpoint.EventDrivenConsumer : started bean 'mqttSubscriberConfig.handler.serviceActivator'

2023-02-14 11:22:20.601 INFO 4436 --- [ main] ProxyFactoryBean$MethodInvocationGateway : started bean 'mqttGateway'

2023-02-14 11:22:20.601 INFO 4436 --- [ main] ProxyFactoryBean$MethodInvocationGateway : started bean 'mqttGateway'

2023-02-14 11:22:20.601 INFO 4436 --- [ main] ProxyFactoryBean$MethodInvocationGateway : started bean 'mqttGateway'

2023-02-14 11:22:20.601 INFO 4436 --- [ main] o.s.i.gateway.GatewayProxyFactoryBean : started bean 'mqttGateway'

Exception in thread "Thread-17" java.net.BindException: Address already in use: bind

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at io.netty.channel.socket.nio.NioServerSocketChannel.doBind(NioServerSocketChannel.java:134)

at io.netty.channel.AbstractChannel$AbstractUnsafe.bind(AbstractChannel.java:551)

at io.netty.channel.DefaultChannelPipeline$HeadContext.bind(DefaultChannelPipeline.java:1346)

at io.netty.channel.AbstractChannelHandlerContext.invokeBind(AbstractChannelHandlerContext.java:503)

at io.netty.channel.AbstractChannelHandlerContext.bind(AbstractChannelHandlerContext.java:488)

at io.netty.channel.DefaultChannelPipeline.bind(DefaultChannelPipeline.java:985)

at io.netty.channel.AbstractChannel.bind(AbstractChannel.java:247)

at io.netty.bootstrap.AbstractBootstrap$2.run(AbstractBootstrap.java:344)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute$$$capture(AbstractEventExecutor.java:163)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java)

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:510)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:518)

at io.netty.util.concurrent.SingleThreadEventExecutor$6.run(SingleThreadEventExecutor.java:1050)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

2023-02-14 11:22:21.444 INFO 4436 --- [ main] .m.i.MqttPahoMessageDrivenChannelAdapter : started bean 'inbound'; defined in: 'class path resource [com/jxj/config/MqttSubscriberConfig.class]'; from source: 'org.springframework.core.type.classreading.SimpleMethodMetadata@1d4664d7'

2023-02-14 11:22:21.446 INFO 4436 --- [ main] o.s.a.r.c.CachingConnectionFactory : Attempting to connect to: [localhost:5672]

2023-02-14 11:22:25.537 INFO 4436 --- [ main] o.s.a.r.l.SimpleMessageListenerContainer : Broker not available; cannot force queue declarations during start: java.net.ConnectException: Connection refused: connect

2023-02-14 11:22:25.545 INFO 4436 --- [ntContainer#0-1] o.s.a.r.c.CachingConnectionFactory : Attempting to connect to: [localhost:5672]

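Some posts online suggest the following fix:

In older versions of xxl-job, the XxlJobSpringExecutor bean must be declared with initMethod = "start", destroyMethod = "destroy" on @Bean, whereas in newer versions of xxl-job (such as 2.1.2) initMethod = "start", destroyMethod = "destroy" must be removed.

That was not my problem. After I added (initMethod = "start", destroyMethod = "destroy") to the bean, the "Address already in use" exception was reported twice.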

Exception in thread "Thread-14" java.net.BindException: Address already in use: bind

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at io.netty.channel.socket.nio.NioServerSocketChannel.doBind(NioServerSocketChannel.java:134)

at io.netty.channel.AbstractChannel$AbstractUnsafe.bind(AbstractChannel.java:551)

at io.netty.channel.DefaultChannelPipeline$HeadContext.bind(DefaultChannelPipeline.java:1346)

at io.netty.channel.AbstractChannelHandlerContext.invokeBind(AbstractChannelHandlerContext.java:503)

at io.netty.channel.AbstractChannelHandlerContext.bind(AbstractChannelHandlerContext.java:488)

at io.netty.channel.DefaultChannelPipeline.bind(DefaultChannelPipeline.java:985)

at io.netty.channel.AbstractChannel.bind(AbstractChannel.java:247)

at io.netty.bootstrap.AbstractBootstrap$2.run(AbstractBootstrap.java:344)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute$$$capture(AbstractEventExecutor.java:163)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java)

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:510)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:518)

at io.netty.util.concurrent.SingleThreadEventExecutor$6.run(SingleThreadEventExecutor.java:1050)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

2023-02-14 11:25:20.568 INFO 18140 --- [ main] c.xxl.job.core.executor.XxlJobExecutor : >>>>>>>>>>> xxl-job register jobhandler success, name:demoJobHandler, jobHandler:com.xxl.job.core.handler.impl.MethodJobHandler@1618c98a[class com.jxj.task.WarningTask#demoJobHandler]

Exception in thread "Thread-20" java.net.BindException: Address already in use: bind

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at io.netty.channel.socket.nio.NioServerSocketChannel.doBind(NioServerSocketChannel.java:134)

at io.netty.channel.AbstractChannel$AbstractUnsafe.bind(AbstractChannel.java:551)

at io.netty.channel.DefaultChannelPipeline$HeadContext.bind(DefaultChannelPipeline.java:1346)

at io.netty.channel.AbstractChannelHandlerContext.invokeBind(AbstractChannelHandlerContext.java:503)

at io.netty.channel.AbstractChannelHandlerContext.bind(AbstractChannelHandlerContext.java:488)

at io.netty.channel.DefaultChannelPipeline.bind(DefaultChannelPipeline.java:985)

at io.netty.channel.AbstractChannel.bind(AbstractChannel.java:247)

at io.netty.bootstrap.AbstractBootstrap$2.run(AbstractBootstrap.java:344)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute$$$capture(AbstractEventExecutor.java:163)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java)

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:510)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:518)

at io.netty.util.concurrent.SingleThreadEventExecutor$6.run(SingleThreadEventExecutor.java:1050)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

// Original annotation:

@Bean

public XxlJobSpringExecutor xxlJobExecutor() {

...

}

// Modified annotation:

@Bean(initMethod = "start", destroyMethod = "destroy")

public XxlJobSpringExecutor xxlJobExecutor() {

...

}

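Later I found the actual cause: my local instance and the production instance were connected to the same xxl-job admin, and both used the same executor port. That is what triggered the error!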

xxl:
  job:
    accessToken: xxx
    admin:
      # addresses: http://1xxxxx/xxl-job-admin
      addresses: http://39xxxxx/xxl-job-admin
    executor:
      address: 'xxx'
      appname: safexxxxxst # this name must match the one configured in the admin page
      ip: ''
      logpath: /datxxxxjob/joxxxler
      logretentiondays: 30
      port: 9996

The duplicated port value below was the cause. Changing it to a port that is not in use fixes the problem.

port: 9996
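Before picking a new executor port, it can help to check whether the candidate is actually free on the machine. The following is a minimal sketch using only the JDK. `PortCheck` and `isPortFree` are hypothetical names, not part of xxl-job, and 9996 is simply the value from the configuration above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.ServerSocket;

// Hypothetical helper, not part of xxl-job: checks whether a TCP port can be bound locally.
class PortCheck {

    /** Returns true if a listening socket can currently be bound to the given port. */
    static boolean isPortFree(int port) {
        try (ServerSocket socket = new ServerSocket()) {
            socket.bind(new InetSocketAddress(port));
            return true;
        } catch (IOException e) {
            // BindException is a subclass of IOException: something else already holds the port
            return false;
        }
    }

    public static void main(String[] args) {
        int executorPort = 9996; // the executor port from the YAML above
        System.out.println("port " + executorPort + " free: " + isPortFree(executorPort));
    }
}
```

The check only reflects the moment it runs, so another process can still grab the port before the executor starts.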

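I had already solved the problem as the second case, but the same error came back that afternoon, which led to this third case.

The key error log from the failed startup: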

2023-02-14 13:59:12.074 INFO 8364 --- [ main] o.s.a.r.c.CachingConnectionFactory : Created new connection: rabbitConnectionFactory#131ba005:0/SimpleConnection@5981f2c6 [delegate=amqp://root@127.0.0.1:5673/, localPort= 5415]

Exception in thread "Thread-21" java.net.BindException: Address already in use: bind

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at io.netty.channel.socket.nio.NioServerSocketChannel.doBind(NioServerSocketChannel.java:134)

at io.netty.channel.AbstractChannel$AbstractUnsafe.bind(AbstractChannel.java:551)

at io.netty.channel.DefaultChannelPipeline$HeadContext.bind(DefaultChannelPipeline.java:1346)

at io.netty.channel.AbstractChannelHandlerContext.invokeBind(AbstractChannelHandlerContext.java:503)

at io.netty.channel.AbstractChannelHandlerContext.bind(AbstractChannelHandlerContext.java:488)

at io.netty.channel.DefaultChannelPipeline.bind(DefaultChannelPipeline.java:985)

at io.netty.channel.AbstractChannel.bind(AbstractChannel.java:247)

at io.netty.bootstrap.AbstractBootstrap$2.run(AbstractBootstrap.java:344)

at

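Because I had already solved this once, I was sensitive to ports (the analysis below shows why), so I searched the YAML file for every occurrence of port and found five.

Rule out the project's own port (a conflict on the web port fails immediately and stops the application)

Rule out xxl-job (that conflict was already fixed in the second case)

That leaves the integrated redis, elasticsearch and rabbitmq

I had not enabled redis or elasticsearch, so the problem could only be in rabbitmq.

I run rabbitmq locally in Docker and connect to it for testing.

Then I read the log carefully again, specifically the first line pasted above: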

[delegate=amqp://root@127.0.0.1:5673/, localPort= 5415]

The error was reported right after this line was printed, with localPort= 5415, so I checked how port 5415 was being used on my machine:

netstat -aon|findstr 5415

Sure enough, two different processes show up for it!

C:\Uxxxs\1xx0>netstat -aon|findstr 5415

TCP 127.0.0.1:5415 127.0.0.1:5673 ESTABLISHED 8364

TCP 127.0.0.1:5673 127.0.0.1:5415 ESTABLISHED 4136

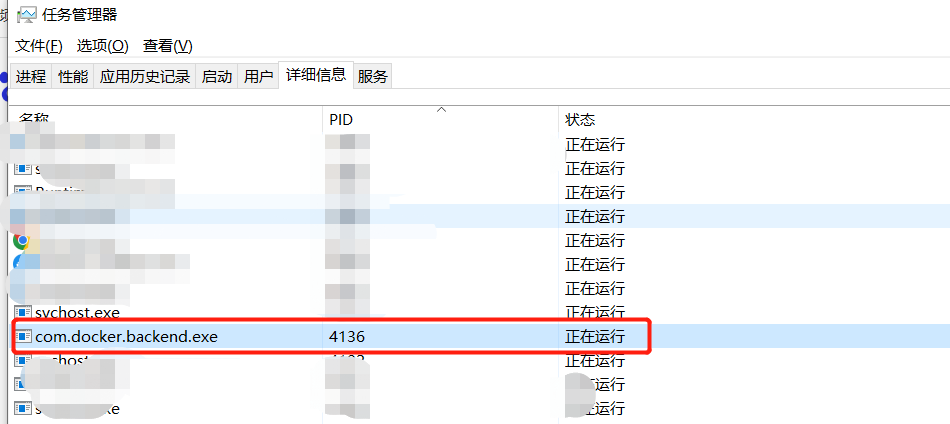

Then I opened the Details tab in Task Manager and found that PID 4136 is Docker.

I restarted my computer, and that solved it.
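To make the netstat output easier to read, here is a minimal sketch that extracts the owning PID for a given local port. `NetstatPids` and `pidsWithLocalPort` are hypothetical names, and the two input lines are copied from the output above. Read this way, the two rows look like the two ends of a single connection: PID 8364 (the PID shown in the Spring Boot log) owns local port 5415 and talks to port 5673, which is owned by PID 4136.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative parser for Windows `netstat -aon` TCP lines; names are made up for this sketch.
class NetstatPids {

    /** Returns the PIDs (last column) of all lines whose local address ends with ":port". */
    static List<String> pidsWithLocalPort(List<String> netstatLines, int port) {
        List<String> pids = new ArrayList<>();
        for (String line : netstatLines) {
            String[] cols = line.trim().split("\\s+");
            // expected TCP columns: protocol, local address, foreign address, state, PID
            if (cols.length == 5 && cols[1].endsWith(":" + port)) {
                pids.add(cols[4]);
            }
        }
        return pids;
    }

    public static void main(String[] args) {
        List<String> lines = List.of(
                "TCP 127.0.0.1:5415 127.0.0.1:5673 ESTABLISHED 8364",
                "TCP 127.0.0.1:5673 127.0.0.1:5415 ESTABLISHED 4136");
        System.out.println(pidsWithLocalPort(lines, 5415)); // prints [8364]
        System.out.println(pidsWithLocalPort(lines, 5673)); // prints [4136]
    }
}
```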

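After startup, the program starts a separate thread to bind the xxl-job executor port. That port was already in use, so the bind on that thread fails with "Address already in use".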
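The behaviour described here, a bind that fails on a background thread while the main thread keeps going, can be reproduced with plain JDK sockets. This is only a sketch of the general mechanism, not xxl-job's or Netty's actual code, and `BackgroundBind` and `bindInBackground` are invented names. It would also be consistent with the log above, where the exception is reported for "Thread-17" and the main thread continues to log.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.util.concurrent.atomic.AtomicReference;

// Illustrative only: class and method names are invented, not taken from xxl-job or Netty.
class BackgroundBind {

    /** Binds `port` on a separate thread and returns the exception that thread died with, or null. */
    static Throwable bindInBackground(int port) {
        AtomicReference<Throwable> failure = new AtomicReference<>();
        Thread worker = new Thread(() -> {
            try (ServerSocket socket = new ServerSocket()) {
                socket.bind(new InetSocketAddress(port));
            } catch (IOException e) {
                throw new UncheckedIOException(e); // escapes run(), ending only this thread
            }
        });
        // Record the failure instead of letting the JVM print "Exception in thread ..."
        worker.setUncaughtExceptionHandler((thread, error) -> failure.set(error));
        worker.start();
        try {
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return failure.get();
    }

    public static void main(String[] args) throws IOException {
        try (ServerSocket holder = new ServerSocket(0)) { // occupy a port chosen by the OS
            Throwable failure = bindInBackground(holder.getLocalPort());
            System.out.println("background thread failed with: " + failure);
            System.out.println("main thread is still running");
        }
    }
}
```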

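When a service creates a listener, it seems that the bind fails if the port already has sockets in LISTENING, ESTABLISHED, TIME_WAIT or similar states. The underlying mechanism is worth studying.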

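Key points of TCP state transitions

TCP requires that an established connection be closed with a four-way handshake. If one of the steps is missing, the connection is left half-dead and the resources it holds are not released. A network server has to manage a large number of connections at once, so it is important to make sure unused connections are closed completely; otherwise a large number of dead connections wastes server resources. Of all the TCP states, two deserve the most attention: CLOSE_WAIT and TIME_WAIT.

1. LISTENING

After a service (for example an FTP server) starts, it is first in the LISTENING state.

2. ESTABLISHED

ESTABLISHED means that a connection has been set up and the two machines are communicating.

3. TIME_WAIT

Our side actively calls close() to disconnect, and once the peer has confirmed the close the state becomes TIME_WAIT. TCP specifies that TIME_WAIT lasts for 2MSL (twice the maximum segment lifetime), so that the old connection's state cannot affect a new connection. Resources held by a connection in TIME_WAIT are not released by the kernel during that time, so a server should, where possible, avoid being the side that actively closes connections, in order to reduce the resources wasted on TIME_WAIT.

One way to avoid the waste caused by TIME_WAIT is to change the socket's LINGER option (SO_LINGER). However, this practice is discouraged for TCP, and in some cases it can cause errors.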
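As a rough illustration of the socket options involved, the sketch below uses the JDK's `ServerSocket.setReuseAddress` and `Socket.setSoLinger`. `TimeWaitOptions` and its methods are hypothetical names. Per the JDK documentation, enabling SO_REUSEADDR before `bind()` allows binding even though a previous connection is still in a timeout state, and a linger timeout of zero makes `close()` forceful. Exact behaviour differs between operating systems, so treat this as a starting point rather than a recommendation.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

// Illustrative only: shows where the options are set, not a recommended production setup.
class TimeWaitOptions {

    /** Opens a listener with SO_REUSEADDR enabled before bind; port 0 asks the OS for a free port. */
    static ServerSocket listenWithReuseAddress(int port) {
        ServerSocket server = null;
        try {
            server = new ServerSocket();
            server.setReuseAddress(true); // must be set before bind() to have an effect
            server.bind(new InetSocketAddress(port));
            return server;
        } catch (IOException e) {
            if (server != null) {
                try { server.close(); } catch (IOException ignored) { }
            }
            throw new UncheckedIOException(e);
        }
    }

    /** Enables SO_LINGER with a zero timeout and closes, so the close is forceful rather than graceful. */
    static void closeWithZeroLinger(Socket socket) {
        try {
            socket.setSoLinger(true, 0);
            socket.close();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        try (ServerSocket server = listenWithReuseAddress(0)) {
            System.out.println("SO_REUSEADDR enabled: " + server.getReuseAddress());
            Socket client = new Socket("127.0.0.1", server.getLocalPort());
            closeWithZeroLinger(client); // unsent data is discarded on a forceful close
            System.out.println("client closed: " + client.isClosed());
        }
    }
}
```

A forceful close discards any data that has not been sent yet, which is one reason the text above warns against it.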
