Training YOLOv8 on a Custom Dataset (Football Detection)

- Familiarity with Python

matplotlib>=3.2.2

numpy>=1.18.5

opencv-python>=4.6.0

Pillow>=7.1.2

PyYAML>=5.3.1

requests>=2.23.0

scipy>=1.4.1

torch>=1.7.0

torchvision>=0.8.1

tqdm>=4.64.0

tensorboard>=2.4.1

pandas>=1.1.4

seaborn>=0.11.0

pip install ultralytics
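To confirm that an existing environment meets the minimum versions listed above, a small check such as the following may help. It is only a sketch: it assumes Python 3.8 or newer (for importlib.metadata; the training log in this article shows Python 3.7, where the importlib_metadata backport would be needed), it checks only a subset of the list, and the helper names parse_version, meets_minimum and check_environment are made up for illustration.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Minimum versions copied from the requirements list above (subset only).
MINIMUMS = {
    "numpy": "1.18.5",
    "torch": "1.7.0",
    "torchvision": "0.8.1",
    "opencv-python": "4.6.0",
    "PyYAML": "5.3.1",
}


def parse_version(text):
    """Turn '1.11.0+cu113' into (1, 11, 0); parsing stops at the first non-numeric part."""
    parts = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(installed, minimum):
    """Compare two version strings after padding them to the same length."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_environment(minimums=MINIMUMS):
    """Return {package: status} where status is 'missing' or the installed version."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            report[name] = "missing"
            continue
        verdict = "ok" if meets_minimum(installed, minimum) else "needs >= " + minimum
        report[name] = f"{installed} ({verdict})"
    return report


for package, status in check_environment().items():
    print(f"{package}: {status}")
```

The comparison is deliberately simple and ignores pre-release suffixes; for anything stricter, pip itself remains the authority on whether a requirement is satisfied.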

Official YOLOv8 source code: https://github.com/ultralytics/ultralytics.git

Project repository for this article: https://gitcode.net/FriendshipTang/yolov8.git

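Note: this article does not clone the official YOLOv8 repository directly, for two reasons:

- On top of the official source code, I downloaded and added the yolov8s.pt weights file, and created a football.yaml file describing the football dataset, so both are ready for later use.
- Cloning the official repository directly produces a nested path "/ultralytics/ultralytics", which may cause from ultralytics import YOLO or import ultralytics to fail.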

git clone https://gitcode.net/FriendshipTang/yolov8.git

Cloning into 'yolov8'...

remote: Enumerating objects: 4583, done.

remote: Counting objects: 100% (4583/4583), done.

remote: Compressing objects: 100% (1270/1270), done.

remote: Total 4583 (delta 2981), reused 4576 (delta 2979), pack-reused 0

Receiving objects: 100% (4583/4583), 23.95 MiB | 1.55 MiB/s, done.

Resolving deltas: 100% (2981/2981), done.
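Once the repository is cloned, it can be useful to see where Python would resolve the ultralytics package from, given the import problem mentioned in the note. The sketch below uses only the standard library and does not import the package. One possible cause of such errors, which is my assumption and not something the note states, is that the outer directory of a nested "/ultralytics/ultralytics" layout gets picked up as a namespace package (a bare directory without __init__.py). The function name describe_import is hypothetical.

```python
import importlib.util


def describe_import(name):
    """Report where `import name` would resolve, without importing it."""
    spec = importlib.util.find_spec(name)
    if spec is None:
        return {"found": False, "locations": [], "namespace": False}
    locations = [str(path) for path in (spec.submodule_search_locations or [])]
    # A package that has search locations but no origin file is a namespace
    # package, i.e. a plain directory that lacks an __init__.py.
    namespace = spec.origin is None and bool(locations)
    return {"found": True, "locations": locations, "namespace": namespace}


# Run this from the directory where you intend to start training.
print(describe_import("ultralytics"))
```

If the result reports a namespace package, or a location that is not the one you expect, changing the working directory or installing the package with pip is worth trying before debugging further.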

To download instead of cloning, go to https://gitcode.net/FriendshipTang/yolov8.git and download the source code as a zip archive.

For details, see the article "Training YOLOv7 on a Custom Dataset (Mask Detection)".

Link: https://blog.csdn.net/FriendshipTang/article/details/126513426

The contents of football.yaml are shown here as an example; adapt them to your own dataset.

# train and val data as 1) directory: path/images/, 2) file: path/images.txt, or 3) list: [path1/images/, path2/images/]

train: ./yolov8/football_yolodataset/trainset

val: ./yolov8/football_yolodataset/testset

# number of classes

nc: 1

# class names

names: ["football"]
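Before starting a long run, it may be worth checking a dataset file like this one for the two mistakes that are easiest to make: a class count that does not match the names list, and paths that do not exist. The following is a minimal sketch under stated assumptions: relative paths are resolved against a root directory you pass in (how the training code resolves them is not covered here), and validate_dataset_config and load_and_validate are illustrative names, not part of any library.

```python
from pathlib import Path


def validate_dataset_config(cfg, root="."):
    """Return a list of problems in a parsed dataset config (empty if none)."""
    problems = [f"missing key: {key}" for key in ("train", "val", "nc", "names") if key not in cfg]
    if problems:
        return problems
    if cfg["nc"] != len(cfg["names"]):
        problems.append(f"nc={cfg['nc']} but {len(cfg['names'])} class names given")
    for split in ("train", "val"):
        # The file's own comment allows a single path or a list of paths.
        entries = cfg[split] if isinstance(cfg[split], list) else [cfg[split]]
        for entry in entries:
            path = Path(root) / entry
            if not path.exists():
                problems.append(f"{split} path does not exist: {path}")
    return problems


def load_and_validate(yaml_path, root="."):
    """Parse a YAML file with PyYAML and validate it."""
    # Imported lazily so the validator above works without PyYAML installed.
    import yaml

    with open(yaml_path, encoding="utf-8") as handle:
        cfg = yaml.safe_load(handle)
    return validate_dataset_config(cfg, root)
```

Calling load_and_validate("football.yaml") from the directory you train in returns an empty list when nothing is wrong, and otherwise one message per problem.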

yolo detect train data=football.yaml model=yolov8s.pt epochs=20 imgsz=640 device=0,1 batch=128

Ultralytics YOLOv8.0.37 🚀 Python-3.7.12 torch-1.11.0 CUDA:0 (Tesla T4, 15110MiB)

CUDA:1 (Tesla T4, 15110MiB)

yolo/engine/trainer: task=detect, mode=train, model=yolov8s.pt, data=football.yaml, epochs=20, patience=50, batch=128, imgsz=640, save=True, save_period=-1, cache=False, device=(0, 1), workers=8, project=None, name=None, exist_ok=False, pretrained=False, optimizer=SGD, verbose=True, seed=0, deterministic=True, single_cls=False, image_weights=False, rect=False, cos_lr=False, close_mosaic=10, resume=False, min_memory=False, overlap_mask=True, mask_ratio=4, dropout=False, val=True, split=val, save_json=False, save_hybrid=False, conf=0.001, iou=0.7, max_det=300, half=False, dnn=False, plots=True, source=ultralytics/assets/, show=False, save_txt=False, save_conf=False, save_crop=False, hide_labels=False, hide_conf=False, vid_stride=1, line_thickness=3, visualize=False, augment=False, agnostic_nms=False, classes=None, retina_masks=False, boxes=True, format=torchscript, keras=False, optimize=False, int8=False, dynamic=False, simplify=False, opset=None, workspace=4, nms=False, lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.001, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=7.5, cls=0.5, dfl=1.5, fl_gamma=0.0, label_smoothing=0.0, nbs=64, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.0, copy_paste=0.0, cfg=None, v5loader=False, save_dir=runs/detect/train

Overriding model.yaml nc=80 with nc=1

from n params module arguments

0 -1 1 928 ultralytics.nn.modules.Conv [3, 32, 3, 2]

1 -1 1 18560 ultralytics.nn.modules.Conv [32, 64, 3, 2]

2 -1 1 29056 ultralytics.nn.modules.C2f [64, 64, 1, True]

3 -1 1 73984 ultralytics.nn.modules.Conv [64, 128, 3, 2]

4 -1 2 197632 ultralytics.nn.modules.C2f [128, 128, 2, True]

5 -1 1 295424 ultralytics.nn.modules.Conv [128, 256, 3, 2]

6 -1 2 788480 ultralytics.nn.modules.C2f [256, 256, 2, True]

7 -1 1 1180672 ultralytics.nn.modules.Conv [256, 512, 3, 2]

8 -1 1 1838080 ultralytics.nn.modules.C2f [512, 512, 1, True]

9 -1 1 656896 ultralytics.nn.modules.SPPF [512, 512, 5]

10 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

11 [-1, 6] 1 0 ultralytics.nn.modules.Concat [1]

12 -1 1 591360 ultralytics.nn.modules.C2f [768, 256, 1]

13 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

14 [-1, 4] 1 0 ultralytics.nn.modules.Concat [1]

15 -1 1 148224 ultralytics.nn.modules.C2f [384, 128, 1]

16 -1 1 147712 ultralytics.nn.modules.Conv [128, 128, 3, 2]

17 [-1, 12] 1 0 ultralytics.nn.modules.Concat [1]

18 -1 1 493056 ultralytics.nn.modules.C2f [384, 256, 1]

19 -1 1 590336 ultralytics.nn.modules.Conv [256, 256, 3, 2]

20 [-1, 9] 1 0 ultralytics.nn.modules.Concat [1]

21 -1 1 1969152 ultralytics.nn.modules.C2f [768, 512, 1]

22 [15, 18, 21] 1 2116435 ultralytics.nn.modules.Detect [1, [128, 256, 512]]

Model summary: 225 layers, 11135987 parameters, 11135971 gradients, 28.6 GFLOPs

Transferred 349/355 items from pretrained weights

DDP settings: RANK 0, WORLD_SIZE 2, DEVICE cuda:0

Overriding model.yaml nc=80 with nc=1

from n params module arguments

0 -1 1 928 ultralytics.nn.modules.Conv [3, 32, 3, 2]

1 -1 1 18560 ultralytics.nn.modules.Conv [32, 64, 3, 2]

2 -1 1 29056 ultralytics.nn.modules.C2f [64, 64, 1, True]

3 -1 1 73984 ultralytics.nn.modules.Conv [64, 128, 3, 2]

4 -1 2 197632 ultralytics.nn.modules.C2f [128, 128, 2, True]

5 -1 1 295424 ultralytics.nn.modules.Conv [128, 256, 3, 2]

6 -1 2 788480 ultralytics.nn.modules.C2f [256, 256, 2, True]

7 -1 1 1180672 ultralytics.nn.modules.Conv [256, 512, 3, 2]

8 -1 1 1838080 ultralytics.nn.modules.C2f [512, 512, 1, True]

9 -1 1 656896 ultralytics.nn.modules.SPPF [512, 512, 5]

10 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

11 [-1, 6] 1 0 ultralytics.nn.modules.Concat [1]

12 -1 1 591360 ultralytics.nn.modules.C2f [768, 256, 1]

13 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

14 [-1, 4] 1 0 ultralytics.nn.modules.Concat [1]

15 -1 1 148224 ultralytics.nn.modules.C2f [384, 128, 1]

16 -1 1 147712 ultralytics.nn.modules.Conv [128, 128, 3, 2]

17 [-1, 12] 1 0 ultralytics.nn.modules.Concat [1]

18 -1 1 493056 ultralytics.nn.modules.C2f [384, 256, 1]

19 -1 1 590336 ultralytics.nn.modules.Conv [256, 256, 3, 2]

20 [-1, 9] 1 0 ultralytics.nn.modules.Concat [1]

21 -1 1 1969152 ultralytics.nn.modules.C2f [768, 512, 1]

22 [15, 18, 21] 1 2116435 ultralytics.nn.modules.Detect [1, [128, 256, 512]]

Model summary: 225 layers, 11135987 parameters, 11135971 gradients, 28.6 GFLOPs

Transferred 349/355 items from pretrained weights

optimizer: SGD(lr=0.01) with parameter groups 57 weight(decay=0.0), 64 weight(decay=0.002), 63 bias

train: Scanning /kaggle/working/yolov8/football_yolodataset/trainset/labels.cach

albumentations: Blur(p=0.01, blur_limit=(3, 7)), MedianBlur(p=0.01, blur_limit=(3, 7)), ToGray(p=0.01), CLAHE(p=0.01, clip_limit=(1, 4.0), tile_grid_size=(8, 8))

val: Scanning /kaggle/working/yolov8/football_yolodataset/testset/labels.cache..

Image sizes 640 train, 640 val

Using 2 dataloader workers

Logging results to runs/detect/train

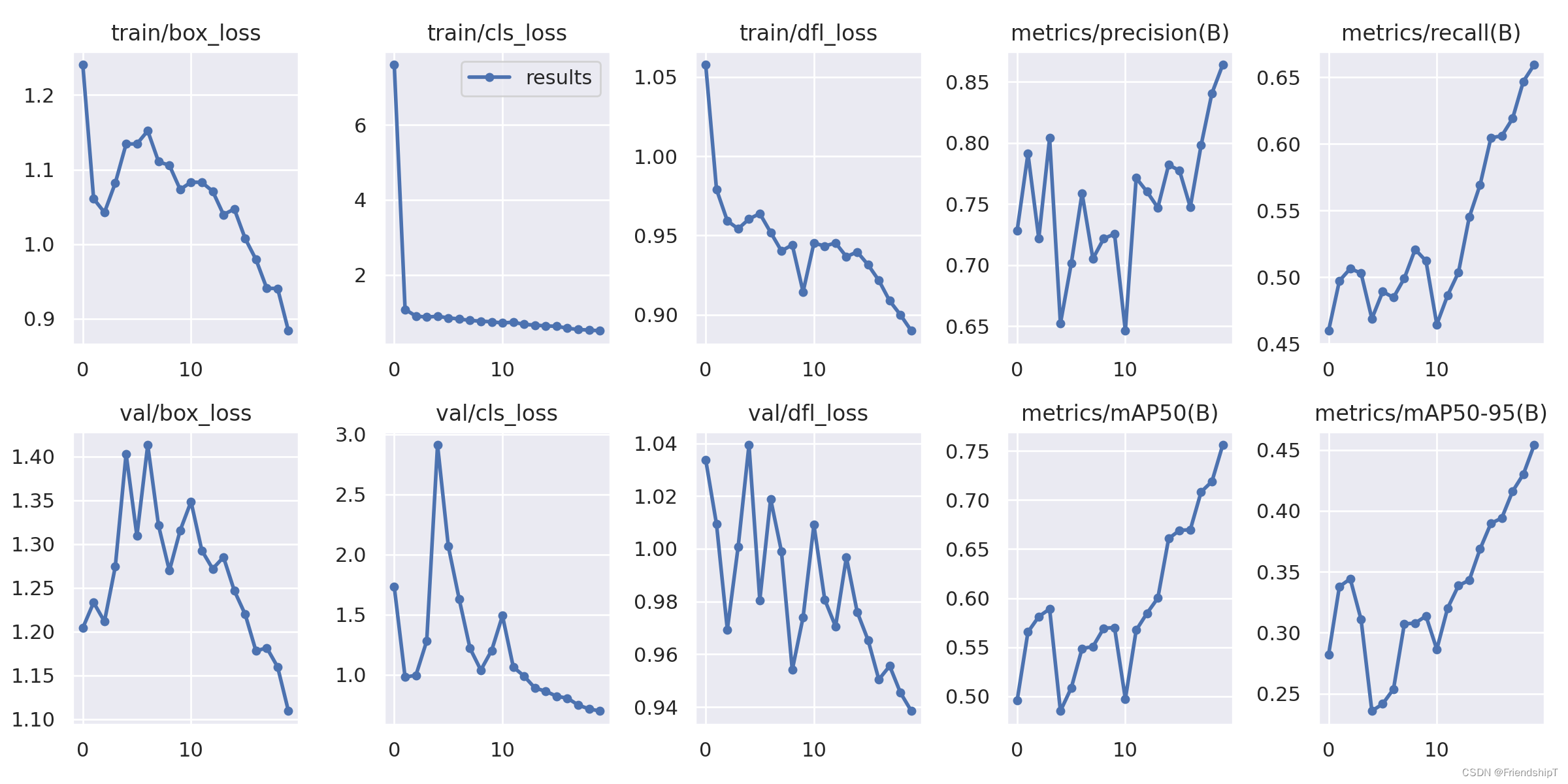

Starting training for 20 epochs...

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

1/20 13.7G 1.241 7.611 1.058 50 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.728 0.46 0.496 0.282

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

2/20 13.7G 1.061 1.076 0.979 46 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.791 0.497 0.566 0.338

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

3/20 13.7G 1.043 0.8968 0.9592 56 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.722 0.506 0.581 0.344

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

4/20 13.7G 1.082 0.8714 0.9542 57 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.804 0.503 0.589 0.311

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

5/20 13.7G 1.134 0.891 0.9604 44 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.652 0.469 0.485 0.236

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

6/20 13.7G 1.134 0.8498 0.9638 44 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.701 0.489 0.509 0.242

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

7/20 13.7G 1.152 0.8197 0.9519 49 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.759 0.485 0.549 0.254

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

8/20 13.7G 1.111 0.7813 0.9402 36 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.705 0.499 0.551 0.307

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

9/20 13.7G 1.106 0.7623 0.9441 44 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.722 0.521 0.569 0.308

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

10/20 13.7G 1.073 0.7442 0.9144 50 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.725 0.512 0.57 0.314

Closing dataloader mosaic

albumentations: Blur(p=0.01, blur_limit=(3, 7)), MedianBlur(p=0.01, blur_limit=(3, 7)), ToGray(p=0.01), CLAHE(p=0.01, clip_limit=(1, 4.0), tile_grid_size=(8, 8))

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

11/20 13.7G 1.083 0.7146 0.9452 24 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.646 0.465 0.497 0.286

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

12/20 13.7G 1.083 0.7343 0.9433 27 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.771 0.486 0.567 0.32

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

13/20 13.7G 1.071 0.6758 0.9452 26 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.76 0.504 0.585 0.339

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

14/20 13.7G 1.04 0.6566 0.9366 26 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.747 0.545 0.6 0.343

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

15/20 13.7G 1.047 0.6338 0.9396 25 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.782 0.569 0.661 0.369

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

16/20 13.7G 1.008 0.6253 0.9315 26 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.777 0.605 0.669 0.39

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

17/20 13.7G 0.9794 0.5733 0.9216 27 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.747 0.606 0.67 0.394

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

18/20 13.7G 0.9408 0.5384 0.9087 25 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.798 0.619 0.708 0.416

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

19/20 13.7G 0.9406 0.5241 0.8998 26 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.841 0.647 0.719 0.43

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

20/20 13.7G 0.8838 0.5055 0.8898 29 640: 1

Class Images Instances Box(P R mAP50 m

all 683 693 0.864 0.659 0.756 0.454

20 epochs completed in 0.980 hours.

Optimizer stripped from runs/detect/train/weights/last.pt, 22.5MB

Optimizer stripped from runs/detect/train/weights/best.pt, 22.5MB

Validating runs/detect/train/weights/best.pt...

Model summary (fused): 168 layers, 11125971 parameters, 0 gradients, 28.4 GFLOPs

Class Images Instances Box(P R mAP50 m

all 683 693 0.864 0.659 0.756 0.454

Speed: 0.1ms pre-process, 3.6ms inference, 0.0ms loss, 1.0ms post-process per image

Results saved to runs/detect/train
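The parameter counts in the model summary above can be checked by hand. The sketch below assumes that each Conv entry is a bias-free convolution followed by batch normalization with a learnable scale and shift per channel; the log does not say this, but the logged numbers are consistent with it. The layer arguments are read as [c_in, c_out, kernel, stride], and the name conv_params is made up for illustration.

```python
def conv_params(c_in, c_out, kernel):
    """Parameters of a bias-free kernel x kernel convolution plus batch norm."""
    conv_weights = c_in * c_out * kernel * kernel
    batchnorm = 2 * c_out  # one learnable scale and one shift per channel
    return conv_weights + batchnorm


# (c_in, c_out, kernel) -> parameter count reported in the log for that Conv layer.
CONV_LAYERS = {
    (3, 32, 3): 928,
    (32, 64, 3): 18560,
    (64, 128, 3): 73984,
    (128, 256, 3): 295424,
    (256, 512, 3): 1180672,
    (128, 128, 3): 147712,
    (256, 256, 3): 590336,
}

# Parameter counts of layers 0 to 22, copied from the log.
LAYER_PARAMS = [
    928, 18560, 29056, 73984, 197632, 295424, 788480, 1180672,
    1838080, 656896, 0, 0, 591360, 0, 0, 148224,
    147712, 0, 493056, 590336, 0, 1969152, 2116435,
]

for shape, logged in CONV_LAYERS.items():
    assert conv_params(*shape) == logged, shape

print(sum(LAYER_PARAMS))  # 11135987, the total in the "Model summary" line
```

The Upsample and Concat layers contribute no parameters, which is why they appear as 0 in the list.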

When training finishes, the best.pt and last.pt weights are saved under ./runs/detect/train/weights.
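A quick way to confirm that both weight files were written is sketched below. It assumes the run directory runs/detect/train shown in the log; find_weights is a hypothetical helper name.

```python
from pathlib import Path


def find_weights(run_dir="runs/detect/train"):
    """Map 'best.pt' and 'last.pt' to their paths if they exist under run_dir/weights."""
    weights_dir = Path(run_dir) / "weights"
    found = {}
    for name in ("best.pt", "last.pt"):
        candidate = weights_dir / name
        if candidate.is_file():
            found[name] = candidate
    return found


for name, path in find_weights().items():
    print(f"{name}: {path} ({path.stat().st_size / 1e6:.1f} MB)")
```

If the run was saved to a different directory, pass that directory as run_dir.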

yolo detect val model=./runs/detect/train/weights/best.pt

yolo detect predict model=./runs/detect/train/weights/best.pt source="football.png" # predict with custom model
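The same train, validate and predict steps can also be driven from Python through the YOLO class that the ultralytics package exposes. The following is a sketch rather than the article's own method: the argument values mirror the CLI commands used in this article, run_pipeline and train_kwargs are illustrative names, and argument handling may differ between package versions.

```python
def train_kwargs():
    """Training arguments mirroring the CLI command used in this article."""
    return {
        "data": "football.yaml",
        "epochs": 20,
        "imgsz": 640,
        "device": "0,1",  # two GPUs, as in the CLI command
        "batch": 128,
    }


def run_pipeline(weights="yolov8s.pt", image="football.png"):
    """Train, validate, then predict on one image. Requires ultralytics."""
    # Imported here so train_kwargs() can be inspected without the package.
    from ultralytics import YOLO

    model = YOLO(weights)
    model.train(**train_kwargs())

    # Path assumed from the training log above.
    trained = YOLO("runs/detect/train/weights/best.pt")
    metrics = trained.val()
    results = trained.predict(source=image, save=True)
    return metrics, results
```

Calling run_pipeline() starts a full training run, so on a machine with a single GPU adjust the device and batch values in train_kwargs first.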

- Link: https://download.csdn.net/download/FriendshipTang/87354858

