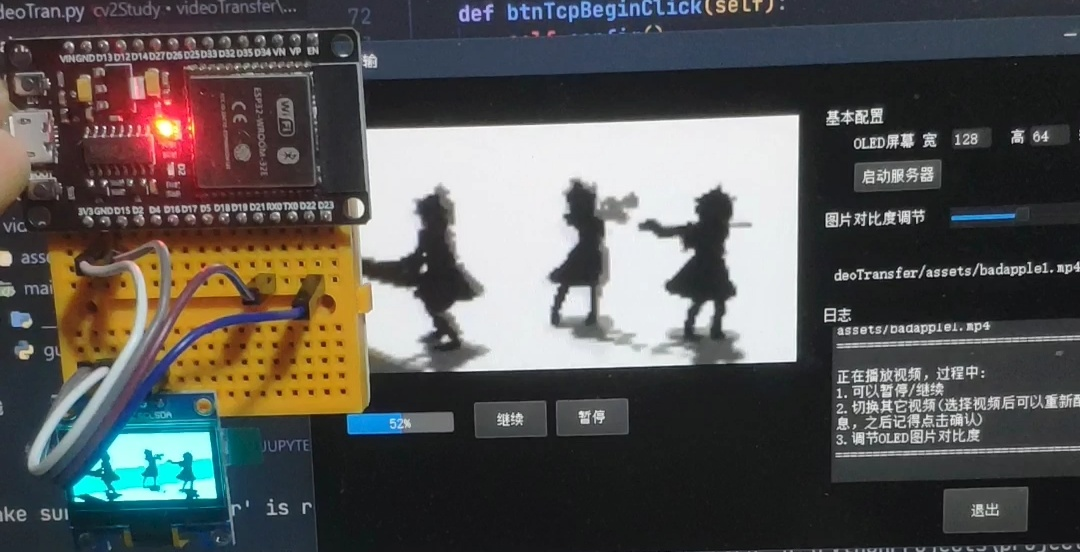

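With an ESP32 programmed in Arduino, the u8g2 library makes it easy to display images on an OLED. Playing a video therefore only requires splitting it into individual frames and displaying them in a loop.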

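However, there are a few problems:

The video is too large to fit in the ESP32's flash. What can be done?

Answer: keep the video on the PC and send it to the ESP32 one frame at a time, so that the ESP32 only ever has to hold a single frame. There are two ways to transfer the frames:

They can be sent over a serial port or over a socket connection. (In both cases the sender can be written in Python.)

How is an image converted into a format that u8g2 can display?

Normally, to display an image with u8g2, the image is first converted with the PCtoLCD2002 tool, using the options listed further down (positive code, row-by-row, reversed bit order). To stream video, this conversion algorithm has to be re-implemented in Python.

Overall flow: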

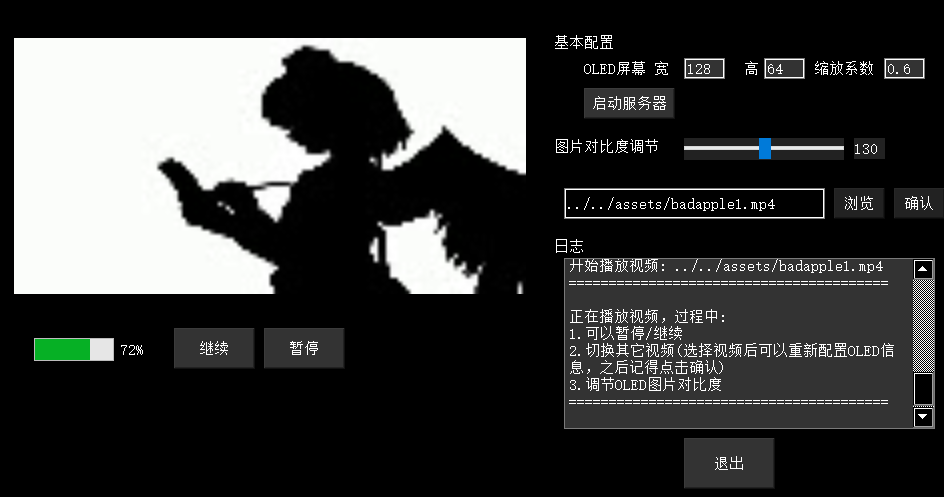

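- The PC runs Python code that reads the video frame by frame, resizes each frame to a suitable size, converts it with the image conversion algorithm into the data format u8g2 expects, and finally sends the data to the ESP32 over TCP.

- When the ESP32 receives the data, it stores it in the img buffer and displays it with u8g2.drawXBM(x, y, w, h, img).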

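The image conversion algorithm is implemented below. (Only the "positive code" (阳码), "row-by-row" (逐行式), "reversed bit order" (逆向) combination of the PCtoLCD options is supported.)

Positive vs. negative code: an OLED is made up of many small LEDs, each of which is either lit or unlit, so it can show only two colors.

With positive code, white pixels light the LED and black pixels leave it off. Negative code is the opposite: black pixels light the LED and white pixels leave it off.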
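The polarity and bit-order rules above can be illustrated on a single byte. This is a minimal sketch, not the article's converter: `pack_byte` is a hypothetical helper that puts the leftmost pixel in bit 0 (the effect of the "reversed" option) and, unlike the function below, pads missing pixels with 0.

```python
def pack_byte(pixels, positive=True):
    """Pack up to 8 pixels (truthy = white) into one byte, leftmost pixel in bit 0."""
    value = 0
    for bit, white in enumerate(pixels[:8]):
        # Positive code lights white pixels; negative code lights black pixels.
        lit = bool(white) if positive else not white
        if lit:
            value |= 1 << bit
    return value


row = [1, 1, 0, 0, 0, 0, 0, 1]              # white, white, black x5, white
print(hex(pack_byte(row)))                  # 0x83
print(hex(pack_byte(row, positive=False)))  # 0x7c
```

The same eight pixels give complementary bytes under the two polarities, which is why sender and display code must agree on one of them.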

    import cv2


    def getU8g2Img(img, newW=0, scale=1) -> list:
        '''
        Return the image converted to u8g2 byte codes (as hex strings).

        Parameters:
        - img: input image (cv2 format, BGR)
        - newW: target image width
        - scale: factor by which the image is enlarged (or shrunk)

        Note: set only one of newW and scale.
        '''
        imgGrey = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
        h, w = imgGrey.shape
        if scale != 1:
            imgGrey = cv2.resize(imgGrey, dsize=None, fx=scale, fy=scale)
        elif newW != 0:
            imgGrey = cv2.resize(imgGrey, dsize=None, fx=newW/w, fy=newW/w)
        h, w = imgGrey.shape
        # threshold() returns the threshold value and the binarized image
        ret, imgBin = cv2.threshold(imgGrey, 200, 255, cv2.THRESH_BINARY)
        print(f"Final image width={w} height={h}")
        resultList = []
        for i in range(h):
            tmp = w
            k = 0
            while True:
                rowCode = ''
                for j in range(k, min(k+8, tmp)):
                    # Negative code: black is 1, white (255) is 0
                    # rowCode += ('0' if imgBin[i][j] > 100 else '1')
                    # Positive code: black is 0 (unlit), white is 1 (lit)
                    rowCode += ('1' if imgBin[i][j] > 100 else '0')
                if len(rowCode) < 8:
                    # Pad the last byte of a row when the width is not a multiple of 8
                    # rowCode += ('0' * (8-len(rowCode)))  # negative code
                    rowCode += ('1' * (8-len(rowCode)))  # positive code
                rowCode = rowCode[::-1]  # reverse the bits: PCtoLCD2002 "reversed" option
                k += 8
                resultList.append('0x'+(f'{int(rowCode, 2):0>2x}').upper())
                if k >= tmp:
                    break
        return resultList
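A quick way to check the converter's output without an OLED is to unpack the bytes back into text. The sketch below assumes the layout described above (row-by-row, leftmost pixel in bit 0, each row padded to a whole byte); `xbm_to_ascii` and the sample codes are hypothetical and not part of the original program.

```python
def xbm_to_ascii(data, width, height):
    """Render row-major 1-bit data (bit 0 = leftmost pixel) as rows of '#' and '.'."""
    bytes_per_row = (width + 7) // 8
    lines = []
    for y in range(height):
        row = data[y * bytes_per_row:(y + 1) * bytes_per_row]
        chars = []
        for x in range(width):
            bit = (row[x // 8] >> (x % 8)) & 1
            chars.append('#' if bit else '.')
        lines.append(''.join(chars))
    return lines


# getU8g2Img returns strings such as '0x83'; int(s, 16) turns them back into numbers.
codes = ['0x83', '0x01', '0xFF', '0x00']   # made-up 10x2 image, 2 bytes per row
data = bytes(int(c, 16) for c in codes)
for line in xbm_to_ascii(data, width=10, height=2):
    print(line)
# ##.....##.
# ########..
```

Bits beyond the image width (the padding in the last byte of each row) are simply ignored when unpacking.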

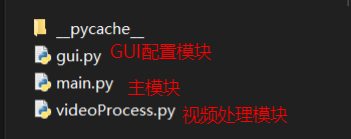

The implementation is split into three modules.

gui.py

    # -*- coding: utf-8 -*-

    # Form implementation generated from reading ui file 'gui.ui'
    #
    # Created by: PyQt5 UI code generator 5.15.0
    #
    # WARNING: Any manual changes made to this file will be lost when pyuic5 is
    # run again. Do not edit this file unless you know what you are doing.

    from PyQt5 import QtCore, QtGui, QtWidgets


    class Ui_Form(object):
        def setupUi(self, Form):
            Form.setObjectName("Form")
            Form.resize(955, 515)
            Form.setStyleSheet("background: rgb(0, 0, 0);\n"
    "color: #fff;")
            self.btnInputVideo = QtWidgets.QPushButton(Form)
            self.btnInputVideo.setGeometry(QtCore.QRect(840, 200, 51, 31))
            self.btnInputVideo.setStyleSheet("color:#fff;background: #222;")
            self.btnInputVideo.setObjectName("btnInputVideo")
            self.editVideoInput = QtWidgets.QLineEdit(Form)
            self.editVideoInput.setGeometry(QtCore.QRect(570, 200, 261, 31))
            self.editVideoInput.setStyleSheet("color:#fff;")
            self.editVideoInput.setInputMask("")
            self.editVideoInput.setText("")
            self.editVideoInput.setMaxLength(32767)
            self.editVideoInput.setFrame(True)
            self.editVideoInput.setCursorPosition(0)
            self.editVideoInput.setObjectName("editVideoInput")
            self.imgLabel = QtWidgets.QLabel(Form)
            self.imgLabel.setGeometry(QtCore.QRect(20, 50, 512, 256))
            self.imgLabel.setStyleSheet("color:#aaa;background: #111;")
            self.imgLabel.setOpenExternalLinks(False)
            self.imgLabel.setTextInteractionFlags(QtCore.Qt.LinksAccessibleByMouse)
            self.imgLabel.setObjectName("imgLabel")
            self.btnExit = QtWidgets.QPushButton(Form)
            self.btnExit.setGeometry(QtCore.QRect(690, 450, 91, 51))
            self.btnExit.setObjectName("btnExit")
            self.btnPause = QtWidgets.QPushButton(Form)
            self.btnPause.setGeometry(QtCore.QRect(270, 340, 81, 41))
            self.btnPause.setStyleSheet("color:#fff;background: #333;")
            self.btnPause.setObjectName("btnPause")
            self.btnResume = QtWidgets.QPushButton(Form)
            self.btnResume.setGeometry(QtCore.QRect(180, 340, 81, 41))
            self.btnResume.setStyleSheet("color:#fff;background: #333;")
            self.btnResume.setObjectName("btnResume")
            self.btnConfirm = QtWidgets.QPushButton(Form)
            self.btnConfirm.setGeometry(QtCore.QRect(900, 200, 51, 31))
            self.btnConfirm.setStyleSheet("color:#fff;background: #222;")
            self.btnConfirm.setObjectName("btnConfirm")
            self.btnTcpBegin = QtWidgets.QPushButton(Form)
            self.btnTcpBegin.setGeometry(QtCore.QRect(590, 100, 91, 31))
            self.btnTcpBegin.setStyleSheet("color:#fff;background: #333;")
            self.btnTcpBegin.setObjectName("btnTcpBegin")
            self.textLog = QtWidgets.QTextBrowser(Form)
            self.textLog.setGeometry(QtCore.QRect(570, 270, 371, 171))
            self.textLog.setStyleSheet("color:#fff;background: #333;")
            self.textLog.setLineWidth(1)
            self.textLog.setObjectName("textLog")
            self.progressBar = QtWidgets.QProgressBar(Form)
            self.progressBar.setGeometry(QtCore.QRect(40, 350, 118, 23))
            self.progressBar.setStyleSheet("color:#fff;background: #666;")
            self.progressBar.setProperty("value", 11)
            self.progressBar.setTextVisible(True)
            self.progressBar.setObjectName("progressBar")
            self.label = QtWidgets.QLabel(Form)
            self.label.setGeometry(QtCore.QRect(560, 40, 81, 31))
            self.label.setStyleSheet("color:#fff;")
            self.label.setFrameShadow(QtWidgets.QFrame.Plain)
            self.label.setTextFormat(QtCore.Qt.RichText)
            self.label.setObjectName("label")
            self.lineEditW = QtWidgets.QLineEdit(Form)
            self.lineEditW.setGeometry(QtCore.QRect(690, 70, 41, 21))
            self.lineEditW.setStyleSheet("color:#fff;background: #333;")
            self.lineEditW.setObjectName("lineEditW")
            self.label_2 = QtWidgets.QLabel(Form)
            self.label_2.setGeometry(QtCore.QRect(590, 70, 91, 21))
            self.label_2.setStyleSheet("color:#fff;")
            self.label_2.setObjectName("label_2")
            self.label_3 = QtWidgets.QLabel(Form)
            self.label_3.setGeometry(QtCore.QRect(750, 70, 21, 21))
            self.label_3.setStyleSheet("color:#fff;")
            self.label_3.setObjectName("label_3")
            self.lineEditH = QtWidgets.QLineEdit(Form)
            self.lineEditH.setGeometry(QtCore.QRect(770, 70, 41, 21))
            self.lineEditH.setStyleSheet("color:#fff;background: #333;")
            self.lineEditH.setObjectName("lineEditH")
            self.label_4 = QtWidgets.QLabel(Form)
            self.label_4.setGeometry(QtCore.QRect(820, 70, 71, 21))
            self.label_4.setStyleSheet("color:#fff;")
            self.label_4.setObjectName("label_4")
            self.lineEditScale = QtWidgets.QLineEdit(Form)
            self.lineEditScale.setGeometry(QtCore.QRect(890, 70, 41, 21))
            self.lineEditScale.setStyleSheet("color:#fff;background: #333;")
            self.lineEditScale.setObjectName("lineEditScale")
            self.label_5 = QtWidgets.QLabel(Form)
            self.label_5.setGeometry(QtCore.QRect(560, 250, 41, 16))
            self.label_5.setStyleSheet("color:#fff;")
            self.label_5.setObjectName("label_5")
            self.sliderThresh = QtWidgets.QSlider(Form)
            self.sliderThresh.setEnabled(True)
            self.sliderThresh.setGeometry(QtCore.QRect(690, 150, 160, 22))
            self.sliderThresh.setToolTip("")
            self.sliderThresh.setStyleSheet("color:#f00;background: #222;")
            self.sliderThresh.setMaximum(255)
            self.sliderThresh.setProperty("value", 119)
            self.sliderThresh.setSliderPosition(119)
            self.sliderThresh.setTracking(True)
            self.sliderThresh.setOrientation(QtCore.Qt.Horizontal)
            self.sliderThresh.setTickPosition(QtWidgets.QSlider.NoTicks)
            self.sliderThresh.setTickInterval(10)
            self.sliderThresh.setObjectName("sliderThresh")
            self.label_6 = QtWidgets.QLabel(Form)
            self.label_6.setGeometry(QtCore.QRect(560, 150, 121, 16))
            self.label_6.setStyleSheet("color:#fff;")
            self.label_6.setObjectName("label_6")
            self.labelThresh = QtWidgets.QLabel(Form)
            self.labelThresh.setGeometry(QtCore.QRect(860, 150, 31, 21))
            self.labelThresh.setStyleSheet("background: #222;\n"
    "")
            self.labelThresh.setObjectName("labelThresh")

            self.retranslateUi(Form)
            self.btnExit.clicked.connect(Form.btnExitClick)
            self.btnResume.clicked.connect(Form.btnResumeClick)
            self.btnPause.clicked.connect(Form.btnPauseClick)
            self.btnTcpBegin.clicked.connect(Form.btnTcpBeginClick)
            self.btnInputVideo.clicked.connect(Form.btnInputVideoClick)
            self.btnConfirm.clicked.connect(Form.btnConfirmClick)
            QtCore.QMetaObject.connectSlotsByName(Form)

        def retranslateUi(self, Form):
            _translate = QtCore.QCoreApplication.translate
            Form.setWindowTitle(_translate("Form", "Video streaming"))
            self.btnInputVideo.setText(_translate("Form", "Browse"))
            self.editVideoInput.setPlaceholderText(_translate("Form", "Enter the path of a GIF or video"))
            self.imgLabel.setText(_translate("Form", "imgLabel"))
            self.btnExit.setStyleSheet(_translate("Form", "color:#fff;background: #333;"))
            self.btnExit.setText(_translate("Form", "Exit"))
            self.btnPause.setText(_translate("Form", "Pause"))
            self.btnResume.setText(_translate("Form", "Resume"))
            self.btnConfirm.setText(_translate("Form", "Confirm"))
            self.btnTcpBegin.setText(_translate("Form", "Start server"))
            self.label.setText(_translate("Form", "Basic settings"))
            self.lineEditW.setText(_translate("Form", "128"))
            self.label_2.setText(_translate("Form", "OLED width"))
            self.label_3.setText(_translate("Form", "H"))
            self.lineEditH.setText(_translate("Form", "64"))
            self.label_4.setText(_translate("Form", "Scale"))
            self.lineEditScale.setText(_translate("Form", "1"))
            self.label_5.setText(_translate("Form", "Log"))
            self.label_6.setText(_translate("Form", "Image contrast"))
            self.labelThresh.setText(_translate("Form", "0"))

videoProcess.py

    import cv2


    # Read one frame; video is a cv2.VideoCapture("xxx/xxx.mp4") object
    def getOneFrame(video):
        ret, frame = video.read()
        if ret:
            return (True, frame)
        return (False, 0)


    # Resize the image
    def frameResize(frame, wid, hei):
        frame = cv2.resize(frame, dsize=(wid, hei))
        return frame


    def getImgModeList(frame, thresh=127) -> list:
        '''
        Return the frame converted to u8g2 byte codes (as integers 0..255).

        Parameters:
        - frame: one video frame, i.e. an image in cv2 format (BGR)
        - thresh: threshold used to binarize the grayscale image
        '''
        imgGrey = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
        # threshold() returns the threshold value and the binarized image
        ret, imgResult = cv2.threshold(imgGrey, thresh, 255, cv2.THRESH_BINARY)
        h, w = imgResult.shape
        resultList = []
        for i in range(h):
            tmp = w
            k = 0
            while True:
                rowCode = ''
                for j in range(k, min(k+8, tmp)):
                    # Negative code: black is 1, white (255) is 0
                    # rowCode += ('0' if imgResult[i][j] > 200 else '1')
                    # Positive code: black is 0 (unlit), white is 1 (lit)
                    rowCode += ('1' if imgResult[i][j] > 200 else '0')
                if len(rowCode) < 8:
                    # Pad the last byte of a row when the width is not a multiple of 8
                    # rowCode += ('0' * (8-len(rowCode)))  # negative code
                    rowCode += ('1' * (8-len(rowCode)))  # positive code
                rowCode = rowCode[::-1]  # reverse the bits: PCtoLCD2002 "reversed" option
                k += 8
                resultList.append(int(rowCode, 2))
                if k >= tmp:
                    break
        return resultList
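Because each row is padded to a whole byte, the length of the list returned by getImgModeList follows directly from the frame size. The small sketch below works this out; `frame_bytes` is a hypothetical helper name used only for illustration.

```python
def frame_bytes(width, height):
    """Bytes in one packed 1-bit frame: every row is padded to a whole byte."""
    return ((width + 7) // 8) * height


print(frame_bytes(128, 64))   # 1024 (full 128x64 OLED)
print(frame_bytes(64, 32))    # 256  (scale 0.5)
print(frame_bytes(100, 64))   # 832  (13 bytes per row)
```

A full 128x64 frame is 1024 bytes, so it fits comfortably in the 4000-byte buffers declared in the ESP32 sketch further down.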

main.py

    from PyQt5 import QtWidgets, QtGui, QtCore
    from gui import Ui_Form
    import sys
    import time
    import cv2
    import threading
    import socket
    from queue import Queue
    import videoProcess as vp

    # Configuration
    oledWidth, oledHeight = 128, 64
    imgToOledScale = 0.5  # scale factor of the image sent to the OLED

    # Globals
    modesQue = Queue(5000)
    imgToQtQue = Queue(5000)
    isConnected = False
    imgToOled = None


    class MyUi(QtWidgets.QWidget, Ui_Form):
        def __init__(self) -> None:
            super().__init__()
            self.setupUi(self)
            # QtWidgets.QApplication.setStyle(QtWidgets.QStyleFactory.create('windows')) # Windows Fusion
            self.imgLabelWidth = self.imgLabel.width()
            self.imgLabelHeight = self.imgLabel.height()
            self.thresh = 130
            self.timer1 = QtCore.QTimer()  # reads and converts frames
            self.timer2 = QtCore.QTimer()  # shows frames in the GUI
            self.timer3 = QtCore.QTimer()  # refreshes threshold and progress bar
            self.timer1.timeout.connect(self.getModesQue)
            self.timer2.timeout.connect(self.showImgToQt)
            self.timer3.timeout.connect(self.updateRegularly)
            self.editVideoInput.setText('../../assets/badapple1.mp4')
            # self.labelThresh.setText(str(self.thresh))
            self.sliderThresh.setValue(self.thresh)
            self.videoPath = ''
            self.pauseFlag = False
            self.exitFlag = False
            self.frameCount = 0
            self.curFrame = 0
            self.progressValue = 0
            self.timer3.start(50)

        def btnInputVideoClick(self):
            self.videoPath, _ = QtWidgets.QFileDialog.getOpenFileName(self, 'Open video', r'../../assets')
            self.editVideoInput.setText(self.videoPath)

        def btnConfirmClick(self):
            if not isConnected:
                self.printLog("Client is not connected yet...")
                return
            self.config()
            self.curFrame = 0  # restart the progress count for the new video
            self.videoPath = self.editVideoInput.text()
            self.sliderThresh.setValue(self.thresh)
            self.printLog(f"Start playing video: {self.videoPath}")
            self.printLog(
                'Video is playing. While it plays you can:\n' +
                '1. Pause/resume\n' +
                '2. Switch to another video (after choosing it you can reconfigure the OLED settings, then click Confirm)\n' +
                '3. Adjust the OLED image contrast')
            imgToQtQue.queue.clear()
            modesQue.queue.clear()
            self.video = cv2.VideoCapture(self.videoPath)
            if self.video.isOpened():
                self.frameCount = self.video.get(cv2.CAP_PROP_FRAME_COUNT)
            self.timer1.start(20)
            self.timer2.start(45)

        def btnTcpBeginClick(self):
            self.config()
            self.sockThread = SocketThread()
            self.sockThread.start()
            self.printLog("Server started. Choose the video or GIF to play, then wait for the client to connect")

        def btnPauseClick(self):
            if not isConnected:
                return
            self.pauseFlag = True
            self.sockThread.pause()
            self.printLog("Paused")

        def btnResumeClick(self):
            if not isConnected:
                return
            self.pauseFlag = False
            self.sockThread.resume()
            self.printLog("Resumed")

        def btnExitClick(self):
            try:
                self.sockThread.stop()
            except AttributeError:
                pass  # the server was never started
            self.close()

        def getModesQue(self):
            if self.video.isOpened():
                ret, frame = vp.getOneFrame(self.video)
                if ret:
                    imgToQtShow = vp.frameResize(
                        frame, self.imgLabelWidth, self.imgLabelHeight)
                    imgToQtQue.put(imgToQtShow)
                    imgToOLED1 = vp.frameResize(frame, int(
                        oledWidth*imgToOledScale), int(oledHeight*imgToOledScale))
                    modesQue.put(vp.getImgModeList(imgToOLED1, thresh=self.thresh))
                else:
                    self.video.release()

        def showImgToQt(self):
            global imgToOled
            if not imgToQtQue.empty() and (not self.pauseFlag):
                self.curFrame += 1
                if self.frameCount:  # avoid dividing by zero if the frame count is unknown
                    self.progressValue = int(self.curFrame/self.frameCount*100)
                if not modesQue.empty():
                    imgToOled = modesQue.get()
                shrink = cv2.cvtColor(imgToQtQue.get(), cv2.COLOR_BGR2RGB)
                # Convert the cv image into a Qt image
                qtImg = QtGui.QImage(shrink.data,  # data source
                                     shrink.shape[1],  # width
                                     shrink.shape[0],  # height
                                     shrink.shape[1] * 3,  # bytes per line
                                     QtGui.QImage.Format_RGB888)
                # Show the image in the label widget
                self.imgLabel.setPixmap(QtGui.QPixmap(qtImg))
                # self.imgLabel.show()

        def config(self):
            global oledHeight, oledWidth, imgToOledScale
            oledWidth = int(self.lineEditW.text())
            oledHeight = int(self.lineEditH.text())
            imgToOledScale = float(self.lineEditScale.text())
            self.printLog(f"OLED width: {oledWidth} height: {oledHeight} scale: {imgToOledScale}")

        def printLog(self, text):
            self.textLog.append(text)  # append one entry to the text box
            self.textLog.append('='*40+'\n')
            self.textLog.ensureCursorVisible()

        def updateRegularly(self):
            self.thresh = self.sliderThresh.value()
            self.progressBar.setValue(self.progressValue)
            self.labelThresh.setText(str(self.thresh))


    class SocketThread(threading.Thread):
        def __init__(self, host='', port=8762, bufferSize=1024):
            super().__init__()
            self.__e = threading.Event()   # cleared by stop()
            self.__e.set()
            self.__e2 = threading.Event()  # cleared by pause(), set by resume()
            self.__e2.set()
            self.host = host
            self.port = port
            self.bufferSize = bufferSize

        def run(self):
            global isConnected
            # Note: myui.printLog is called from this worker thread, as in the original
            # design; Qt widgets are normally meant to be updated from the GUI thread.
            with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
                # Bind the server address and port
                s.bind((self.host, self.port))
                # Start listening
                s.listen(4)
                myui.printLog(f'Server started on port {self.port}, waiting for a client')
                # Wait for a client connection request
                conn, addr = s.accept()
                isConnected = True
                myui.printLog('Client {} connected, click [Confirm] to start playing the video'.format(addr))
                with conn:
                    while self.__e.is_set():
                        if modesQue.empty():
                            time.sleep(0.01)  # avoid spinning at 100% CPU while idle
                            continue
                        self.__e2.wait()
                        # Receive the request
                        dataGet = conn.recv(self.bufferSize).decode('utf-8').strip()
                        # print('Received: {}'.format(dataGet))
                        if dataGet == 'S':
                            # 'S': reply with the frame width and height, one byte each
                            w = int(oledWidth * imgToOledScale)
                            h = int(oledHeight * imgToOledScale)
                            dataSend = w.to_bytes(
                                1, byteorder='little') + h.to_bytes(1, byteorder='little')
                            conn.sendall(dataSend)
                        if dataGet == 'D' and imgToOled is not None:
                            # 'D': reply with the current frame; each list element is 0..255
                            conn.sendall(bytes(imgToOled))
                        if dataGet == 'N':
                            myui.printLog('Received request {}: the client asked the server to shut down'.format(dataGet))
                            break
                        if dataGet == '':
                            myui.printLog('Client error, connection lost')
                            break
                print("Closing connection")

        def stop(self):
            self.__e.clear()

        def pause(self):
            self.__e2.clear()

        def resume(self):
            self.__e2.set()


    if __name__ == '__main__':
        app = QtWidgets.QApplication(sys.argv)
        myui = MyUi()
        myui.printLog('Note:\n1. Configure the OLED settings first, then click [Start server]\n2. Choose the video to play\n3. Wait for the client to connect, until "Client xxx connected" appears\n4. Click [Confirm] to start playing the video\n5. You can switch videos or pause at any time during playback')
        myui.show()
        sys.exit(app.exec())
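The request/response exchange used by SocketThread ('S' returns two bytes of width and height, 'D' returns one packed frame) can be exercised from Python without an ESP32. The following is a sketch under assumptions: `recv_exact`, `fetch_frame` and `fake_server` are hypothetical names, the fake server only stands in for main.py during a local test, and the frame size is assumed to be ceil(w/8)*h bytes. It also shows why a receiver should loop until a whole frame has arrived, since TCP may deliver a frame in several pieces.

```python
import socket
import threading


def recv_exact(sock, n):
    """Read exactly n bytes from a stream socket, or raise if the peer closes early."""
    data = bytearray()
    while len(data) < n:
        part = sock.recv(n - len(data))
        if not part:
            raise ConnectionError("socket closed before all bytes arrived")
        data.extend(part)
    return bytes(data)


def fetch_frame(sock):
    """Run one S/D exchange and return (width, height, frame_bytes)."""
    sock.sendall(b'S')
    w, h = recv_exact(sock, 2)           # two single-byte values
    sock.sendall(b'D')
    frame = recv_exact(sock, ((w + 7) // 8) * h)
    return w, h, frame


def fake_server(sock, w, h, payload):
    """Hypothetical stand-in for SocketThread, for local testing only."""
    assert sock.recv(1) == b'S'
    sock.sendall(bytes([w, h]))
    assert sock.recv(1) == b'D'
    sock.sendall(payload[:10])           # send in two pieces to imitate
    sock.sendall(payload[10:])           # a frame split across reads


client, server = socket.socketpair()
payload = bytes(range(256))              # 64x32 frame: 8 bytes/row * 32 rows
t = threading.Thread(target=fake_server, args=(server, 64, 32, payload))
t.start()
w, h, frame = fetch_frame(client)
t.join()
client.close()
server.close()
print(w, h, len(frame))                  # 64 32 256
```

Against the real server, the same `fetch_frame` call could be pointed at a socket connected to port 8762, after a video has been started with the Confirm button.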

The ESP32 side is developed with Arduino and the u8g2 library.

    #include <WiFi.h>
    #include "U8g2lib.h"

    // Wiring: SCL=19, SDA=18
    U8G2_SSD1306_128X64_NONAME_F_HW_I2C u8g2(U8G2_R0, /* reset=*/U8X8_PIN_NONE, /* clock=*/19, /* data=*/18); // ESP32 Thing, HW I2C with pin remapping
    //U8G2_SSD1306_128X64_NONAME_F_HW_I2C u8g2(U8G2_R0, /* reset=*/ U8X8_PIN_NONE);

    const char *ssid = "ssid";
    const char *password = "xxxx";
    const IPAddress serverIP(192,168,43,157); // address of the server to connect to
    uint16_t serverPort = 8762;               // server port

    uint8_t w, h;             // image width and height
    uint8_t img[4000] = {0};  // frame being displayed (writable, so kept in RAM rather than PROGMEM)
    uint8_t buff[4000] = {0}; // receive buffer

    WiFiClient client; // client object used to connect to the server

    void setup()
    {
      Serial.begin(115200);
      Serial.println();
      WiFi.mode(WIFI_STA);
      WiFi.setSleep(false); // disable Wi-Fi sleep in STA mode for faster response
      WiFi.begin(ssid, password);
      u8g2.begin();
      while (WiFi.status() != WL_CONNECTED)
      {
        delay(500);
        Serial.print(".");
      }
      Serial.println("Connected");
      Serial.print("IP Address:");
      Serial.println(WiFi.localIP());
    }

    uint8_t shape[2]; // width and height
    uint16_t read_count;

    // Show the image centered on the screen; w and h are the image width and height
    void showImg(uint8_t w, uint8_t h, uint8_t *img){
      uint8_t x, y;
      x = (128-w)/2;
      y = (64-h)/2;
      u8g2.clearBuffer();
      u8g2.drawXBM(x, y, w, h, img);
      u8g2.setFont(u8g2_font_ncenB14_tr);
      u8g2.sendBuffer();
    }

    void loop()
    {
      Serial.println("Trying to connect to the server");
      if (client.connect(serverIP, serverPort)) // try to reach the target address
      {
        Serial.println("Connected to the server");
        client.print("S"); // send S to request the frame width and height
        while(client.connected()){
          while(1)
          {
            if (client.available()) // data is available to read
            {
              // Read at most sizeof(shape) bytes so the 2-byte array cannot overflow
              read_count = client.read(shape, sizeof(shape));
              w = shape[0];
              h = shape[1];
              client.write("D"); // send D to request the image data (already in u8g2 format)
            }
            else continue;
            break;
          }
          while(1)
          {
            if(client.available()) // data is available to read
            {
              // Note: a single read() may return less than a full frame
              // if the frame arrives in several TCP segments
              read_count = client.read(buff, sizeof(buff));
              memcpy(img, buff, read_count); // copy the received bytes into img
              client.write("S");
            }
            else continue;
            showImg(w, h, img);
            memset(img,0,sizeof(img)); // clear img
            break;
          }
        }
      }
      else
      {
        Serial.println("Connection failed");
        client.stop(); // close the client
      }
      delay(500);
    }
