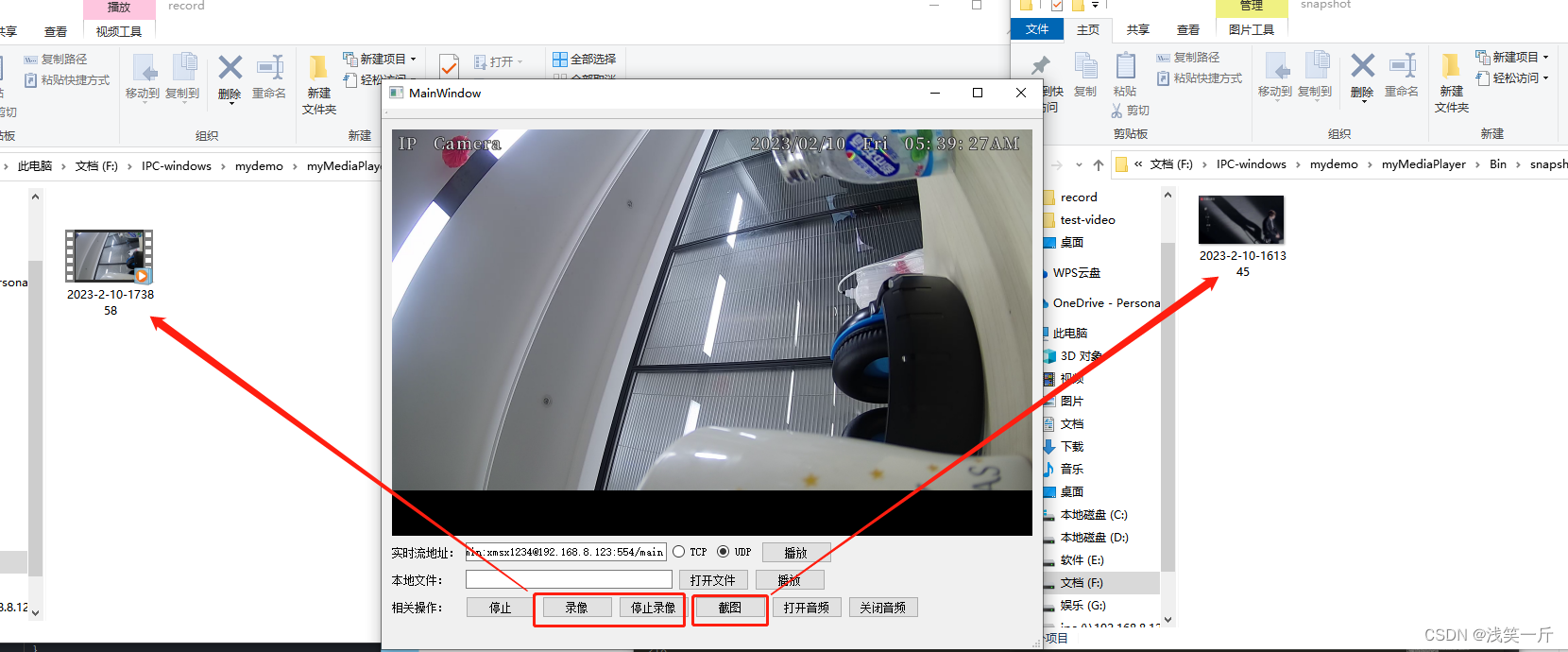

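This project uses Qt 5.8 (32-bit) and FFmpeg 5.1, a fairly recent release. It can pull a live stream over either TCP or UDP; as the live source I used the RTSP stream of a surveillance camera. Audio is played through QAudioOutput. Video is decoded by FFmpeg, converted from YUV to RGB, and then rendered in a QOpenGLWidget. The code is commented: you can read it in this post or download the demo project from the link at the end.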
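The YUV-to-RGB step mentioned above is done by sws_scale(). To make the per-pixel arithmetic concrete, here is a minimal floating-point sketch assuming BT.601 limited-range input (Y in 16..235, U and V centred on 128). It is not how swscale is implemented (swscale uses optimized fixed-point code and the stream's actual colour matrix), and `Bgra` / `yuvToBgra()` are hypothetical names. The field order B, G, R, A mirrors the byte order of AV_PIX_FMT_BGRA, the target format used in InitVideo().

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical pixel type: same byte order as AV_PIX_FMT_BGRA
struct Bgra { uint8_t b, g, r, a; };

// Clamp a channel value into 0..255 and round to the nearest integer
static uint8_t clampToByte(double v)
{
    return static_cast<uint8_t>(std::lround(std::min(255.0, std::max(0.0, v))));
}

// Hypothetical helper: one BT.601 limited-range YUV sample -> opaque BGRA pixel
Bgra yuvToBgra(uint8_t y, uint8_t u, uint8_t v)
{
    const double c = 1.164 * (y - 16);   // expand luma from 16..235 to 0..255
    const double d = u - 128;            // blue-difference chroma, centred on 0
    const double e = v - 128;            // red-difference chroma, centred on 0
    Bgra px;
    px.r = clampToByte(c + 1.596 * e);
    px.g = clampToByte(c - 0.392 * d - 0.813 * e);
    px.b = clampToByte(c + 2.017 * d);
    px.a = 255;                          // fully opaque
    return px;
}
```

With these coefficients, black (Y=16, U=V=128) maps to (0, 0, 0) and white (Y=235) to (255, 255, 255); results outside 0..255 are clipped.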

mainwindow.h

```cpp
#ifndef MAINWINDOW_H
#define MAINWINDOW_H

#include <QMainWindow>
#include "commondef.h"
#include "mediathread.h"
#include "ctopenglwidget.h"

namespace Ui {
class MainWindow;
}

class MainWindow : public QMainWindow
{
    Q_OBJECT

public:
    explicit MainWindow(QWidget *parent = 0);
    ~MainWindow();

    void Init();

private slots:
    void on_btn_play_clicked();
    void on_btn_open_clicked();
    void on_btn_play2_clicked();
    void on_btn_stop_clicked();
    void on_btn_record_clicked();
    void on_btn_snapshot_clicked();
    void on_btn_open_audio_clicked();
    void on_btn_close_audio_clicked();
    void on_btn_stop_record_clicked();

private:
    Ui::MainWindow *ui;
    MediaThread* m_pMediaThread = nullptr;
};

#endif // MAINWINDOW_H
```

mainwindow.cpp

```cpp
#include "mainwindow.h"
#include "ui_mainwindow.h"
#include <QMessageBox>
#include <QFileDialog>
#include "ctaudioplayer.h"

MainWindow::MainWindow(QWidget *parent) :
    QMainWindow(parent),
    ui(new Ui::MainWindow)
{
    ui->setupUi(this);
    Init();
}

MainWindow::~MainWindow()
{
    // Stop the worker before deleting it; ~MediaThread() releases the FFmpeg objects
    if(m_pMediaThread)
    {
        m_pMediaThread->stopThread();
        m_pMediaThread->wait();
        delete m_pMediaThread;
        m_pMediaThread = nullptr;
    }
    delete ui;
}

void MainWindow::Init()
{
    // Pull the live stream over UDP by default
    ui->radioButton_TCP->setChecked(false);
    ui->radioButton_UDP->setChecked(true);
}

// Play the live (RTSP) stream
void MainWindow::on_btn_play_clicked()
{
    QString sUrl = ui->lineEdit_Url->text();
    if(sUrl.isEmpty())
    {
        QMessageBox::critical(this, "myFFmpeg", "Error: the live stream URL must not be empty.");
        return;
    }

    if(nullptr == m_pMediaThread)
    {
        MY_DEBUG << "new MediaThread";
        m_pMediaThread = new MediaThread;
    }
    else if(m_pMediaThread->isRunning())
    {
        QMessageBox::critical(this, "myFFmpeg", "Error: click Stop to close the current video first.");
        return;
    }

    // UniqueConnection: clicking Play again after a failed Init() must not add a second connection
    connect(m_pMediaThread, SIGNAL(sig_emitImage(const QImage&)),
            ui->openGLWidget, SLOT(slot_showImage(const QImage&)), Qt::UniqueConnection);

    int nProtocol = ui->radioButton_TCP->isChecked() ? PROTOCOL_TCP : PROTOCOL_UDP;
    if(m_pMediaThread->Init(sUrl, nProtocol))
        m_pMediaThread->startThread();
}

// Choose a local video file
void MainWindow::on_btn_open_clicked()
{
    QString sFileName = QFileDialog::getOpenFileName(this, "Select a video file");
    if(sFileName.isEmpty())
    {
        QMessageBox::critical(this, "myFFmpeg", "Error: the file name must not be empty.");
        return;
    }
    ui->lineEdit_File->setText(sFileName);
}

// Play the selected local file
void MainWindow::on_btn_play2_clicked()
{
    QString sFileName = ui->lineEdit_File->text();
    if(sFileName.isEmpty())
    {
        QMessageBox::critical(this, "myFFmpeg", "Error: the file name must not be empty.");
        return;
    }

    if(nullptr == m_pMediaThread)
    {
        m_pMediaThread = new MediaThread;
    }
    else if(m_pMediaThread->isRunning())
    {
        QMessageBox::critical(this, "myFFmpeg", "Error: click Stop to close the current video first.");
        return;
    }

    connect(m_pMediaThread, SIGNAL(sig_emitImage(const QImage&)),
            ui->openGLWidget, SLOT(slot_showImage(const QImage&)), Qt::UniqueConnection);

    if(m_pMediaThread->Init(sFileName))
        m_pMediaThread->startThread();
}

void MainWindow::on_btn_stop_clicked()
{
    // Release FFmpeg only once, and only after the decode thread has finished with it
    if(m_pMediaThread && m_pMediaThread->isRunning())
    {
        disconnect(m_pMediaThread, SIGNAL(sig_emitImage(const QImage&)),
                   ui->openGLWidget, SLOT(slot_showImage(const QImage&)));
        m_pMediaThread->stopThread();  // run() leaves its loop
        m_pMediaThread->wait();        // run() has no event loop, so quit() is not needed
        m_pMediaThread->DeInit();      // now safe to free the FFmpeg contexts
    }
}

void MainWindow::on_btn_record_clicked()
{
    if(m_pMediaThread)
        m_pMediaThread->startRecord();
}

void MainWindow::on_btn_snapshot_clicked()
{
    if(m_pMediaThread)
        m_pMediaThread->Snapshot();
}

void MainWindow::on_btn_open_audio_clicked()
{
    ctAudioPlayer::getInstance().isPlay(true);
}

void MainWindow::on_btn_close_audio_clicked()
{
    ctAudioPlayer::getInstance().isPlay(false);
}

void MainWindow::on_btn_stop_record_clicked()
{
    if(m_pMediaThread)
        m_pMediaThread->stopRecord();
}
```
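on_btn_stop_clicked() relies on a specific order: clear the run flag, wait for run() to return, and only then free the FFmpeg objects with DeInit(). The sketch below shows the same ordering with std::thread and std::atomic<bool> standing in for QThread; the `Worker` class is hypothetical and only models the loop, not the decoding.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <thread>

// Hypothetical stand-in for MediaThread: a loop that runs until a flag is cleared
class Worker
{
public:
    void start()
    {
        m_bRun = true;                               // like startThread()
        m_thread = std::thread([this] {
            while (m_bRun)                           // re-checked on every iteration, like run()
            {
                ++m_nIterations;
                std::this_thread::sleep_for(std::chrono::milliseconds(1));
            }
        });
    }

    // Ask the loop to exit, then block until it has (stopThread() + wait())
    void stop()
    {
        m_bRun = false;
        if (m_thread.joinable())
            m_thread.join();
    }

    bool running() const { return m_thread.joinable(); }
    long iterations() const { return m_nIterations; }
    ~Worker() { stop(); }

private:
    std::atomic<bool> m_bRun{false};                 // written by one thread, read by another
    std::atomic<long> m_nIterations{0};
    std::thread m_thread;
};
```

Without the join (wait()) step, DeInit() could free the codec contexts while run() is still inside Decode(), which is the kind of crash this ordering prevents.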

mediathread.h

```cpp
#ifndef MEDIATHREAD_H
#define MEDIATHREAD_H

#include <QThread>
#include <QImage>
#include <atomic>
#include "ctffmpeg.h"
#include "commondef.h"
#include "mp4recorder.h"

// Size in bytes of the PCM scratch buffer used by run()
#define MAX_AUDIO_OUT_SIZE (8 * 1152)

class MediaThread : public QThread
{
    Q_OBJECT

public:
    MediaThread();
    ~MediaThread();

    bool Init(QString sUrl, int nProtocolType = PROTOCOL_UDP);
    void DeInit();
    void startThread();
    void stopThread();
    void setPause(bool bPause);
    void Snapshot();
    void startRecord();
    void stopRecord();

public:
    // run() waits until at least this many bytes are free in the audio output buffer
    int m_nMinAudioPlayerSize = 640;

private:
    void run() override;

signals:
    void sig_emitImage(const QImage&);

private:
    // Written by the GUI thread and read by run(), hence atomic
    std::atomic<bool> m_bRun{false};
    std::atomic<bool> m_bPause{false};
    std::atomic<bool> m_bRecord{false};
    ctFFmpeg* m_pFFmpeg = nullptr;
    mp4Recorder m_pMp4Recorder;
};

#endif // MEDIATHREAD_H
```

mediathread.cpp

```cpp
#include "mediathread.h"
#include "ctaudioplayer.h"
#include <QDate>
#include <QTime>
#include <QDir>

MediaThread::MediaThread()
{
}

MediaThread::~MediaThread()
{
    if(m_pFFmpeg)
    {
        m_pFFmpeg->DeInit();
        delete m_pFFmpeg;
        m_pFFmpeg = nullptr;
    }
}

bool MediaThread::Init(QString sUrl, int nProtocolType)
{
    if(nullptr == m_pFFmpeg)
    {
        MY_DEBUG << "new ctFFmpeg";
        m_pFFmpeg = new ctFFmpeg;
        // Forward every decoded frame from ctFFmpeg through this thread's own signal
        connect(m_pFFmpeg, SIGNAL(sig_getImage(const QImage&)),
                this, SIGNAL(sig_emitImage(const QImage&)));
    }
    if(m_pFFmpeg->Init(sUrl, nProtocolType) != 0)
    {
        MY_DEBUG << "FFmpeg Init error.";
        return false;
    }
    return true;
}

void MediaThread::DeInit()
{
    // Test the pointer before using it: DeInit() can run twice (e.g. Stop clicked twice)
    if(m_pFFmpeg)
    {
        m_pFFmpeg->DeInit();
        delete m_pFFmpeg;
        m_pFFmpeg = nullptr;
    }
    MY_DEBUG << "DeInit end";
}

void MediaThread::startThread()
{
    m_bRun = true;
    start();
}

void MediaThread::stopThread()
{
    m_bRun = false;
}

void MediaThread::setPause(bool bPause)
{
    m_bPause = bPause;
}

void MediaThread::Snapshot()
{
    if(m_pFFmpeg)
        m_pFFmpeg->Snapshot();
}

void MediaThread::startRecord()
{
    if(m_bRecord || !m_bRun || nullptr == m_pFFmpeg || nullptr == m_pFFmpeg->m_pAVFmtCxt)
        return;

    // File name: ./record/yyyy-MM-dd-hhmmss.mp4 (zero-padded, so names are unambiguous)
    QDir().mkpath("./record");
    QString sRecordPath = QString("./record/%1-%2.mp4")
            .arg(QDate::currentDate().toString("yyyy-MM-dd"))
            .arg(QTime::currentTime().toString("hhmmss"));
    MY_DEBUG << "sRecordPath:" << sRecordPath;
    m_bRecord = m_pMp4Recorder.Init(m_pFFmpeg->m_pAVFmtCxt, sRecordPath);
}

void MediaThread::stopRecord()
{
    if(m_bRecord)
    {
        MY_DEBUG << "stopRecord...";
        m_bRecord = false;          // stop run() from handing further packets to the recorder
        m_pMp4Recorder.DeInit();
    }
}
```
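Zero padding matters for the record and snapshot file names. With the unpadded `%5%6%7` hour/minute/second pattern of the original code, 01:23:45 and 12:03:45 both become `12345`. A plain C++ sketch of a padded `yyyy-MM-dd-hhmmss` name follows; the helper `makeTimestampName()` is hypothetical, and in the Qt code QDate/QTime::toString() does the same job.

```cpp
#include <cassert>
#include <cstdio>
#include <ctime>
#include <string>

// Hypothetical helper: "<dir>yyyy-MM-dd-hhmmss<ext>" from a broken-down time.
// Every field is zero-padded, so names are unambiguous and sort chronologically.
std::string makeTimestampName(const std::tm& t, const std::string& dir, const std::string& ext)
{
    char buf[32];
    std::snprintf(buf, sizeof(buf), "%04d-%02d-%02d-%02d%02d%02d",
                  t.tm_year + 1900, t.tm_mon + 1, t.tm_mday,   // tm_year counts from 1900, tm_mon from 0
                  t.tm_hour, t.tm_min, t.tm_sec);
    return dir + buf + ext;
}
```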

```cpp
void MediaThread::run()
{
    char audioOut[MAX_AUDIO_OUT_SIZE] = {0};
    while(m_bRun)
    {
        if(m_bPause)
        {
            msleep(100);
            continue;
        }

        // Back-pressure: wait until the audio output buffer has room
        if(m_pFFmpeg->m_bSupportAudioPlay)
        {
            int nFreeSize = ctAudioPlayer::getInstance().getFreeSize();
            if(nFreeSize < m_nMinAudioPlayerSize)
            {
                msleep(1);
                continue;
            }
        }

        AVPacket pkt = m_pFFmpeg->getPacket();
        if (pkt.size <= 0)
        {
            msleep(10);
            continue;
        }

        // Decode and play, routing packets by stream index
        if (pkt.stream_index == m_pFFmpeg->m_nAudioIndex)
        {
            if(m_pFFmpeg->m_bSupportAudioPlay && m_pFFmpeg->Decode(&pkt))
            {
                int nLen = m_pFFmpeg->getAudioFrame(audioOut);  // PCM of one audio frame
                if(nLen > 0)
                    ctAudioPlayer::getInstance().Write(audioOut, nLen);
            }
        }
        else if (pkt.stream_index == m_pFFmpeg->m_nVideoIndex)
        {
            // Only the video stream is recorded for now
            if(m_bRecord)
            {
                AVPacket* pPkt = av_packet_clone(&pkt);
                if(pPkt)
                {
                    m_pMp4Recorder.saveOneFrame(*pPkt);
                    av_packet_free(&pPkt);
                }
            }
            if(m_pFFmpeg->Decode(&pkt))
                m_pFFmpeg->getVideoFrame();
        }
        // Packets of any other stream (data, subtitles, ...) are simply dropped

        av_packet_unref(&pkt);
    }
    MY_DEBUG << "run end";
}
```
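run() throttles itself on the audio device: when fewer than m_nMinAudioPlayerSize bytes are free in the QAudioOutput buffer it sleeps instead of reading the next packet, so decoding proceeds at the playback rate. Below is a toy model of that back-pressure, assuming a buffer that is drained externally; `PlaybackBuffer` and `tryWriteChunk()` are hypothetical. The extra `chunk` check is an addition of this sketch: run() itself only compares against the minimum, and a nearly full output buffer may accept fewer bytes than offered.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical model of the audio output buffer: playback drains it, the decode loop fills it
struct PlaybackBuffer
{
    explicit PlaybackBuffer(std::size_t cap) : capacity(cap) {}
    std::size_t capacity;
    std::size_t used = 0;
    std::size_t bytesFree() const { return capacity - used; }
    void write(std::size_t n) { used += n; }                       // caller checks bytesFree() first
    void drain(std::size_t n) { used = n > used ? 0 : used - n; }  // playback consumed n bytes
};

// One loop iteration: write a decoded chunk only if enough space is free;
// otherwise skip (run() sleeps for 1 ms in that case)
bool tryWriteChunk(PlaybackBuffer& buf, std::size_t minFree, std::size_t chunk)
{
    if (buf.bytesFree() < minFree || buf.bytesFree() < chunk)
        return false;
    buf.write(chunk);
    return true;
}
```

Used with a 4096-byte buffer and 1024-byte chunks, only four writes succeed until playback drains some data, after which the loop can write again.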

ctffmpeg.h

```cpp
#ifndef CTFFMPEG_H
#define CTFFMPEG_H

#include <QObject>
#include <QMutex>
#include <QImage>
#include <atomic>

extern "C"
{
#include "libavcodec/avcodec.h"
#include "libavcodec/dxva2.h"
#include "libavutil/avstring.h"
#include "libavutil/mathematics.h"
#include "libavutil/pixdesc.h"
#include "libavutil/imgutils.h"
#include "libavutil/dict.h"
#include "libavutil/parseutils.h"
#include "libavutil/samplefmt.h"
#include "libavutil/avassert.h"
#include "libavutil/time.h"
#include "libavformat/avformat.h"
#include "libswscale/swscale.h"
#include "libavutil/opt.h"
#include "libavcodec/avfft.h"
#include "libswresample/swresample.h"
#include "libavfilter/buffersink.h"
#include "libavfilter/buffersrc.h"
#include "libavutil/avutil.h"
}

#include "commondef.h"

class ctFFmpeg : public QObject
{
    Q_OBJECT

public:
    ctFFmpeg();
    ~ctFFmpeg();

    int Init(QString sUrl, int nProtocolType = PROTOCOL_UDP);
    void DeInit();
    AVPacket getPacket();              // read one packet
    bool Decode(const AVPacket *pkt);  // decode one packet
    int getVideoFrame();
    int getAudioFrame(char* pOut);
    void Snapshot();

private:
    int InitVideo();
    int InitAudio();

signals:
    void sig_getImage(const QImage &image);

public:
    int m_nVideoIndex = -1;
    int m_nAudioIndex = -1;
    bool m_bSupportAudioPlay = false;
    AVFormatContext *m_pAVFmtCxt = nullptr;      // demuxer (stream) context

private:
    AVCodecContext* m_pVideoCodecCxt = nullptr;  // video decoder context
    AVCodecContext* m_pAudioCodecCxt = nullptr;  // audio decoder context
    AVFrame *m_pYuvFrame = nullptr;              // decoded video frame
    AVFrame *m_pPcmFrame = nullptr;              // decoded audio frame
    SwrContext *m_pAudioSwrContext = nullptr;    // audio resampling context
    SwsContext *m_pVideoSwsContext = nullptr;    // pixel format conversion, YUV -> RGB
    AVPacket m_packet;                           // one compressed packet (raw data)
    AVFrame* m_pFrameRGB = nullptr;              // converted RGB frame
    enum AVCodecID m_CodecId;
    uint8_t* m_pOutBuffer = nullptr;
    int m_nAudioSampleRate = 8000;               // source audio sample rate
    int m_nAudioPlaySampleRate = 44100;          // playback sample rate
    int m_nAudioPlayChannelNum = 1;              // playback channel count
    int64_t m_nLastReadPacktTime = 0;
    std::atomic<bool> m_bSnapshot{false};        // set by the GUI thread, consumed by the decode thread
    QString m_sSnapPath = "./snapshot/test.jpg";
    QMutex m_mutex;
};

#endif // CTFFMPEG_H
```

ctffmpeg.cpp

```cpp
#include "ctffmpeg.h"
#include <QImage>
#include "ctaudioplayer.h"
#include <QPixmap>
#include <QDate>
#include <QTime>

ctFFmpeg::ctFFmpeg()
{
}

ctFFmpeg::~ctFFmpeg()
{
}

int ctFFmpeg::Init(QString sUrl, int nProtocolType)
{
    DeInit();
    avformat_network_init();  // initialise network support (RTSP/UDP/TCP)

    // Demuxer/protocol options. They only take effect when passed to avformat_open_input().
    AVDictionary* pOptDict = nullptr;
    if(nProtocolType == PROTOCOL_UDP)
        av_dict_set(&pOptDict, "rtsp_transport", "udp", 0);
    else
        av_dict_set(&pOptDict, "rtsp_transport", "tcp", 0);
    // Socket I/O timeout in microseconds: FFmpeg 5.x names this RTSP option "timeout"
    // (older releases called it "stimeout")
    av_dict_set(&pOptDict, "timeout", "5000000", 0);
    av_dict_set(&pOptDict, "buffer_size", "8192000", 0);  // socket buffer size in bytes

    if(nullptr == m_pAVFmtCxt)
        m_pAVFmtCxt = avformat_alloc_context();
    if(nullptr == m_pYuvFrame)
        m_pYuvFrame = av_frame_alloc();
    if(nullptr == m_pPcmFrame)
        m_pPcmFrame = av_frame_alloc();

    // Interrupt callback: abort blocking I/O when no packet has been read for nTimeout seconds
    m_nLastReadPacktTime = av_gettime();
    m_pAVFmtCxt->interrupt_callback.opaque = this;
    m_pAVFmtCxt->interrupt_callback.callback = [](void* ctx) -> int
    {
        ctFFmpeg* pThis = static_cast<ctFFmpeg*>(ctx);
        const int nTimeout = 3;
        if (av_gettime() - pThis->m_nLastReadPacktTime > nTimeout * 1000 * 1000)
            return -1;  // non-zero: abort the current blocking call
        return 0;
    };

    // Open the stream with the options above
    int nRet = avformat_open_input(&m_pAVFmtCxt, sUrl.toStdString().c_str(), nullptr, &pOptDict);
    av_dict_free(&pOptDict);  // entries the demuxer did not consume are left in the dictionary
    if(nRet < 0)
    {
        MY_DEBUG << "avformat_open_input failed nRet:" << nRet;
        return nRet;
    }

    // Limit probing, then read the stream information
    m_pAVFmtCxt->probesize = 400 * 1024;
    m_pAVFmtCxt->max_analyze_duration = 2 * AV_TIME_BASE;
    nRet = avformat_find_stream_info(m_pAVFmtCxt, nullptr);
    if(nRet < 0)
    {
        MY_DEBUG << "avformat_find_stream_info failed nRet:" << nRet;
        return nRet;
    }

    // Print the stream information
    av_dump_format(m_pAVFmtCxt, 0, sUrl.toStdString().c_str(), 0);

    // Locate the video and audio streams
    for (unsigned int nIndex = 0; nIndex < m_pAVFmtCxt->nb_streams; nIndex++)
    {
        AVMediaType eType = m_pAVFmtCxt->streams[nIndex]->codecpar->codec_type;
        if (eType == AVMEDIA_TYPE_VIDEO)
            m_nVideoIndex = static_cast<int>(nIndex);
        else if (eType == AVMEDIA_TYPE_AUDIO)
            m_nAudioIndex = static_cast<int>(nIndex);
    }

    // Initialise video (required)
    if(InitVideo() < 0)
    {
        MY_DEBUG << "InitVideo() error";
        return -1;
    }

    // Initialise audio (optional: playback is simply disabled if it fails)
    if(m_nAudioIndex != -1)
    {
        m_bSupportAudioPlay = (InitAudio() >= 0);
        if(!m_bSupportAudioPlay)
            MY_DEBUG << "InitAudio() error";
    }
    return 0;
}
```
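The interrupt callback is FFmpeg's way of cancelling a blocking call: demuxer and protocol code poll it, and a non-zero return makes the pending operation fail (typically with AVERROR_EXIT). The 3-second timer is reset by getPacket() before every av_read_frame(); during Init() it is set only once, so avformat_open_input() and avformat_find_stream_info() share a single 3-second budget. Here is the decision logic with the clock injected so it can be tested without FFmpeg; `InterruptCB` only mimics the shape of AVIOInterruptCB, and all names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical mirror of AVIOInterruptCB's shape (callback + opaque pointer)
struct InterruptCB
{
    int (*callback)(void*);
    void* opaque;
};

// Hypothetical state inspected by the callback; nowUs stands in for av_gettime()
struct ReadWatchdog
{
    int64_t lastReadUs = 0;               // refreshed just before each blocking read
    int64_t nowUs = 0;                    // injected clock, in microseconds
    int64_t timeoutUs = 3 * 1000 * 1000;  // 3 seconds, as in Init()
};

// Returns non-zero ("abort") once more than timeoutUs has passed since the last read
int watchdogCallback(void* opaque)
{
    const ReadWatchdog* w = static_cast<const ReadWatchdog*>(opaque);
    return (w->nowUs - w->lastReadUs > w->timeoutUs) ? 1 : 0;
}
```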

```cpp
void ctFFmpeg::DeInit()
{
    QMutexLocker locker(&m_mutex);

    // swr_free(), av_frame_free(), av_freep(), avcodec_free_context() and avformat_close_input()
    // accept a null pointer and reset it to nullptr, so DeInit() is safe to call repeatedly
    if (nullptr != m_pVideoSwsContext)
    {
        sws_freeContext(m_pVideoSwsContext);
        m_pVideoSwsContext = nullptr;
    }
    swr_free(&m_pAudioSwrContext);
    av_frame_free(&m_pYuvFrame);
    av_frame_free(&m_pPcmFrame);
    av_frame_free(&m_pFrameRGB);               // does not own m_pOutBuffer, which is freed next
    av_freep(&m_pOutBuffer);
    avcodec_free_context(&m_pVideoCodecCxt);   // also closes the decoder
    avcodec_free_context(&m_pAudioCodecCxt);
    avformat_close_input(&m_pAVFmtCxt);        // closes the input and frees the context

    m_nVideoIndex = -1;
    m_nAudioIndex = -1;
    m_bSupportAudioPlay = false;
    MY_DEBUG << "DeInit end";
}
```

```cpp
AVPacket ctFFmpeg::getPacket()
{
    AVPacket pkt;
    memset(&pkt, 0, sizeof(AVPacket));  // an empty packet (size 0) means "nothing read"
    if(!m_pAVFmtCxt)
        return pkt;

    // Refresh the timestamp checked by the interrupt callback before the blocking read
    m_nLastReadPacktTime = av_gettime();
    int nErr = av_read_frame(m_pAVFmtCxt, &pkt);
    if(nErr < 0)
    {
        // Error text, available for logging (AVERROR_EOF at the end of a file)
        char errorbuff[1024];
        av_strerror(nErr, errorbuff, sizeof(errorbuff));
    }
    return pkt;
}

bool ctFFmpeg::Decode(const AVPacket *pkt)
{
    QMutexLocker locker(&m_mutex);
    if(!m_pAVFmtCxt)
        return false;

    AVCodecContext* pCodecCxt = nullptr;
    AVFrame *pFrame = nullptr;
    if (pkt->stream_index == m_nAudioIndex)
    {
        pFrame = m_pPcmFrame;
        pCodecCxt = m_pAudioCodecCxt;
    }
    else if (pkt->stream_index == m_nVideoIndex)
    {
        pFrame = m_pYuvFrame;
        pCodecCxt = m_pVideoCodecCxt;
    }
    if (!pCodecCxt || !pFrame)
        return false;  // stream without an opened decoder

    // Send the compressed packet to the decoder
    int nRet = avcodec_send_packet(pCodecCxt, pkt);
    if (nRet < 0)
    {
        MY_DEBUG << "avcodec_send_packet error---" << nRet;
        return false;
    }

    // Fetch one decoded frame. AVERROR(EAGAIN) only means the decoder needs more input
    // (common for the first video packets), so it is not reported as an error.
    nRet = avcodec_receive_frame(pCodecCxt, pFrame);
    if (nRet < 0)
    {
        if (nRet != AVERROR(EAGAIN))
            MY_DEBUG << "avcodec_receive_frame error---" << nRet;
        return false;
    }
    return true;
}
```

```cpp
int ctFFmpeg::getVideoFrame()
{
    QMutexLocker locker(&m_mutex);
    if(!m_pVideoSwsContext || !m_pFrameRGB || !m_pYuvFrame)
        return -1;

    // Convert the decoded YUV frame to BGRA
    int nRet = sws_scale(m_pVideoSwsContext, (const uint8_t* const*)m_pYuvFrame->data,
                         m_pYuvFrame->linesize, 0, m_pVideoCodecCxt->height,
                         m_pFrameRGB->data, m_pFrameRGB->linesize);
    if(nRet <= 0)
        return -1;

    // BGRA bytes match QImage::Format_ARGB32 on little-endian machines. copy() detaches the
    // image from m_pOutBuffer, which the next frame overwrites while the GUI may still be painting.
    QImage image = QImage(m_pFrameRGB->data[0], m_pVideoCodecCxt->width,
                          m_pVideoCodecCxt->height, m_pFrameRGB->linesize[0],
                          QImage::Format_ARGB32).copy();

    // Snapshot. QPixmap must not be used outside the GUI thread, so the QImage is saved directly.
    if(m_bSnapshot)
    {
        m_sSnapPath = QString("./snapshot/%1-%2.jpg")
                .arg(QDate::currentDate().toString("yyyy-MM-dd"))
                .arg(QTime::currentTime().toString("hhmmss"));
        MY_DEBUG << "Snapshot... m_sSnapPath:" << m_sSnapPath;
        image.save(m_sSnapPath, "jpg");
        m_bSnapshot = false;
    }

    // Hand the frame to the UI thread
    emit sig_getImage(image);
    return 0;
}
```
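InitVideo() sizes the BGRA buffer with av_image_get_buffer_size(AV_PIX_FMT_BGRA, w, h, 1). For a single-plane, 4-byte-per-pixel format this is, as far as we understand it, the row size (4 * width rounded up to the alignment) times the height. With alignment 1 every row is exactly 4 * width bytes, which is the stride the QImage in getVideoFrame() is built with. A small sketch of that arithmetic, using hypothetical helper names:

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper: bytes per row of a packed 4-byte-per-pixel image (e.g. BGRA),
// rounded up to `align` (a power of two; 1 means no padding)
std::size_t packedLineSize(int width, int align)
{
    const std::size_t raw = static_cast<std::size_t>(width) * 4;
    const std::size_t a = static_cast<std::size_t>(align);
    return (raw + a - 1) / a * a;
}

// Hypothetical helper: total buffer size for `height` such rows
std::size_t packedBufferSize(int width, int height, int align)
{
    return packedLineSize(width, align) * static_cast<std::size_t>(height);
}
```

A 1920x1080 BGRA frame with alignment 1 therefore needs 1920 * 4 * 1080 = 8294400 bytes; with a 32-byte alignment, a 1918-pixel row would be padded from 7672 to 7680 bytes.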

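```cpp
int ctFFmpeg::getAudioFrame(char *pOut)
{
    QMutexLocker locker(&m_mutex);
    if(!m_pAudioSwrContext || !m_pAudioCodecCxt || !m_pPcmFrame)
        return -1;

    uint8_t *pData[1] = { reinterpret_cast<uint8_t *>(pOut) };

    // Upper bound on the output sample count. The resampler keeps the sample rate
    // (see InitAudio()), so this is the input count plus anything buffered inside swr.
    // pOut must hold at least nDstNbSamples * channels * 2 bytes.
    int nDstNbSamples = swr_get_out_samples(m_pAudioSwrContext, m_pPcmFrame->nb_samples);

    // Convert to interleaved signed 16-bit
    int nLen = swr_convert(m_pAudioSwrContext, pData, nDstNbSamples,
                           (const uint8_t **)m_pPcmFrame->data, m_pPcmFrame->nb_samples);
    if(nLen <= 0)
    {
        MY_DEBUG << "swr_convert error";
        return -1;
    }

    // Size in bytes of the nLen samples actually produced
    return av_samples_get_buffer_size(nullptr, m_pAudioCodecCxt->channels, nLen,
                                      AV_SAMPLE_FMT_S16, 1);
}

void ctFFmpeg::Snapshot()
{
    m_bSnapshot = true;  // the next decoded video frame is saved by getVideoFrame()
}
```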
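Why the output sample count matters: the original code asked swr_convert() for room computed as if converting to 44100 Hz, although the resampler is configured with equal input and output rates. For a 1024-sample frame from an 8 kHz mono stream, that claims 1024 * 44100 / 8000 = 5644 samples, i.e. 11288 bytes of S16, while run() passes a 9216-byte buffer (MAX_AUDIO_OUT_SIZE = 8 * 1152). swr_convert() only writes what it actually produces, so this did not overflow in practice, but the declared capacity was wrong. The arithmetic as a plain C++ sketch; `rescaleTowardZero()` mimics av_rescale_rnd(..., AV_ROUND_ZERO) only for small non-negative values, and both helpers are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: a * b / c rounded toward zero. FFmpeg's av_rescale_rnd() additionally
// guards against overflow and supports other rounding modes; this sketch does not.
int64_t rescaleTowardZero(int64_t a, int64_t b, int64_t c)
{
    return a * b / c;
}

// Hypothetical helper: bytes for interleaved signed 16-bit PCM (AV_SAMPLE_FMT_S16, no padding)
int s16BufferBytes(int channels, int samplesPerChannel)
{
    return channels * samplesPerChannel * 2;
}
```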

```cpp
int ctFFmpeg::InitVideo()
{
    if(m_nVideoIndex == -1)
    {
        MY_DEBUG << "m_nVideoIndex == -1 error";
        return -1;
    }

    // Find the video decoder
    AVCodecParameters* pPar = m_pAVFmtCxt->streams[m_nVideoIndex]->codecpar;
    const AVCodec *pAVCodec = avcodec_find_decoder(pPar->codec_id);
    if(!pAVCodec)
    {
        MY_DEBUG << "video decoder not found";
        return -1;
    }

    // Create the decoder context and copy the stream parameters into it
    m_CodecId = pAVCodec->id;
    if(!m_pVideoCodecCxt)
        m_pVideoCodecCxt = avcodec_alloc_context3(pAVCodec);
    if(nullptr == m_pVideoCodecCxt)
    {
        MY_DEBUG << "avcodec_alloc_context3 error m_pVideoCodecCxt=nullptr";
        return -1;
    }
    if(avcodec_parameters_to_context(m_pVideoCodecCxt, pPar) < 0)
    {
        MY_DEBUG << "avcodec_parameters_to_context video error";
        return -1;
    }
    if (m_pVideoCodecCxt->width == 0 || m_pVideoCodecCxt->height == 0)
    {
        MY_DEBUG << "m_pVideoCodecCxt->width=0 or m_pVideoCodecCxt->height=0 error";
        return -1;
    }

    // Open the video decoder
    if(avcodec_open2(m_pVideoCodecCxt, pAVCodec, nullptr) < 0)
    {
        MY_DEBUG << "avcodec_open2 video error";
        return -1;
    }

    // Allocate the BGRA output buffer (rows packed, alignment 1) and a frame pointing into it
    int nWidth = m_pVideoCodecCxt->width;
    int nHeight = m_pVideoCodecCxt->height;
    m_pOutBuffer = (uint8_t*)av_malloc(av_image_get_buffer_size(AV_PIX_FMT_BGRA, nWidth, nHeight, 1));
    if(nullptr == m_pOutBuffer)
    {
        MY_DEBUG << "nullptr == m_pOutBuffer error";
        return -1;
    }
    if(nullptr == m_pFrameRGB)
        m_pFrameRGB = av_frame_alloc();
    if(nullptr == m_pFrameRGB)
    {
        MY_DEBUG << "nullptr == m_pFrameRGB error";
        return -1;
    }
    av_image_fill_arrays(m_pFrameRGB->data, m_pFrameRGB->linesize, m_pOutBuffer,
                         AV_PIX_FMT_BGRA, nWidth, nHeight, 1);

    // Conversion context: decoder pixel format (typically a YUV format) -> BGRA, same size
    m_pVideoSwsContext = sws_getContext(nWidth, nHeight, m_pVideoCodecCxt->pix_fmt,
                                        nWidth, nHeight, AV_PIX_FMT_BGRA,
                                        SWS_FAST_BILINEAR, nullptr, nullptr, nullptr);
    if(nullptr == m_pVideoSwsContext)
    {
        MY_DEBUG << "nullptr == m_pVideoSwsContext error";
        return -1;
    }
    return 0;
}
```

```cpp
int ctFFmpeg::InitAudio()
{
    if(m_nAudioIndex == -1)
    {
        MY_DEBUG << "m_nAudioIndex == -1";
        return -1;
    }

    // Find the audio decoder
    AVCodecParameters* pPar = m_pAVFmtCxt->streams[m_nAudioIndex]->codecpar;
    const AVCodec *pAVCodec = avcodec_find_decoder(pPar->codec_id);
    if(!pAVCodec)
    {
        MY_DEBUG << "audio decoder not found";
        return -1;
    }

    // Create the decoder context and copy the stream parameters into it
    if (!m_pAudioCodecCxt)
        m_pAudioCodecCxt = avcodec_alloc_context3(pAVCodec);
    if(nullptr == m_pAudioCodecCxt)
    {
        MY_DEBUG << "avcodec_alloc_context3 error m_pAudioCodecCxt=nullptr";
        return -1;
    }
    if(avcodec_parameters_to_context(m_pAudioCodecCxt, pPar) < 0)
    {
        MY_DEBUG << "avcodec_parameters_to_context audio error";
        return -1;
    }

    // Open the audio decoder (on failure the context is released later by DeInit())
    if(avcodec_open2(m_pAudioCodecCxt, pAVCodec, nullptr) < 0)
    {
        MY_DEBUG << "avcodec_open2 error m_pAudioCodecCxt";
        return -1;
    }

    // Resampler: keep the channel count and sample rate, convert only the sample format
    // to interleaved signed 16-bit (decoders such as AAC output planar float)
    if (nullptr == m_pAudioSwrContext)
    {
        int64_t nLayout = av_get_default_channel_layout(m_pAudioCodecCxt->channels);
        MY_DEBUG << "audio channels:" << m_pAudioCodecCxt->channels << "layout:" << nLayout;
        m_pAudioSwrContext = swr_alloc_set_opts(nullptr,
                nLayout, AV_SAMPLE_FMT_S16, m_pAudioCodecCxt->sample_rate,             // output
                nLayout, m_pAudioCodecCxt->sample_fmt, m_pAudioCodecCxt->sample_rate,  // input
                0, nullptr);
        if(nullptr == m_pAudioSwrContext || swr_init(m_pAudioSwrContext) < 0)
        {
            MY_DEBUG << "swr_init error";
            return -1;
        }
    }

    // Audio output device. The resampler always delivers 16-bit samples, so the device is
    // opened with a 16-bit sample size whatever the decoder's own sample_fmt is.
    m_nAudioSampleRate = m_pAudioCodecCxt->sample_rate;
    ctAudioPlayer::getInstance().m_nSampleRate = m_nAudioSampleRate;            // sample rate
    ctAudioPlayer::getInstance().m_nChannelCount = m_pAudioCodecCxt->channels;  // channel count
    ctAudioPlayer::getInstance().m_nSampleSize = 16;                            // bits per sample
    if(!ctAudioPlayer::getInstance().Init())
        return -1;
    return 0;
}
```
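The resampler in InitAudio() mainly converts the sample format. Many decoders (FFmpeg's AAC decoder, for example) output planar float (AV_SAMPLE_FMT_FLTP: one array per channel, values in [-1, 1]), while QAudioOutput here plays interleaved signed 16-bit PCM. A simplified sketch of that conversion follows; swresample's own scaling, dithering and SIMD paths differ, and `planarFloatToS16()` is a hypothetical helper.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: interleave planar float channels (range [-1, 1]) into signed 16-bit
// PCM in the order L0 R0 L1 R1 ...; values outside [-1, 1] are clipped.
// All planes are assumed to have the same length.
std::vector<int16_t> planarFloatToS16(const std::vector<std::vector<float>>& planes)
{
    std::vector<int16_t> out;
    if (planes.empty())
        return out;
    const std::size_t samples = planes[0].size();
    out.reserve(samples * planes.size());
    for (std::size_t i = 0; i < samples; ++i)
        for (const auto& ch : planes)
        {
            float v = ch[i];
            if (v > 1.0f) v = 1.0f;
            if (v < -1.0f) v = -1.0f;
            out.push_back(static_cast<int16_t>(std::lrint(v * 32767.0f)));
        }
    return out;
}
```

This is also why the audio device must be opened as 16-bit signed: the bytes written to it are always S16, whatever the decoder's sample_fmt was.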

ctopenglwidget.h

```cpp
#ifndef CTOPENGLWIDGET_H
#define CTOPENGLWIDGET_H

#include <QOpenGLWidget>
#include <QImage>

class ctOpenglWidget : public QOpenGLWidget
{
    Q_OBJECT

public:
    ctOpenglWidget(QWidget *parent = nullptr);
    ~ctOpenglWidget();

protected:
    void paintEvent(QPaintEvent *e);

private slots:
    void slot_showImage(const QImage& image);

private:
    QImage m_image;
};

#endif // CTOPENGLWIDGET_H
```

ctopenglwidget.cpp

```cpp
#include "ctopenglwidget.h"
#include <QPainter>
#include "commondef.h"

ctOpenglWidget::ctOpenglWidget(QWidget *parent) : QOpenGLWidget(parent)
{
}

ctOpenglWidget::~ctOpenglWidget()
{
}

void ctOpenglWidget::paintEvent(QPaintEvent *e)
{
    Q_UNUSED(e)
    QPainter painter(this);
    painter.fillRect(rect(), Qt::black);  // clear the area the frame does not cover
    // Draw the FFmpeg-decoded frame centred in the widget
    painter.drawImage(QPoint((width() - m_image.width()) / 2,
                             (height() - m_image.height()) / 2), m_image);
}

void ctOpenglWidget::slot_showImage(const QImage &image)
{
    // Fit the whole frame into the widget while keeping its aspect ratio
    m_image = image.scaled(size(), Qt::KeepAspectRatio, Qt::SmoothTransformation);
    update();
}
```
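Scaling a landscape frame to the widget width only works when the widget is not relatively wider than the frame: a 1920x1080 frame in a 1000x400 widget would become about 562 pixels tall and be cut off at the bottom. Qt::KeepAspectRatio instead picks whichever side is the limiting one. The same computation as a standalone sketch (`Rect` and `fitCentered()` are hypothetical; Qt's own rounding may differ by a pixel):

```cpp
#include <cassert>

// Hypothetical rectangle type: position and size in pixels
struct Rect { int x, y, w, h; };

// Hypothetical helper: largest rectangle with the source aspect ratio that fits in the
// target, centred in it (KeepAspectRatio scaling plus centring, integer arithmetic)
Rect fitCentered(int srcW, int srcH, int dstW, int dstH)
{
    if (srcW <= 0 || srcH <= 0)
        return Rect{0, 0, 0, 0};
    Rect r{0, 0, dstW, dstH};
    // Compare srcW/srcH with dstW/dstH without floating point
    if (static_cast<long long>(srcW) * dstH > static_cast<long long>(dstW) * srcH)
        r.h = static_cast<int>(static_cast<long long>(dstW) * srcH / srcW);   // width-limited
    else
        r.w = static_cast<int>(static_cast<long long>(dstH) * srcW / srcH);   // height-limited
    r.x = (dstW - r.w) / 2;
    r.y = (dstH - r.h) / 2;
    return r;
}
```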

ctaudioplayer.h

```cpp
#ifndef CTAUDIOPLAYER_H
#define CTAUDIOPLAYER_H

#include <QObject>
#include <QAudioDeviceInfo>
#include <QAudioFormat>
#include <QAudioOutput>
#include <QMutex>
#include "commondef.h"

// Process-wide PCM player (singleton) built on QAudioOutput in push mode
class ctAudioPlayer
{
public:
    ctAudioPlayer();
    ~ctAudioPlayer();

    static ctAudioPlayer& getInstance();

    bool Init();
    void DeInit();
    void isPlay(bool bPlay);                       // true: resume, false: suspend
    void Write(const char *pData, int nDatasize);  // queue PCM bytes for playback
    int getFreeSize();                             // free bytes in the output buffer

public:
    int m_nSampleRate = 8000;   // sample rate (Hz)
    int m_nSampleSize = 16;     // bits per sample
    int m_nChannelCount = 1;    // channel count

private:
    QAudioDeviceInfo m_audio_device;
    QAudioOutput* m_pAudioOut = nullptr;
    QIODevice* m_pIODevice = nullptr;
    QMutex m_mutex;
};

#endif // CTAUDIOPLAYER_H
```

ctaudioplayer.cpp

```cpp
#include "ctaudioplayer.h"

ctAudioPlayer::ctAudioPlayer()
{
}

ctAudioPlayer::~ctAudioPlayer()
{
}

ctAudioPlayer &ctAudioPlayer::getInstance()
{
    static ctAudioPlayer s_obj;  // created on first use; initialisation is thread-safe since C++11
    return s_obj;
}

bool ctAudioPlayer::Init()
{
    DeInit();
    QMutexLocker locker(&m_mutex);  // unlocked on every return path

    MY_DEBUG << "m_nSampleRate:" << m_nSampleRate;
    MY_DEBUG << "m_nSampleSize:" << m_nSampleSize;
    MY_DEBUG << "m_nChannelCount:" << m_nChannelCount;

    m_audio_device = QAudioDeviceInfo::defaultOutputDevice();
    MY_DEBUG << "m_audio_device.deviceName():" << m_audio_device.deviceName();
    if(m_audio_device.deviceName().isEmpty())
        return false;

    QAudioFormat format;
    format.setSampleRate(m_nSampleRate);
    format.setSampleSize(m_nSampleSize);
    format.setChannelCount(m_nChannelCount);
    format.setCodec("audio/pcm");
    format.setByteOrder(QAudioFormat::LittleEndian);
    // The samples arrive as FFmpeg AV_SAMPLE_FMT_S16, i.e. signed 16-bit integers
    format.setSampleType(QAudioFormat::SignedInt);
    if(!m_audio_device.isFormatSupported(format))
    {
        MY_DEBUG << "QAudioDeviceInfo format No Supported.";
        return false;
    }

    // DeInit() above has already released any previous output
    m_pAudioOut = new QAudioOutput(m_audio_device, format);
    m_pIODevice = m_pAudioOut->start();  // push mode: PCM is written into this QIODevice
    return true;
}

void ctAudioPlayer::DeInit()
{
    QMutexLocker locker(&m_mutex);
    if(m_pAudioOut)
    {
        m_pAudioOut->stop();
        delete m_pAudioOut;
        m_pAudioOut = nullptr;
        m_pIODevice = nullptr;
    }
}

void ctAudioPlayer::isPlay(bool bPlay)
{
    QMutexLocker locker(&m_mutex);
    if(!m_pAudioOut)
        return;
    if(bPlay)
        m_pAudioOut->resume();   // resume playback
    else
        m_pAudioOut->suspend();  // pause playback
}

void ctAudioPlayer::Write(const char *pData, int nDatasize)
{
    QMutexLocker locker(&m_mutex);
    if(m_pIODevice)
        m_pIODevice->write(pData, nDatasize);  // append the decoded PCM to the output buffer
}

int ctAudioPlayer::getFreeSize()
{
    QMutexLocker locker(&m_mutex);
    if(!m_pAudioOut)
        return 0;
    return m_pAudioOut->bytesFree();  // free space in the output buffer, in bytes
}
```
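getFreeSize() reports QAudioOutput::bytesFree() in bytes, so the threshold m_nMinAudioPlayerSize (640 bytes) used in run() represents different amounts of time depending on the format: at 8 kHz mono 16-bit (16000 bytes/s) it is 40 ms, at 44.1 kHz stereo 16-bit (176400 bytes/s) only about 3.6 ms. A sketch of the conversion, with hypothetical helper names:

```cpp
#include <cassert>

// Hypothetical helper: bytes per second of interleaved PCM
int pcmBytesPerSecond(int sampleRate, int channels, int sampleSizeBits)
{
    return sampleRate * channels * (sampleSizeBits / 8);
}

// Hypothetical helper: whole milliseconds of audio held by `bytes` of PCM in that format
int pcmBytesToMs(int bytes, int sampleRate, int channels, int sampleSizeBits)
{
    return static_cast<int>(1000LL * bytes / pcmBytesPerSecond(sampleRate, channels, sampleSizeBits));
}
```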

Download link: https://download.csdn.net/download/linyibin_123/87435635

https://blog.csdn.net/qq871580236/article/details/120364013
