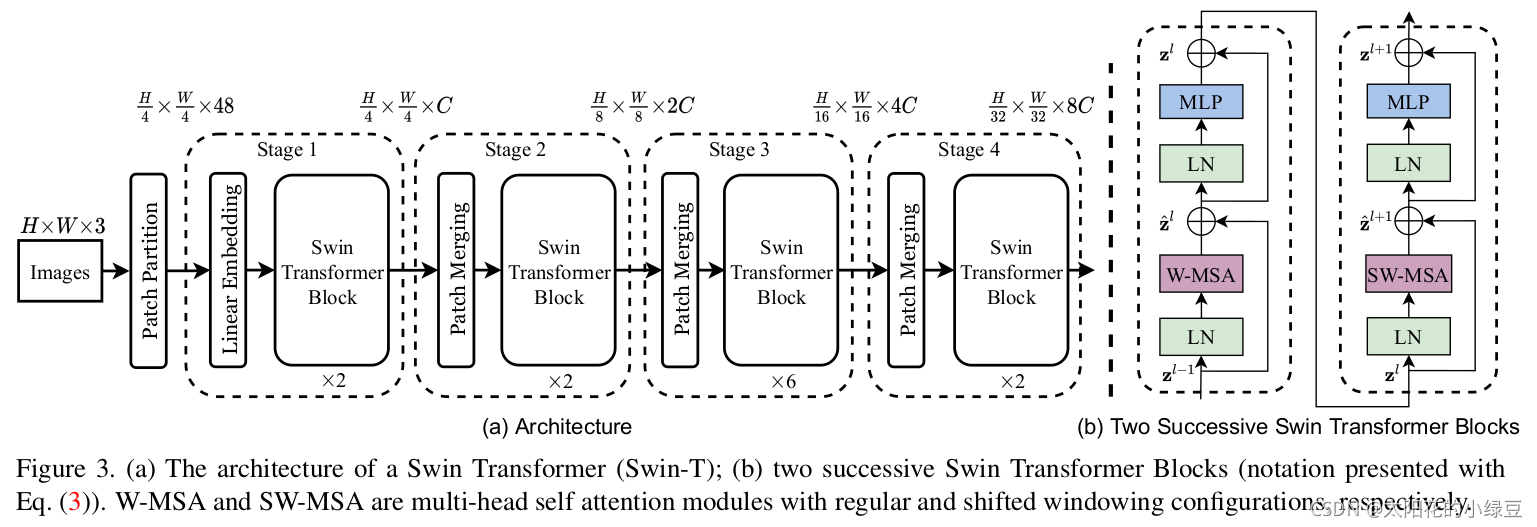

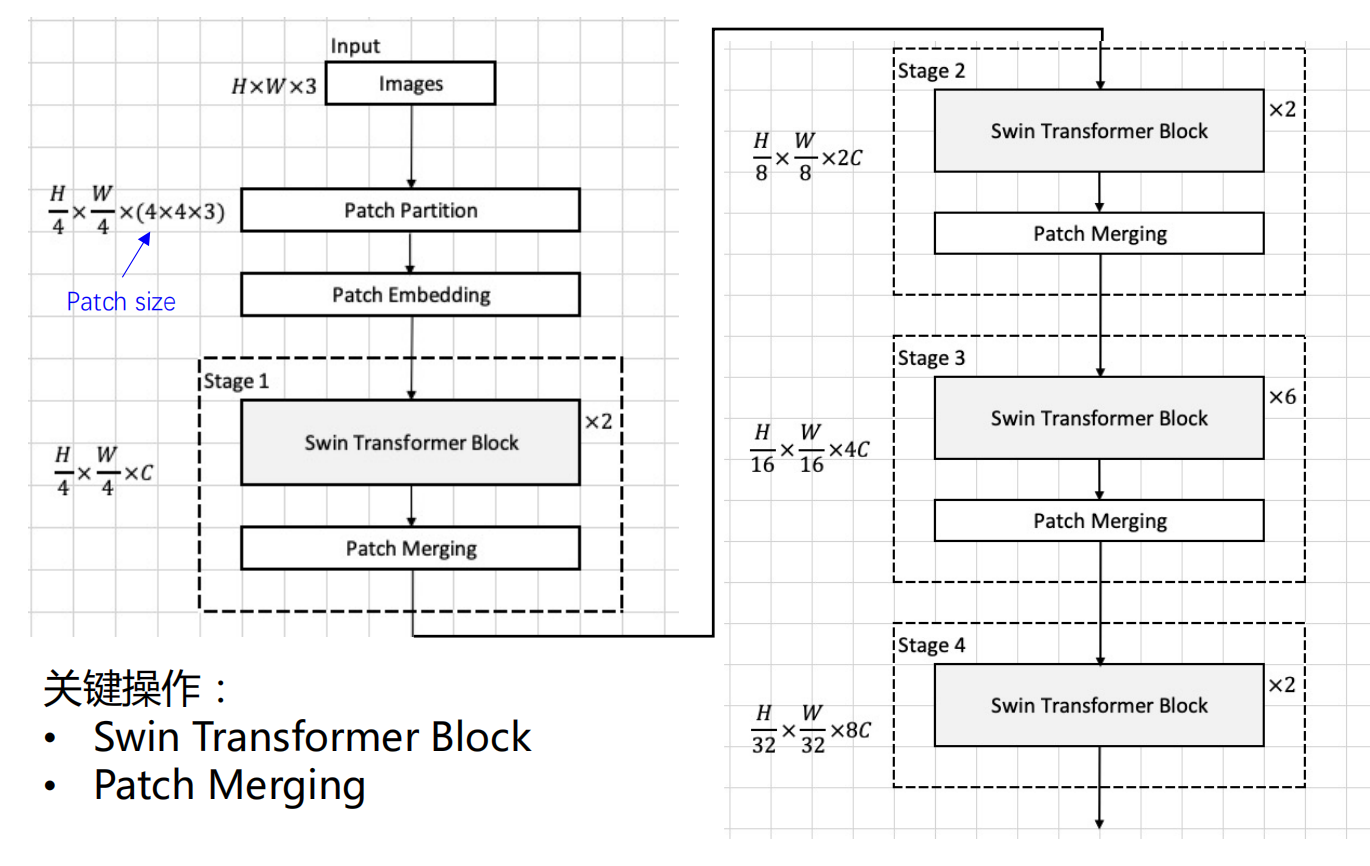

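In practice, the structure we reproduce in code is the one shown in the figure. The sections that follow implement it piece by piece according to that figure.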

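First, the image is fed into the Patch Partition module, which splits it into patches: every 4x4 block of adjacent pixels forms one patch, and each patch is flattened along the channel dimension. For an RGB input, each patch has 4x4 = 16 pixels and each pixel has three values (R, G, B), so a flattened patch holds 16x3 = 48 values. Patch Partition therefore changes the image shape from [H, W, 3] to [H/4, W/4, 48]. The Linear Embedding layer then applies a linear transformation to the channel data at each position, mapping 48 to C, so the shape changes from [H/4, W/4, 48] to [H/4, W/4, C]. In the source code, Patch Partition and Linear Embedding are implemented together as a single convolution layer, with exactly the same structure as the Embedding layer discussed earlier for Vision Transformer.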

import paddle
import paddle.nn as nn


class PatchEmbedding(nn.Layer):
    def __init__(self, patch_size=4, embed_dim=96):
        super().__init__()
        # A conv with kernel_size == stride == patch_size does Patch Partition
        # and Linear Embedding in one step.
        self.patch_embed = nn.Conv2D(3, out_channels=embed_dim, kernel_size=patch_size, stride=patch_size)
        self.norm = nn.LayerNorm(embed_dim)

    def forward(self, x):
        x = self.patch_embed(x)  # [B, embed_dim, h, w]
        x = x.flatten(2)  # [B, embed_dim, h*w]
        x = x.transpose([0, 2, 1])  # [B, h*w, embed_dim]
        x = self.norm(x)
        return x
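To make the shape bookkeeping concrete, here is a minimal NumPy sketch of the two steps done separately (partition, then a linear map). It is only an illustration of the idea, not the Paddle code above: the function name `patch_partition`, the random `weight` matrix, and the choice C = 96 are assumptions made for this example.

```python
import numpy as np


def patch_partition(img, patch=4):
    """Split an [H, W, C] image into non-overlapping patch x patch blocks and
    flatten each block, giving [H/patch, W/patch, patch*patch*C]."""
    H, W, C = img.shape
    x = img.reshape(H // patch, patch, W // patch, patch, C)
    x = x.transpose(0, 2, 1, 3, 4)  # [H/p, W/p, p, p, C]
    return x.reshape(H // patch, W // patch, patch * patch * C)


rng = np.random.default_rng(0)
img = rng.standard_normal((8, 12, 3))    # a small RGB "image"
patches = patch_partition(img)           # [8/4, 12/4, 48]
weight = rng.standard_normal((48, 96))   # hypothetical Linear Embedding weight, C = 96
tokens = patches @ weight                # [8/4, 12/4, 96]
print(patches.shape, tokens.shape)
```

A strided convolution with kernel size and stride both equal to the patch size computes the same kind of per-patch linear map, which is why a single `Conv2D` can replace both steps.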

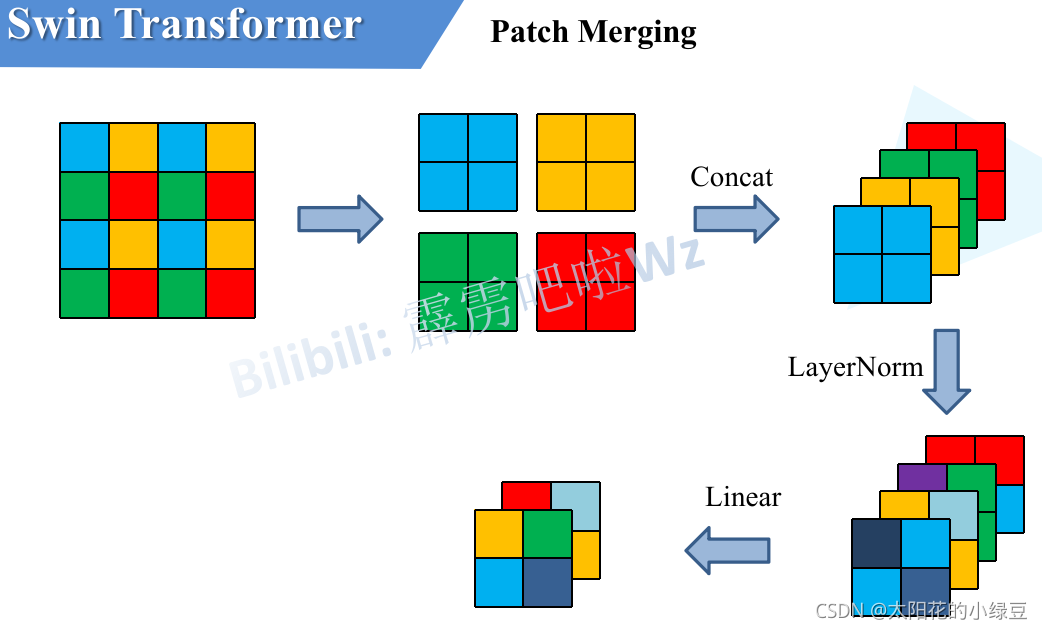

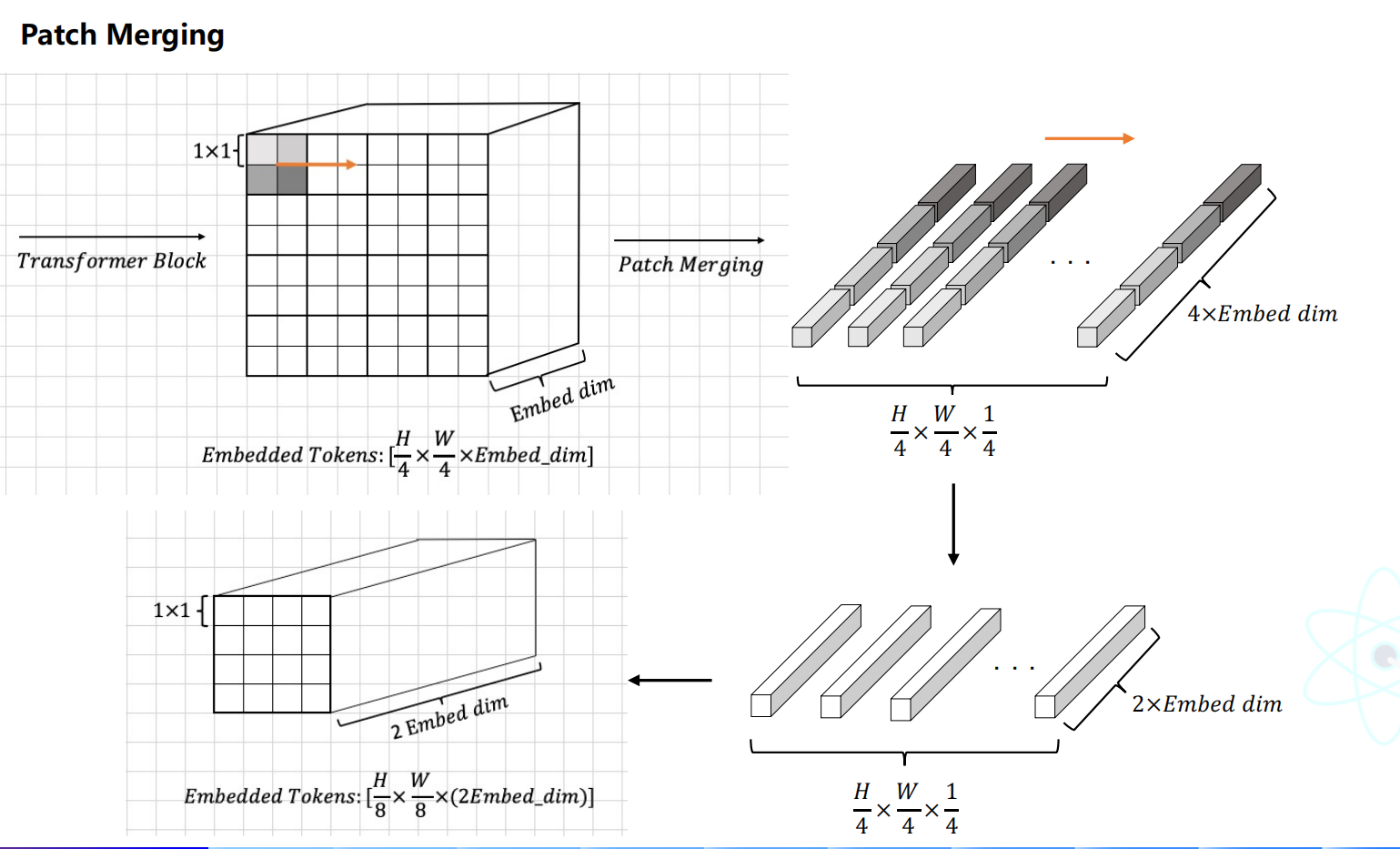

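As mentioned earlier, every stage except Stage 1 starts with a Patch Merging layer that downsamples the feature map. As the figure shows, suppose the input to Patch Merging is a 4x4 single-channel feature map. Patch Merging groups each 2x2 block of adjacent pixels into a patch, then gathers the pixels at the same position (same color) in every patch, which gives four feature maps. These four feature maps are concatenated along the depth dimension and passed through a LayerNorm layer. Finally, a fully connected layer applies a linear transformation along the depth dimension, reducing the depth from 4C to 2C, where C is the input depth. This simple example shows that after a Patch Merging layer the height and width of the feature map are halved and its depth is doubled.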

class PatchMerging(nn.Layer):
    def __init__(self, resolution, dim):
        super().__init__()
        self.resolution = resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim)
        self.norm = nn.LayerNorm(4 * dim)

    def forward(self, x):
        h, w = self.resolution
        b, _, c = x.shape
        x = x.reshape([b, h, w, c])
        # Pick the four pixels of every 2x2 patch by their position in the patch.
        x0 = x[:, 0::2, 0::2, :]  # top-left
        x1 = x[:, 0::2, 1::2, :]  # top-right
        x2 = x[:, 1::2, 0::2, :]  # bottom-left
        x3 = x[:, 1::2, 1::2, :]  # bottom-right
        x = paddle.concat([x0, x1, x2, x3], axis=-1)  # [b, h/2, w/2, 4c]
        x = x.reshape([b, -1, 4 * c])
        x = self.norm(x)
        x = self.reduction(x)  # [b, h/2 * w/2, 2c]
        return x

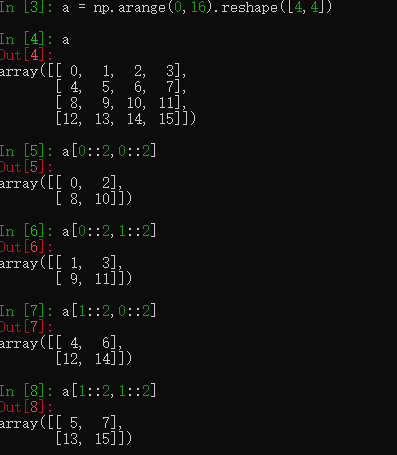

P.S. A demonstration of what slices such as x[:, 0::2, 0::2, :] do:

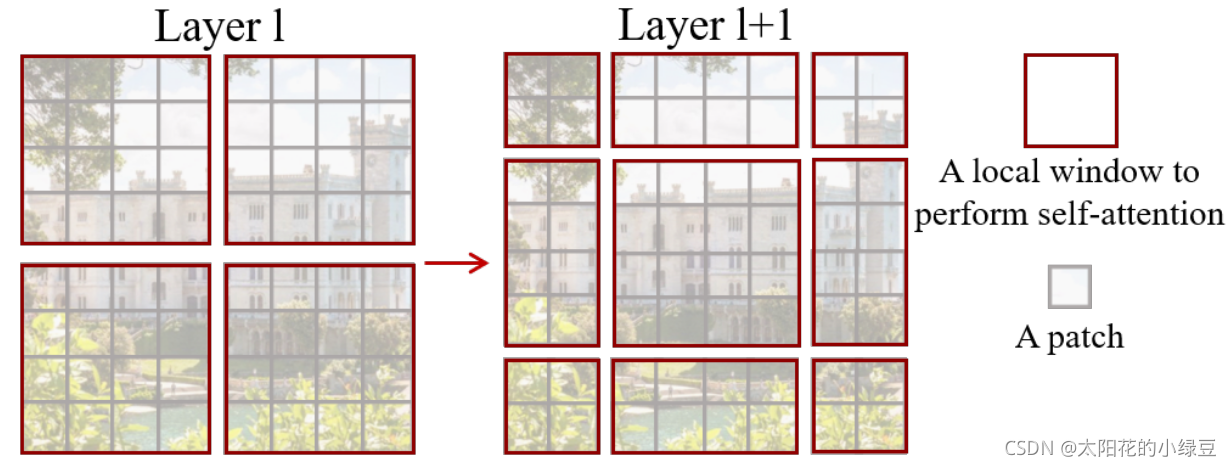
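The same slicing can be checked on a tiny array. The sketch below uses NumPy instead of Paddle (the slicing syntax is the same) and a 4x4 single-channel input filled with 0..15, both chosen only for illustration.

```python
import numpy as np

# [B, H, W, C] = [1, 4, 4, 1], values 0..15 laid out row by row
x = np.arange(16).reshape(1, 4, 4, 1)

x0 = x[:, 0::2, 0::2, :]  # even rows, even columns
x1 = x[:, 0::2, 1::2, :]  # even rows, odd columns
x2 = x[:, 1::2, 0::2, :]  # odd rows, even columns
x3 = x[:, 1::2, 1::2, :]  # odd rows, odd columns

merged = np.concatenate([x0, x1, x2, x3], axis=-1)  # [1, 2, 2, 4]

print(x0[0, :, :, 0])    # the top-left pixel of every 2x2 patch
print(merged.shape)
print(merged[0, 0, 0])   # the four pixels of the first 2x2 patch
```

Each output position now holds the four pixels of one 2x2 patch along the last axis, so the spatial size is halved and the depth is multiplied by four before the linear layer reduces it.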

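The Windows Multi-head Self-Attention (W-MSA) module is introduced to reduce computation. With W-MSA, self-attention is computed only inside each window, so no information can pass between windows. To solve this problem, the authors introduced the Shifted Windows Multi-Head Self-Attention (SW-MSA) module.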
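To get a feel for the saving, the complexity formulas given in the Swin Transformer paper, 4hwC² + 2(hw)²C for global MSA and 4hwC² + 2M²hwC for W-MSA, can be evaluated directly. The sizes below (a 56x56 feature map, C = 96, window size M = 7) correspond to the first stage with this post's default settings; the function names are made up for this sketch.

```python
def msa_flops(h, w, C):
    """Cost of global multi-head self-attention on an h x w feature map."""
    return 4 * h * w * C ** 2 + 2 * (h * w) ** 2 * C


def w_msa_flops(h, w, C, M):
    """Cost of window attention with M x M windows on the same feature map."""
    return 4 * h * w * C ** 2 + 2 * M ** 2 * h * w * C


global_cost = msa_flops(56, 56, 96)
window_cost = w_msa_flops(56, 56, 96, 7)
print(global_cost, window_cost, round(global_cost / window_cost, 1))
```

The quadratic term in hw is replaced by a term linear in hw, which is where the reduction comes from.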

# Split the feature map into windows; attention is then computed inside each window.
def windows_partition(x, window_size):
    B, H, W, C = x.shape
    x = x.reshape([B, H // window_size, window_size, W // window_size, window_size, C])
    # The results of transpose/reshape must be assigned back, otherwise x is unchanged.
    x = x.transpose([0, 1, 3, 2, 4, 5])
    # [B, H//window_size, W//window_size, window_size, window_size, C]
    x = x.reshape([-1, window_size, window_size, C])
    # [B * H//window_size * W//window_size, window_size, window_size, C]
    return x


# Merge the windows back into a single feature map.
def window_reverse(window, window_size, H, W):
    B = window.shape[0] // ((H // window_size) * (W // window_size))
    x = window.reshape([B, H // window_size, W // window_size, window_size, window_size, -1])
    x = x.transpose([0, 1, 3, 2, 4, 5])
    x = x.reshape([B, H, W, -1])
    return x
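A quick way to convince yourself that the two functions are inverses is a round-trip check. The sketch below re-implements both in NumPy (same reshape/transpose logic, not the Paddle code itself) and runs them on a small made-up tensor.

```python
import numpy as np


def windows_partition(x, window_size):
    B, H, W, C = x.shape
    x = x.reshape(B, H // window_size, window_size, W // window_size, window_size, C)
    x = x.transpose(0, 1, 3, 2, 4, 5)  # bring the two window-index axes together
    return x.reshape(-1, window_size, window_size, C)


def window_reverse(windows, window_size, H, W):
    B = windows.shape[0] // ((H // window_size) * (W // window_size))
    x = windows.reshape(B, H // window_size, W // window_size, window_size, window_size, -1)
    x = x.transpose(0, 1, 3, 2, 4, 5)
    return x.reshape(B, H, W, -1)


x = np.arange(2 * 4 * 6 * 3).reshape(2, 4, 6, 3)  # [B, H, W, C]
windows = windows_partition(x, 2)                 # 2 * (4/2) * (6/2) = 12 windows
restored = window_reverse(windows, 2, 4, 6)

print(windows.shape)
print(np.array_equal(restored, x))
```

The first window is simply the top-left 2x2 block of the first image, and the windows are ordered row by row within each image.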

Next, self-attention is computed inside each window. Leaving the mask aside, this attention is no different from the self-attention in a standard Transformer.

class window_attention(nn.Layer):
    def __init__(self, dim, window_size, num_heads):
        super().__init__()
        self.dim = dim
        self.dim_head = dim // num_heads
        self.num_heads = num_heads
        self.scale = self.dim_head ** -0.5
        self.softmax = nn.Softmax(-1)
        self.qkv = nn.Linear(dim, int(dim * 3))
        self.proj = nn.Linear(dim, dim)

    def transpose_multi_head(self, x):
        new_shape = x.shape[:-1] + [self.num_heads, self.dim_head]
        x = x.reshape(new_shape)
        # [B, num_patches, num_heads, dim_head]
        x = x.transpose([0, 2, 1, 3])
        # [B, num_heads, num_patches, dim_head]
        return x

    def forward(self, x, mask=None):
        # B = batch_size * num_windows, N = window_size * window_size
        B, N, C = x.shape
        qkv = self.qkv(x).chunk(3, -1)
        q, k, v = map(self.transpose_multi_head, qkv)
        q = q * self.scale
        attn = paddle.matmul(q, k, transpose_y=True)  # [B, num_heads, N, N]
        if mask is None:
            attn = self.softmax(attn)
        else:
            # mask: [num_windows, N, N], broadcast over the batch and head axes
            attn = attn.reshape([B // mask.shape[0], mask.shape[0], self.num_heads, mask.shape[1], mask.shape[1]])
            attn = attn + mask.unsqueeze(1).unsqueeze(0)
            attn = attn.reshape([-1, self.num_heads, mask.shape[1], mask.shape[1]])
            attn = self.softmax(attn)
        attn = paddle.matmul(attn, v)
        # [B, num_heads, num_patches, dim_head]
        attn = attn.transpose([0, 2, 1, 3])
        # [B, num_patches, num_heads, dim_head]
        attn = attn.reshape([B, N, C])
        out = self.proj(attn)
        return out
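The masked branch relies on a simple trick: adding a large negative number to a score before the softmax drives the corresponding weight to practically zero. Here is a minimal NumPy sketch of that effect on a single 4-token window; the `region` labels and the all-zero scores are made-up values for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


scores = np.zeros((4, 4))        # pretend every query/key pair has the same raw score
region = np.array([0, 0, 1, 1])  # hypothetical region label of each token in the window

# 0 where two tokens share a region, -100 where they do not
mask = np.where(region[None, :] != region[:, None], -100.0, 0.0)

weights = softmax(scores + mask)
print(np.round(weights, 3))
```

Each token ends up attending only to tokens with the same label, while every row of the weight matrix still sums to 1.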

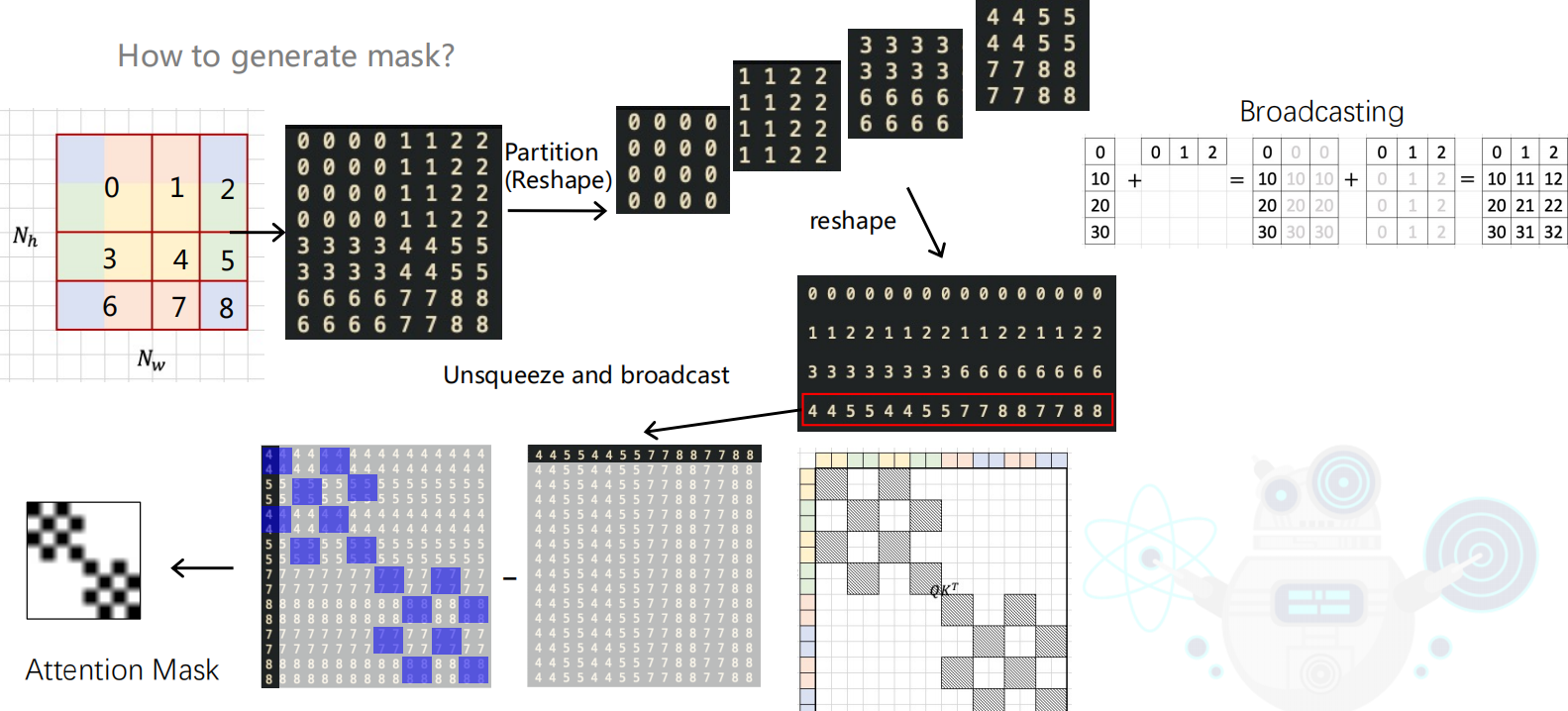

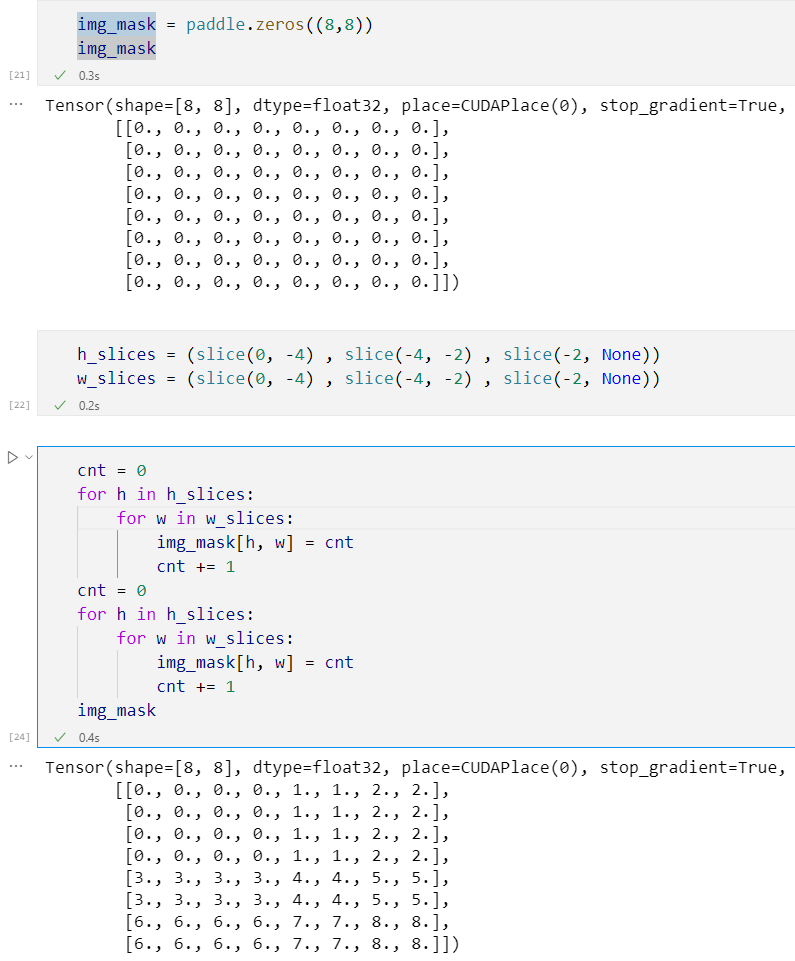

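As for how SW-MSA (Shifted Windows Multi-head Self-Attention) is actually implemented, see the blog post for details. Here I have written a few demos for the parts I found hardest, to make them easier to understand.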

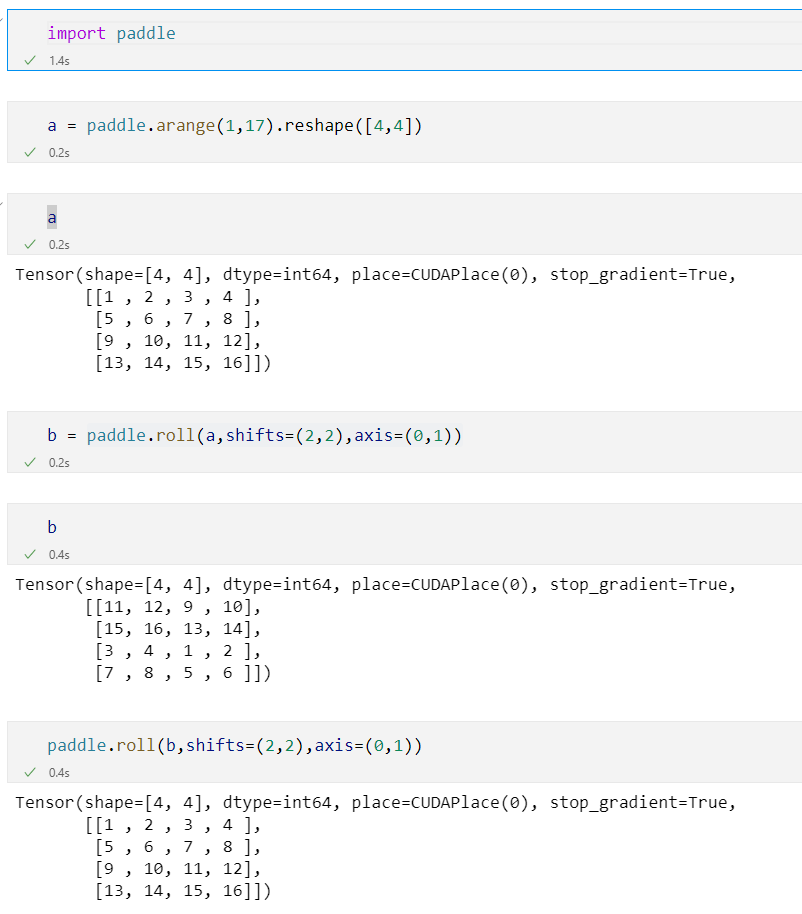

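About paddle.roll (the same as torch.roll): in the figure, b is a rolled by two positions along axis 0 and along axis 1, and applying the same operation to b again gives back a. Rolling twice restores the original only when the two shifts add up to a multiple of the axis length; in general, a roll is undone by rolling with the opposite sign.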
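The behaviour of a cyclic roll is easy to verify with NumPy's `np.roll`, used here as a stand-in for `paddle.roll`. The 4x4 array and the shift sizes are example values only.

```python
import numpy as np

a = np.arange(16).reshape(4, 4)

# Roll by 2 along both axes; on a length-4 axis, doing it twice wraps all the way around.
b = np.roll(a, shift=(2, 2), axis=(0, 1))
c = np.roll(b, shift=(2, 2), axis=(0, 1))

# A shift of -1 (as used before window attention) is undone by a shift of +1,
# not by another shift of -1.
shifted = np.roll(a, shift=(-1, -1), axis=(0, 1))
restored = np.roll(shifted, shift=(1, 1), axis=(0, 1))
not_restored = np.roll(shifted, shift=(-1, -1), axis=(0, 1))

print(np.array_equal(c, a))
print(np.array_equal(restored, a))
print(np.array_equal(not_restored, a))
```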

if self.shift_size > 0:
    H, W = self.resolution
    img_mask = paddle.zeros((1, H, W, 1))
    h_slices = (slice(0, -self.window_size),
                slice(-self.window_size, -self.shift_size),
                slice(-self.shift_size, None))
    w_slices = (slice(0, -self.window_size),
                slice(-self.window_size, -self.shift_size),
                slice(-self.shift_size, None))
    cnt = 0
    for h in h_slices:
        for w in w_slices:
            img_mask[:, h, w, :] = cnt
            cnt += 1
    mask_windows = windows_partition(img_mask, self.window_size)
    mask_windows = mask_windows.reshape((-1, self.window_size * self.window_size))
    attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
    attn_mask = paddle.where(attn_mask != 0,
                             paddle.ones_like(attn_mask) * float(-100.0),
                             attn_mask)
    attn_mask = paddle.where(attn_mask == 0,
                             paddle.zeros_like(attn_mask),
                             attn_mask)
else:
    attn_mask = None
self.register_buffer("attn_mask", attn_mask)

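In general, a network's state is saved as an OrderedDict, and it contains two kinds of entries. The first kind is the parameters held by the model's modules, i.e. nn.Parameter (we can of course also define additional nn.Parameter objects in the network). The second kind is buffers. Parameters are updated on every optim.step, while buffers are not. The attention mask is a fixed tensor rather than a trainable weight, which is why it is registered as a buffer.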

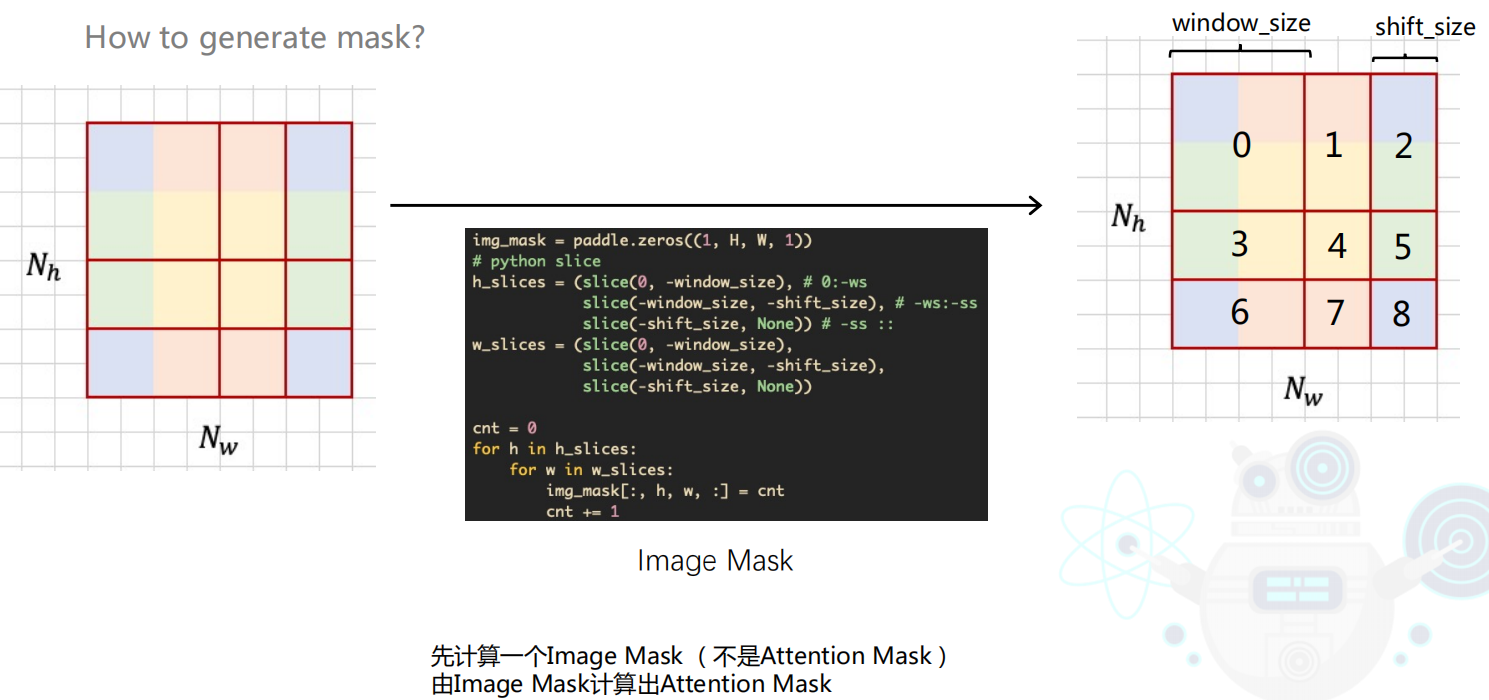

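Next, the mask is split into windows and each window is flattened. The flattened vector is then subtracted from itself with broadcasting, so that every pair of positions is compared, and every nonzero difference is replaced by -100. The result is the attention mask. (This is shown in the figure two above.)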
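The whole mask construction can be traced on a tiny case. The following NumPy sketch mirrors the steps of the Paddle snippet (label regions, split into windows, flatten, subtract with broadcasting) for a 4x4 map with window size 2 and shift size 1; these sizes and the function name `build_attn_mask` are chosen only for this example.

```python
import numpy as np


def build_attn_mask(H, W, window_size, shift_size):
    # 1. Label the regions that end up in the same window after the cyclic shift.
    img_mask = np.zeros((1, H, W, 1))
    slices = (slice(0, -window_size),
              slice(-window_size, -shift_size),
              slice(-shift_size, None))
    cnt = 0
    for h in slices:
        for w in slices:
            img_mask[:, h, w, :] = cnt
            cnt += 1
    # 2. Split into windows and flatten each window.
    x = img_mask.reshape(1, H // window_size, window_size, W // window_size, window_size, 1)
    x = x.transpose(0, 1, 3, 2, 4, 5).reshape(-1, window_size * window_size)
    # 3. Compare every pair of positions inside a window: 0 if same region, -100 otherwise.
    diff = x[:, None, :] - x[:, :, None]
    return img_mask[0, :, :, 0], np.where(diff != 0, -100.0, 0.0)


labels, mask = build_attn_mask(4, 4, window_size=2, shift_size=1)
print(labels)      # region label of every position
print(mask.shape)  # [num_windows, window_size**2, window_size**2]
print(mask[1])     # mask of the top-right window
```

The top-left window contains a single region, so its mask is all zeros, whereas the bottom-right window mixes four regions and only keeps the diagonal.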

class Identity(nn.Layer):
    def __init__(self):
        super().__init__()

    def forward(self, x):
        return x


class Mlp(nn.Layer):
    def __init__(self, embed_dim, mlp_ratio=4.0, dropout=0.):
        super().__init__()
        w_att_1, b_att_1 = self.init_weight()
        w_att_2, b_att_2 = self.init_weight()
        self.fc1 = nn.Linear(embed_dim, int(embed_dim * mlp_ratio), weight_attr=w_att_1, bias_attr=b_att_1)
        self.fc2 = nn.Linear(int(embed_dim * mlp_ratio), embed_dim, weight_attr=w_att_2, bias_attr=b_att_2)
        self.dropout = nn.Dropout(dropout)
        self.act = nn.GELU()

    def init_weight(self):
        weight_attr = paddle.ParamAttr(initializer=nn.initializer.TruncatedNormal(std=0.2))
        bias_attr = paddle.ParamAttr(initializer=nn.initializer.Constant(.0))
        return weight_attr, bias_attr

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.dropout(x)
        x = self.fc2(x)
        x = self.dropout(x)
        return x

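Once all the modules are written, they need to be chained together into a Swin block. Apart from having to choose between W-MSA and SW-MSA, it is no different from the encoder block of a Transformer. After patch embedding, the patches are split into windows, W-MSA or SW-MSA is applied inside each window and followed by a residual connection, then comes the MLP, followed by another residual connection.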

class SwinBlock(nn.Layer):
    def __init__(self, dim, input_resolution, num_heads, window_size, shift_size):
        super().__init__()
        self.dim = dim
        self.resolution = input_resolution
        self.window_size = window_size
        self.att_norm = nn.LayerNorm(dim)
        self.attn = window_attention(dim=dim, window_size=window_size, num_heads=num_heads)
        self.mlp = Mlp(dim)
        self.shift_size = shift_size
        self.mlp_norm = nn.LayerNorm(dim)
        # SW-MSA blocks need a mask so that positions brought together only by
        # the cyclic shift do not attend to each other.
        if self.shift_size > 0:
            H, W = self.resolution
            img_mask = paddle.zeros((1, H, W, 1))
            h_slices = (slice(0, -self.window_size),
                        slice(-self.window_size, -self.shift_size),
                        slice(-self.shift_size, None))
            w_slices = (slice(0, -self.window_size),
                        slice(-self.window_size, -self.shift_size),
                        slice(-self.shift_size, None))
            cnt = 0
            for h in h_slices:
                for w in w_slices:
                    img_mask[:, h, w, :] = cnt
                    cnt += 1
            mask_windows = windows_partition(img_mask, self.window_size)
            mask_windows = mask_windows.reshape((-1, self.window_size * self.window_size))
            attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
            attn_mask = paddle.where(attn_mask != 0,
                                     paddle.ones_like(attn_mask) * float(-100.0),
                                     attn_mask)
            attn_mask = paddle.where(attn_mask == 0,
                                     paddle.zeros_like(attn_mask),
                                     attn_mask)
        else:
            attn_mask = None
        self.register_buffer("attn_mask", attn_mask)

    def forward(self, x):
        H, W = self.resolution
        B, N, C = x.shape
        h = x  # kept for the first residual connection
        x = self.att_norm(x)
        x = x.reshape([B, H, W, C])
        if self.shift_size > 0:
            # cyclic shift towards the top-left
            shift_x = paddle.roll(x, shifts=(-self.shift_size, -self.shift_size), axis=(1, 2))
        else:
            shift_x = x
        x_windows = windows_partition(shift_x, self.window_size)
        x_windows = x_windows.reshape([-1, self.window_size * self.window_size, C])
        attn_windows = self.attn(x_windows, mask=self.attn_mask)
        attn_windows = attn_windows.reshape([-1, self.window_size, self.window_size, C])
        shifted_x = window_reverse(attn_windows, self.window_size, H, W)
        if self.shift_size > 0:
            # undo the cyclic shift: roll back with the opposite (positive) sign
            x = paddle.roll(shifted_x, shifts=(self.shift_size, self.shift_size), axis=(1, 2))
        else:
            x = shifted_x
        x = x.reshape([B, -1, C])
        x = h + x
        h = x  # kept for the second residual connection
        x = self.mlp_norm(x)
        x = self.mlp(x)
        x = h + x
        return x

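A stage is built from several Swin Transformer Blocks and one Patch Merging layer. In the code below, the Patch Merging layer is applied at the end of the stage, and a stage created without one uses Identity instead.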

class SwinTransformerStage(nn.Layer):
    def __init__(self, dim, input_resolution, depth, num_heads, window_size, patch_merging=None):
        super().__init__()
        self.blocks = nn.LayerList()
        for i in range(depth):
            # even blocks use W-MSA (shift_size=0), odd blocks use SW-MSA (shift_size=window_size//2)
            self.blocks.append(SwinBlock(dim=dim, input_resolution=input_resolution, num_heads=num_heads,
                                         window_size=window_size,
                                         shift_size=0 if (i % 2 == 0) else window_size // 2))
        if patch_merging is None:
            self.patch_merging = Identity()
        else:
            self.patch_merging = patch_merging(input_resolution, dim)

    def forward(self, x):
        for block in self.blocks:
            x = block(x)
        x = self.patch_merging(x)
        return x
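Since every Patch Merging layer halves the resolution and doubles the depth, the input size of each stage can be worked out ahead of time. The helper below is a small pure-Python sketch; the name `stage_shapes` is made up, and the four-stage setting is an assumption that follows the Swin-T layout from the paper rather than anything fixed by the code above.

```python
def stage_shapes(image_size, patch_size, embed_dim, num_stages, window_size):
    """Return (resolution, dim) at the input of every stage."""
    res = image_size // patch_size
    shapes = []
    for i in range(num_stages):
        r = res // 2 ** i
        # windows_partition needs the resolution to be divisible by the window size
        if r % window_size != 0:
            raise ValueError(f"resolution {r} is not divisible by window size {window_size}")
        shapes.append((r, embed_dim * 2 ** i))
    return shapes


shapes = stage_shapes(image_size=224, patch_size=4, embed_dim=96, num_stages=4, window_size=7)
print(shapes)
```

With a 224x224 input and a 7x7 window, every stage resolution divides evenly into windows, which is one reason this combination of sizes is convenient.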

class Swin(nn.Layer):
    def __init__(self,
                 image_size=224,
                 patch_size=4,
                 in_channels=3,
                 embed_dim=96,
                 window_size=7,
                 num_heads=[3, 6, 12, 24],
                 # zip() below pairs depths with num_heads, so these three entries build
                 # three stages, which is what the printed structure shows;
                 # Swin-T in the paper uses [2, 2, 6, 2]
                 depths=[2, 2, 62],
                 num_classes=1000):
        super().__init__()
        self.num_classes = num_classes
        self.depths = depths
        self.num_heads = num_heads
        self.embed_dim = embed_dim
        self.num_stages = len(depths)
        self.num_features = int(self.embed_dim * 2 ** (self.num_stages - 1))
        self.patch_resolution = [image_size // patch_size, image_size // patch_size]
        self.patch_embedding = PatchEmbedding(patch_size=patch_size, embed_dim=embed_dim)
        self.stages = nn.LayerList()
        for idx, (depth, n_heads) in enumerate(zip(self.depths, self.num_heads)):
            stage = SwinTransformerStage(dim=int(self.embed_dim * 2 ** idx),
                                         input_resolution=(self.patch_resolution[0] // (2 ** idx),
                                                           self.patch_resolution[1] // (2 ** idx)),
                                         depth=depth,
                                         num_heads=n_heads,
                                         window_size=window_size,
                                         patch_merging=PatchMerging if (idx < self.num_stages - 1) else None)
            self.stages.append(stage)
        self.norm = nn.LayerNorm(self.num_features)
        self.avgpool = nn.AdaptiveAvgPool1D(1)
        self.fc = nn.Linear(self.num_features, self.num_classes)

    def forward(self, x):
        x = self.patch_embedding(x)
        for stage in self.stages:
            x = stage(x)
        x = self.norm(x)
        x = x.transpose([0, 2, 1])  # [B, num_features, num_patches]
        x = self.avgpool(x)  # [B, num_features, 1]
        x = x.flatten(1)  # [B, num_features]
        x = self.fc(x)
        return x


model = Swin()
print(model)
# dummy input batch [B, 3, 224, 224]; the batch size of 4 is an arbitrary choice
t = paddle.randn([4, 3, 224, 224])
out = model(t)
print(out.shape)

Swin(

(patch_embedding): PatchEmbedding(

(patch_embed): Conv2D(3, 96, kernel_size=[4, 4], stride=[4, 4], data_format=NCHW)

(norm): LayerNorm(normalized_shape=[96], epsilon=1e-05)

)

(stages): LayerList(

(0): SwinTransformerStage(

(blocks): LayerList(

(0): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[96], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=96, out_features=288, dtype=float32)

(proj): Linear(in_features=96, out_features=96, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=96, out_features=384, dtype=float32)

(fc2): Linear(in_features=384, out_features=96, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[96], epsilon=1e-05)

)

(1): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[96], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=96, out_features=288, dtype=float32)

(proj): Linear(in_features=96, out_features=96, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=96, out_features=384, dtype=float32)

(fc2): Linear(in_features=384, out_features=96, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[96], epsilon=1e-05)

)

)

(patch_merging): PatchMerging(

(reduction): Linear(in_features=384, out_features=192, dtype=float32)

(norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

)

(1): SwinTransformerStage(

(blocks): LayerList(

(0): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[192], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=192, out_features=576, dtype=float32)

(proj): Linear(in_features=192, out_features=192, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=192, out_features=768, dtype=float32)

(fc2): Linear(in_features=768, out_features=192, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[192], epsilon=1e-05)

)

(1): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[192], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=192, out_features=576, dtype=float32)

(proj): Linear(in_features=192, out_features=192, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=192, out_features=768, dtype=float32)

(fc2): Linear(in_features=768, out_features=192, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[192], epsilon=1e-05)

)

)

(patch_merging): PatchMerging(

(reduction): Linear(in_features=768, out_features=384, dtype=float32)

(norm): LayerNorm(normalized_shape=[768], epsilon=1e-05)

)

)

(2): SwinTransformerStage(

(blocks): LayerList(

(0): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(1): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(2): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(3): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(4): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(5): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(6): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(7): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(8): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(9): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(10): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(11): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(12): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(13): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(14): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(15): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(16): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(17): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(18): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(19): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(20): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(21): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(22): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(23): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(24): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(25): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(26): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(27): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(28): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(29): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(30): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(31): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(32): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(33): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(34): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(35): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(36): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(37): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(38): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(39): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(40): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(41): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(42): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(43): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(44): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(45): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(46): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(47): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(48): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(49): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(50): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(51): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(52): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(53): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(54): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(55): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(56): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(57): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(58): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(59): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(60): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

(61): SwinBlock(

(att_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(attn): window_attention(

(softmax): Softmax(axis=-1)

(qkv): Linear(in_features=384, out_features=1152, dtype=float32)

(proj): Linear(in_features=384, out_features=384, dtype=float32)

)

(mlp): Mlp(

(fc1): Linear(in_features=384, out_features=1536, dtype=float32)

(fc2): Linear(in_features=1536, out_features=384, dtype=float32)

(dropout): Dropout(p=0.0, axis=None, mode=upscale_in_train)

(act): GELU(approximate=False)

)

(mlp_norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

)

)

(patch_merging): Identity()

)

)

(norm): LayerNorm(normalized_shape=[384], epsilon=1e-05)

(avgpool): AdaptiveAvgPool1D(output_size=1)

(fc): Linear(in_features=384, out_features=1000, dtype=float32)

)

---------------------------------------------------------------------------

NameError Traceback (most recent call last)

/tmp/ipykernel_790/2976751405.py in <module>

1 model = Swin()

2 print(model)

----> 3 out = model(t)

4 print(out.shape)

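NameError: name 't' is not defined

The model itself builds and prints correctly. The forward pass fails only because the input tensor `t` was never created in this session. Defining it before line 3, for example `t = paddle.randn([1, 3, 224, 224])` (the 224x224 input size is an assumption and has to match the resolution the model was built for), should let `model(t)` run and print the output shape.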
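The printed SwinBlock entries above all share the same sublayers, so their size can be checked by hand. The following is a minimal sketch that counts the learnable parameters of one block from the printed layer shapes only. The helper names (`linear_params`, `layer_norm_params`, `swin_block_params`) are hypothetical, and the count assumes that every Linear layer has a bias and every LayerNorm has a learnable scale and shift, which the printout does not show. Parameters that are not registered as sublayers (such as a relative position bias table, if the attention module has one) are not included.

```python
def linear_params(in_features, out_features, bias=True):
    """Weights plus optional bias of a fully connected layer (bias is assumed)."""
    return in_features * out_features + (out_features if bias else 0)


def layer_norm_params(dim):
    """Scale and shift vectors of a LayerNorm (learnable affine is assumed)."""
    return 2 * dim


def swin_block_params(dim=384, mlp_ratio=4):
    """Count parameters of the sublayers printed for one SwinBlock.

    dim=384 and mlp_ratio=4 are read off the printout (1536 / 384 = 4).
    """
    counts = {
        "qkv": linear_params(dim, 3 * dim),          # 384 -> 1152
        "proj": linear_params(dim, dim),             # 384 -> 384
        "fc1": linear_params(dim, mlp_ratio * dim),  # 384 -> 1536
        "fc2": linear_params(mlp_ratio * dim, dim),  # 1536 -> 384
        "norms": 2 * layer_norm_params(dim),         # att_norm + mlp_norm
    }
    counts["total"] = sum(counts.values())
    return counts


counts = swin_block_params()
for name, n in counts.items():
    print(f"{name:>6}: {n:,}")

# Classification head printed at the end of the model: Linear(384 -> 1000)
print(f"    fc: {linear_params(384, 1000):,}")
```

Under these assumptions, the two MLP layers account for roughly two thirds of each block, which is why the MLP expansion ratio has a large effect on total model size.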
